1. Introduction

Hey there! So, you're into the idea of agents – little helpers that can get things done for you without you even lifting a finger, right? Awesome! But let's be real, one agent isn't always going to cut it, especially when you're tackling bigger, more complex projects. You're probably going to need a whole team of them! That's where multi-agent systems come in.

Agents, when powered by LLMs, give you incredible flexibility compared to old-school hard coding. But, and there's always a but, they come with their own set of tricky challenges. And that's exactly what we're going to dive into in this workshop!

Here's what you can expect to learn – think of it as leveling up your agent game:

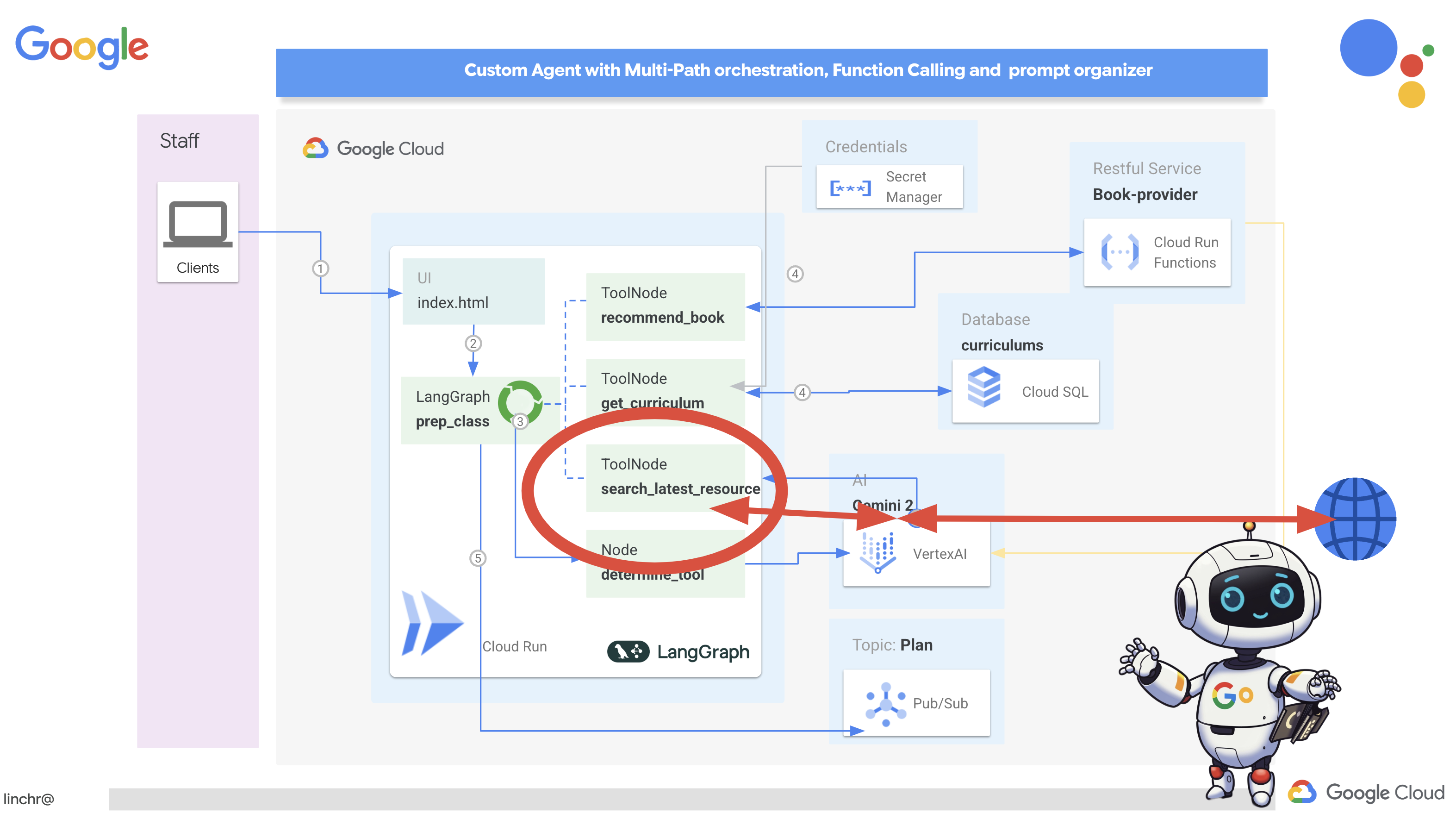

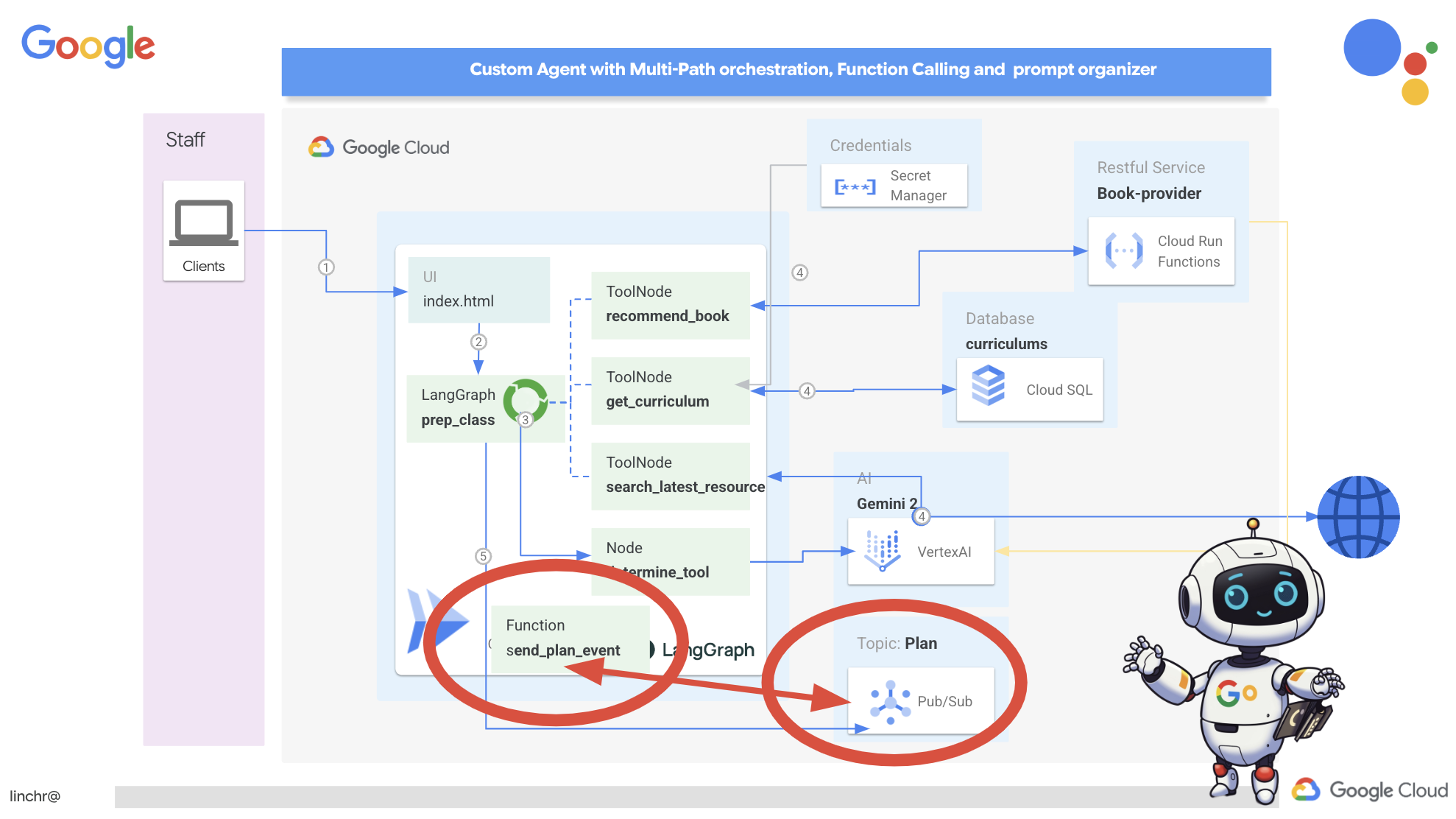

Building Your First Agent with LangGraph: We'll get our hands dirty building your very own agent using LangGraph, a popular framework. You'll learn how to create tools that connect to databases, tap into the latest Gemini 2 API for some internet searching, and optimize the prompts and responses, so your agent can interact not only with LLMs but also with existing services. We'll also show you how function calling works.

Agent Orchestration, Your Way: We'll explore different ways to orchestrate your agents, from simple straight paths to more complex multi-path scenarios. Think of it as directing the flow of your agent team.

Multi-Agent Systems: You'll discover how to set up a system where your agents can collaborate, and get things done together – all thanks to an event-driven architecture.

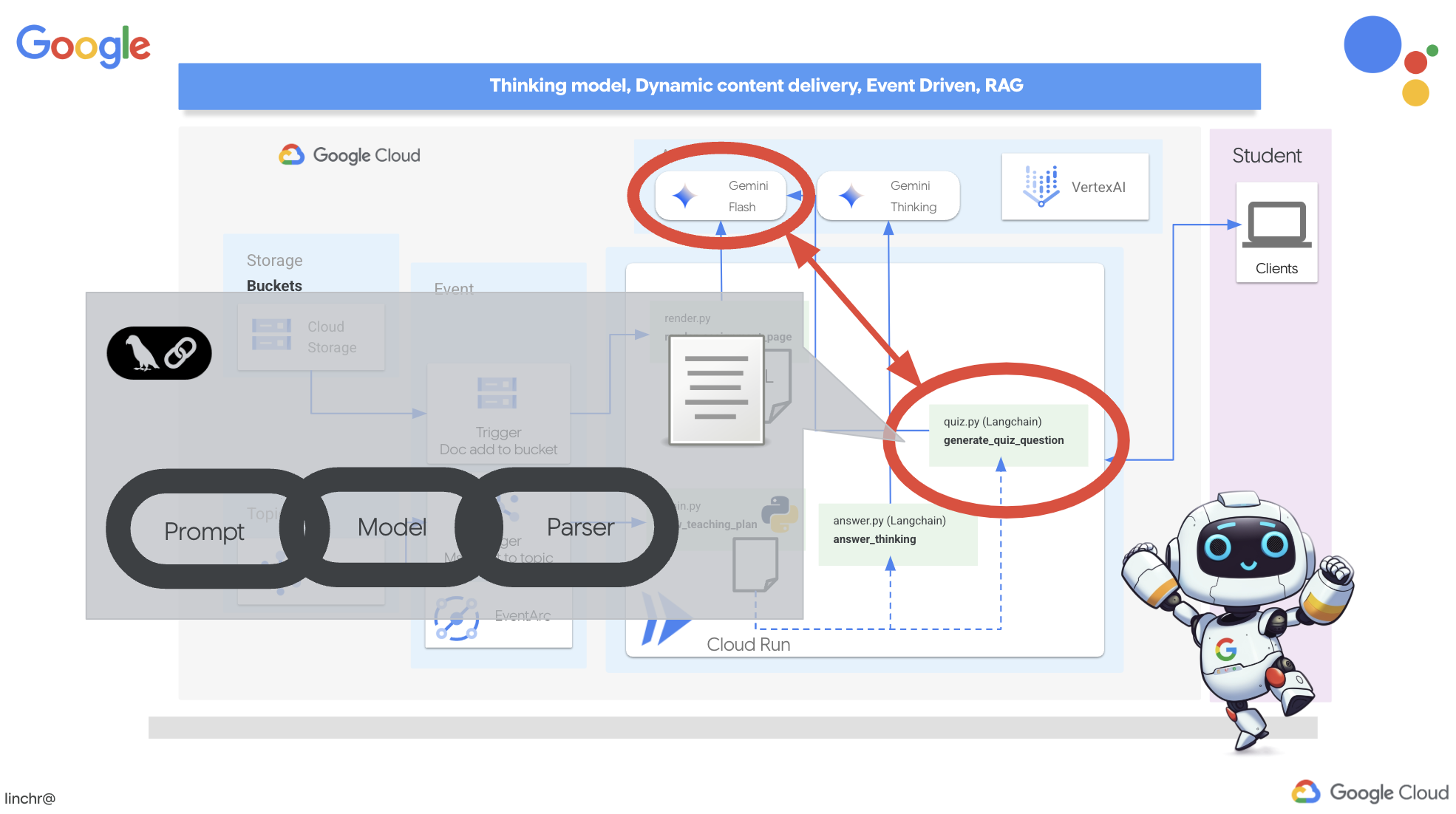

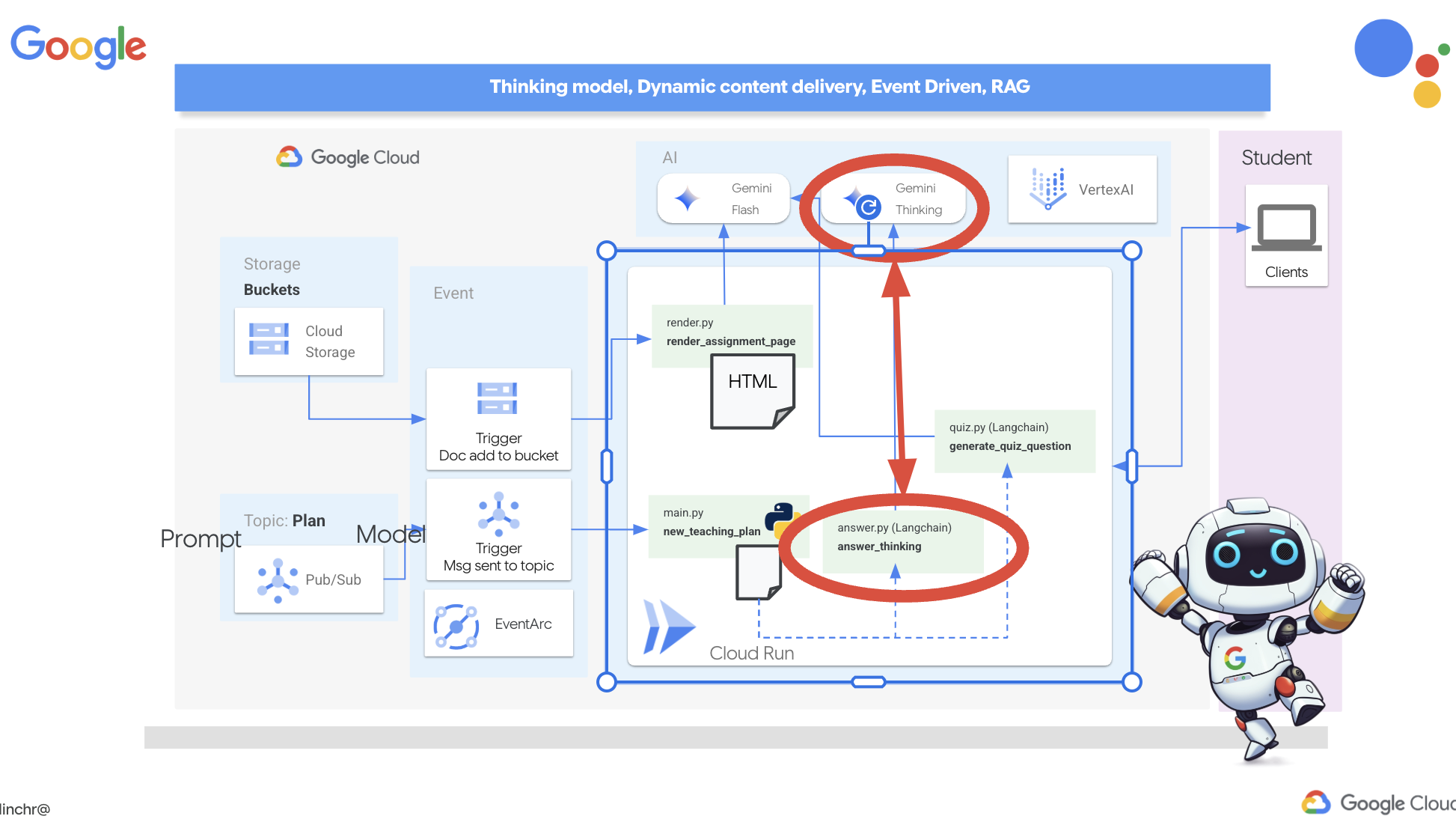

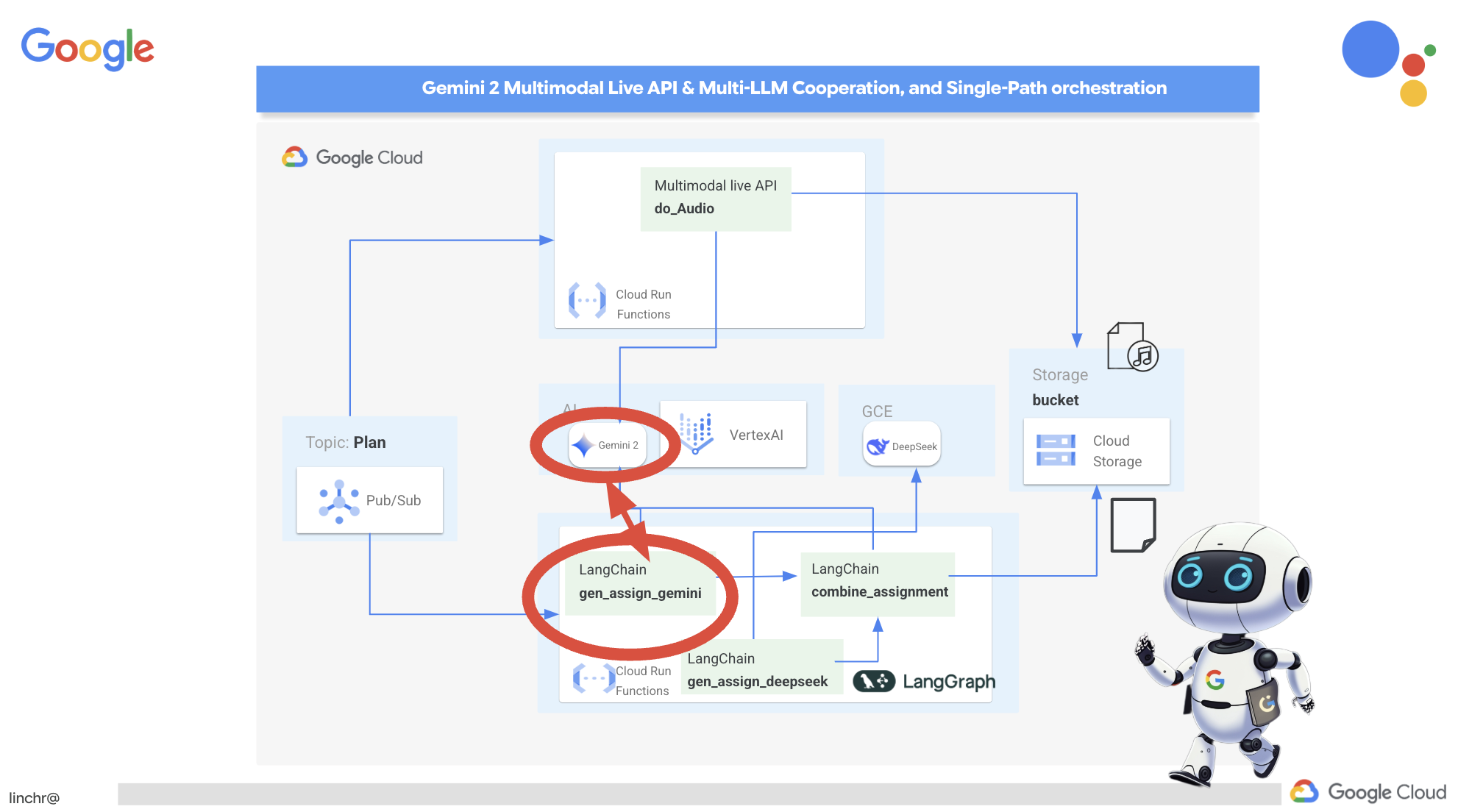

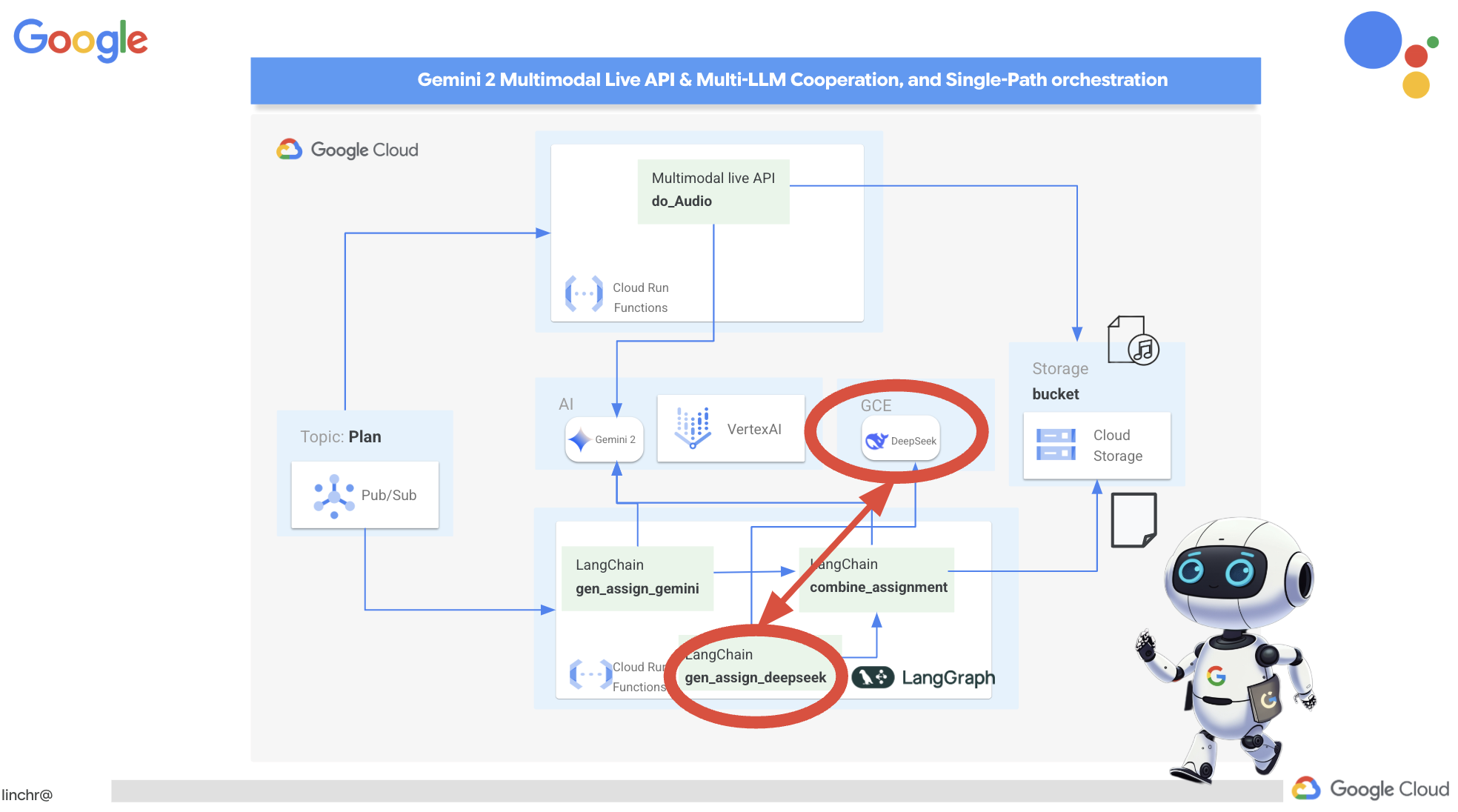

LLM Freedom: Use the Best for the Job: We're not stuck on just one LLM! You'll see how to use multiple LLMs, assigning them different roles to boost problem-solving power using cool "thinking models."

Dynamic Content? No Problem!: Imagine your agent creating dynamic content that's tailored specifically for each user, in real-time. We'll show you how to do it!

Taking it to the Cloud with Google Cloud: Forget just playing around in a notebook. We'll show you how to architect and deploy your multi-agent system on Google Cloud so it's ready for the real world!

This project will be a good example of how to use all the techniques we talked about.

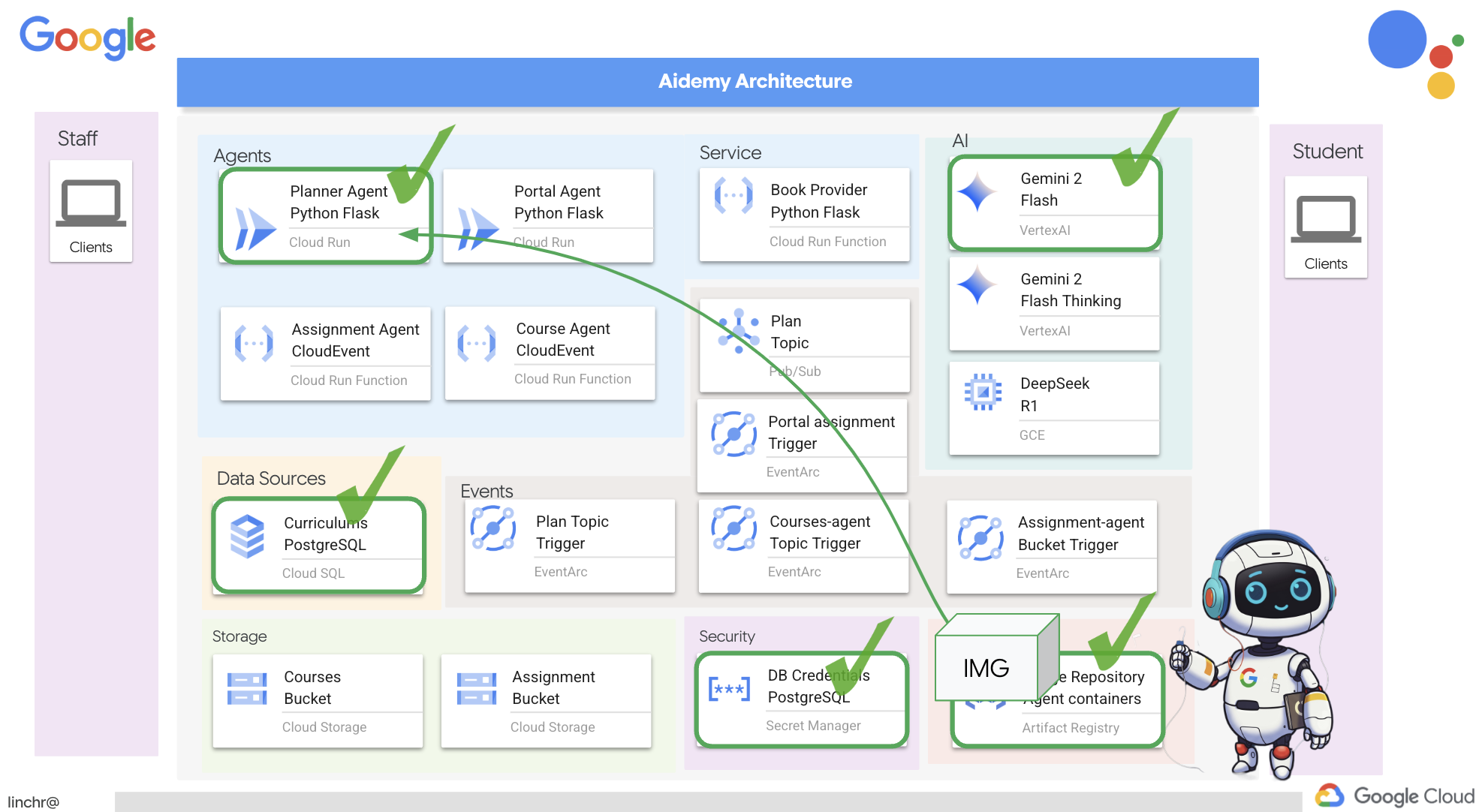

2. Architecture

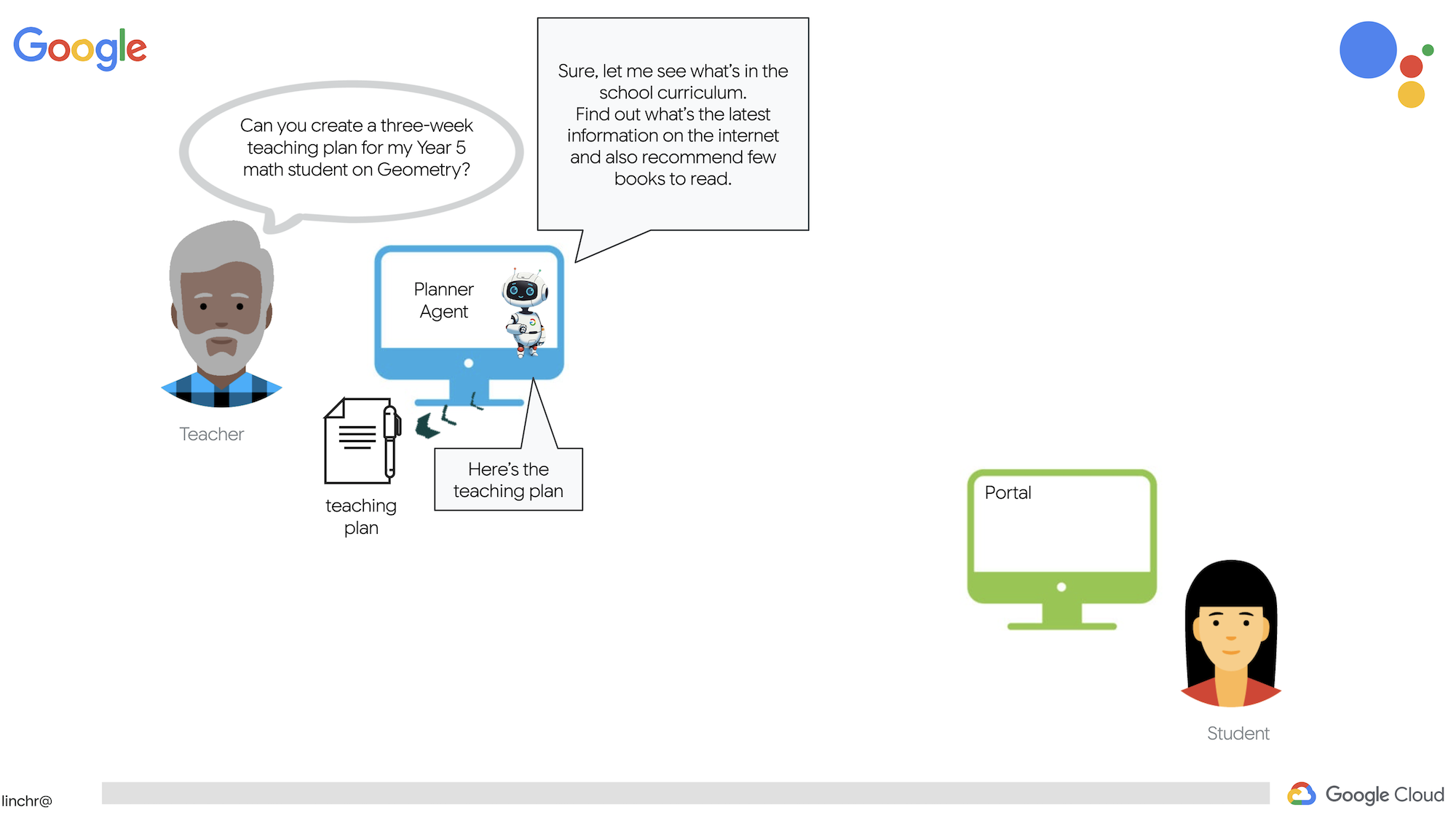

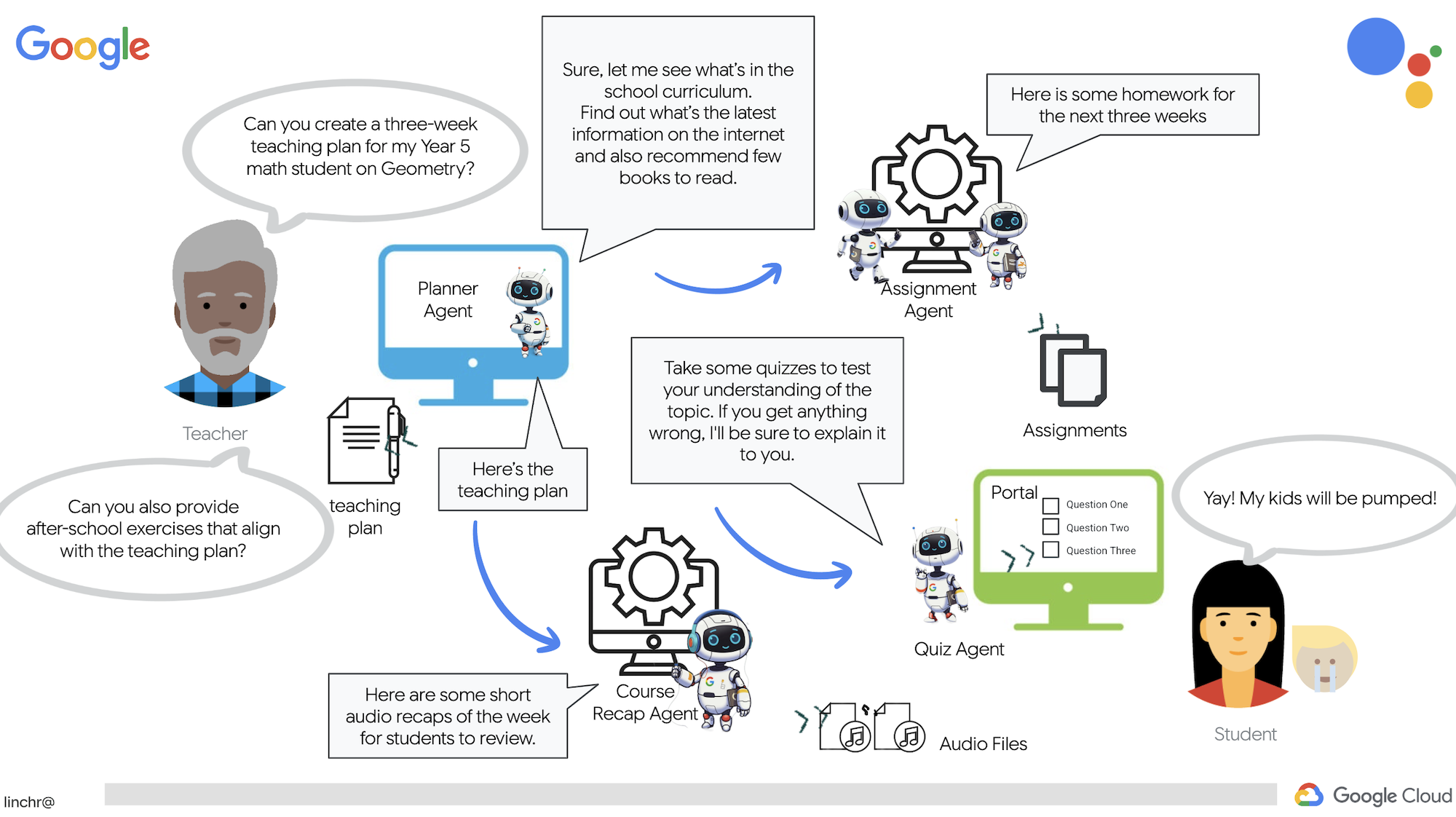

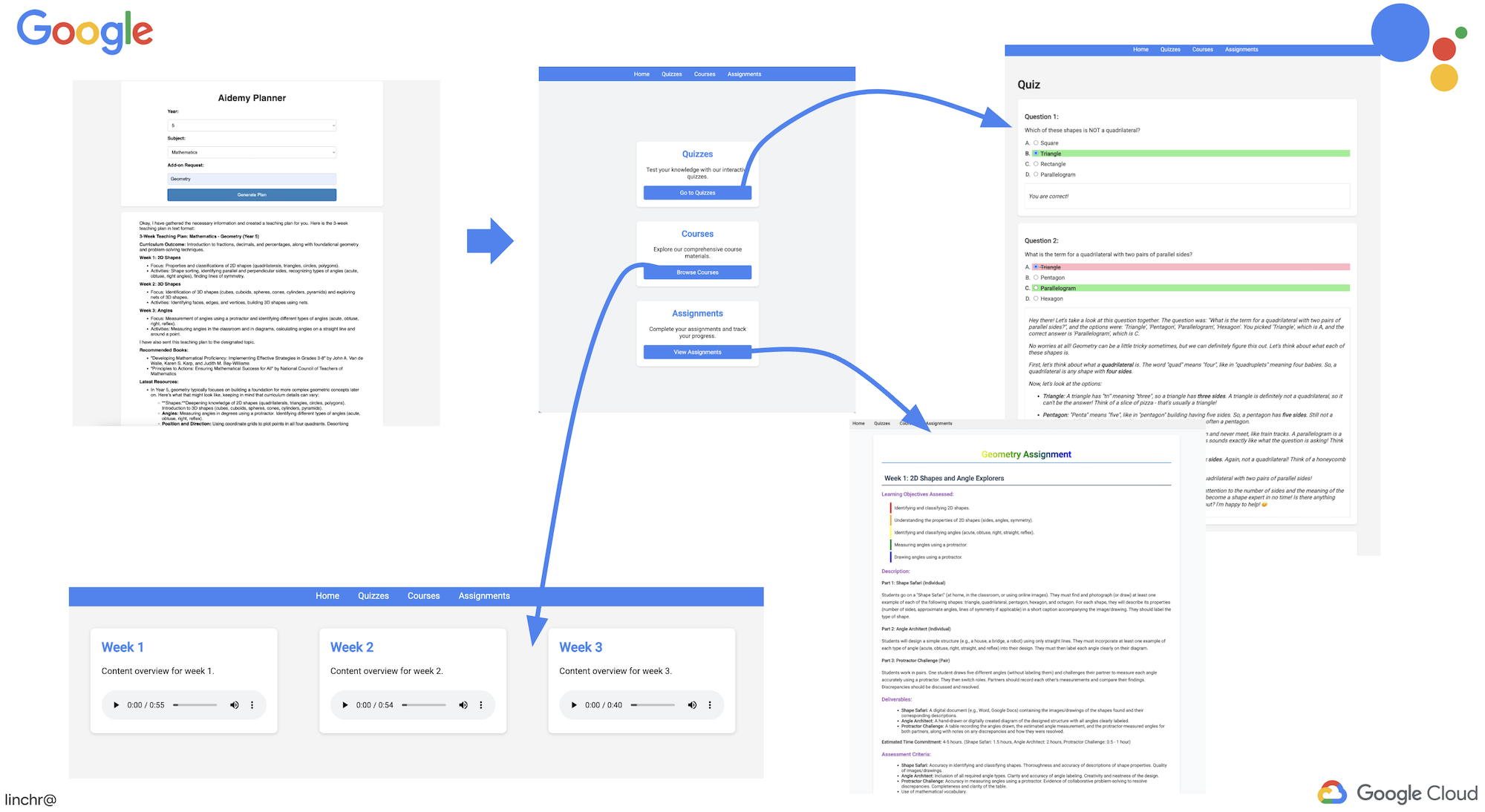

Being a teacher or working in education can be super rewarding, but let's face it, the workload, especially all the prep work, can be challenging! Plus, there's often not enough staff and tutoring can be expensive. That's why we're proposing an AI-powered teaching assistant. This tool can lighten the load for educators and help bridge the gap caused by staff shortages and the lack of affordable tutoring.

Our AI teaching assistant can whip up detailed lesson plans, fun quizzes, easy-to-follow audio recaps, and personalized assignments. This lets teachers focus on what they do best: connecting with students and helping them fall in love with learning.

The system has two sites: one for teachers to create lesson plans for upcoming weeks, and one for students to access quizzes, audio recaps, and assignments.

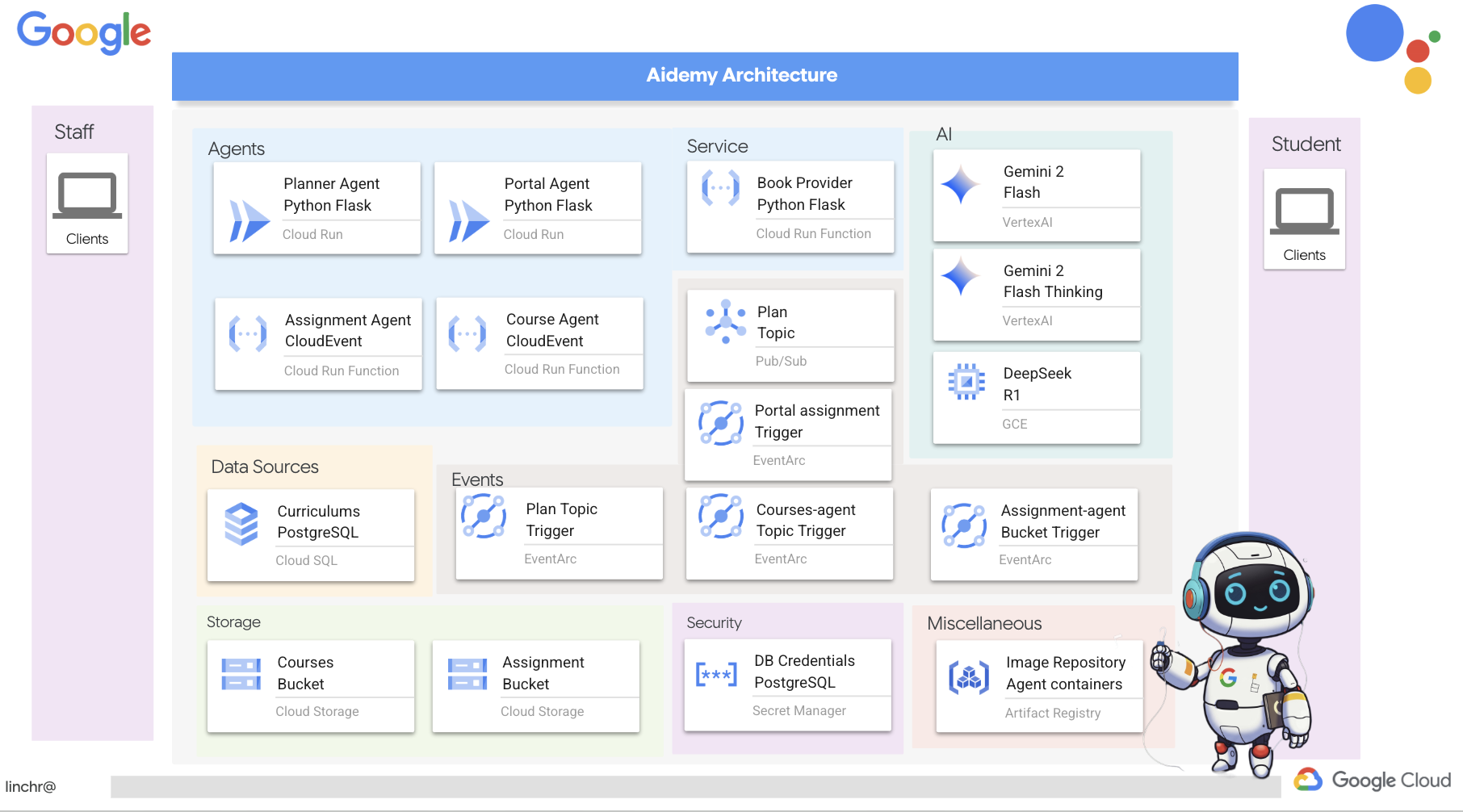

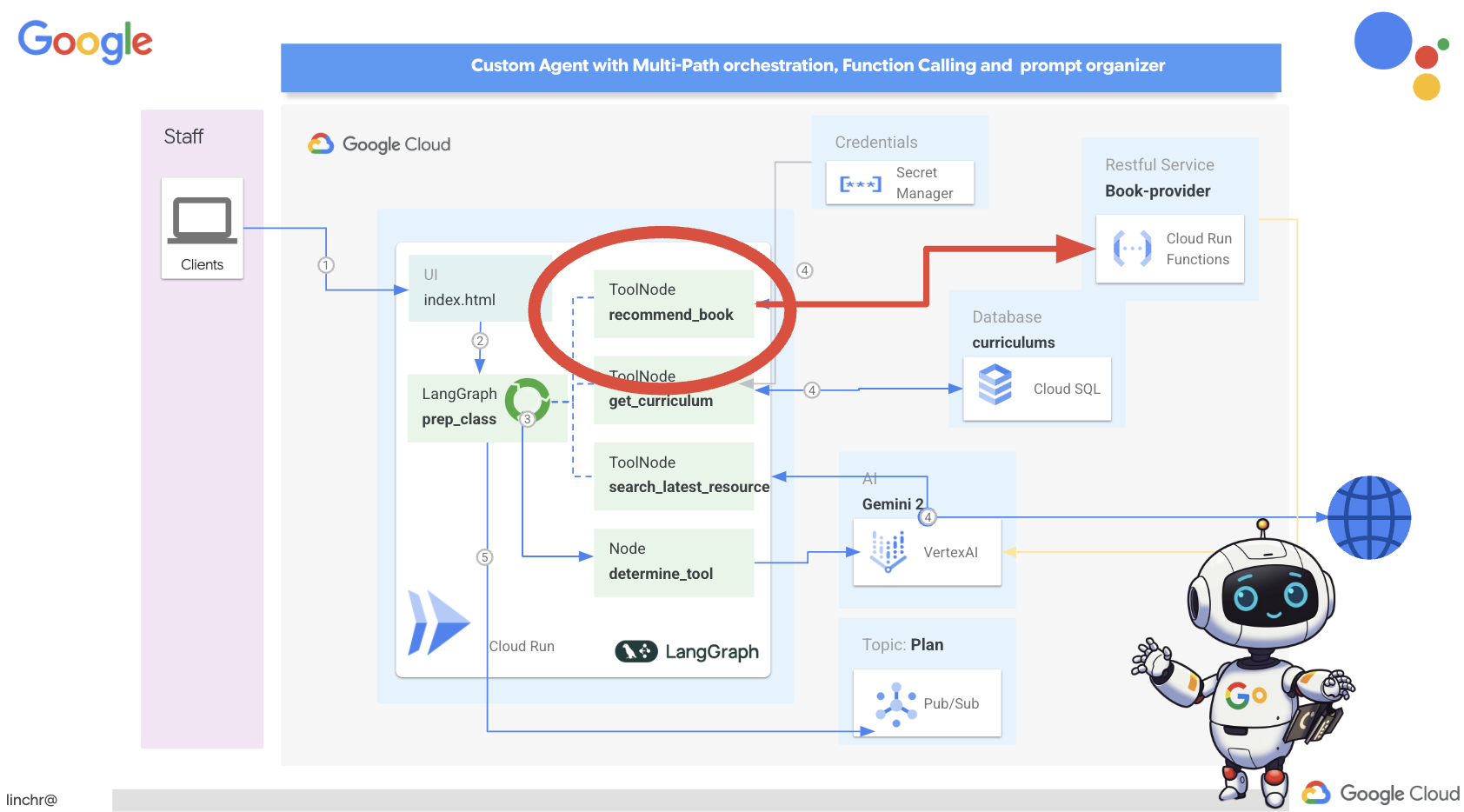

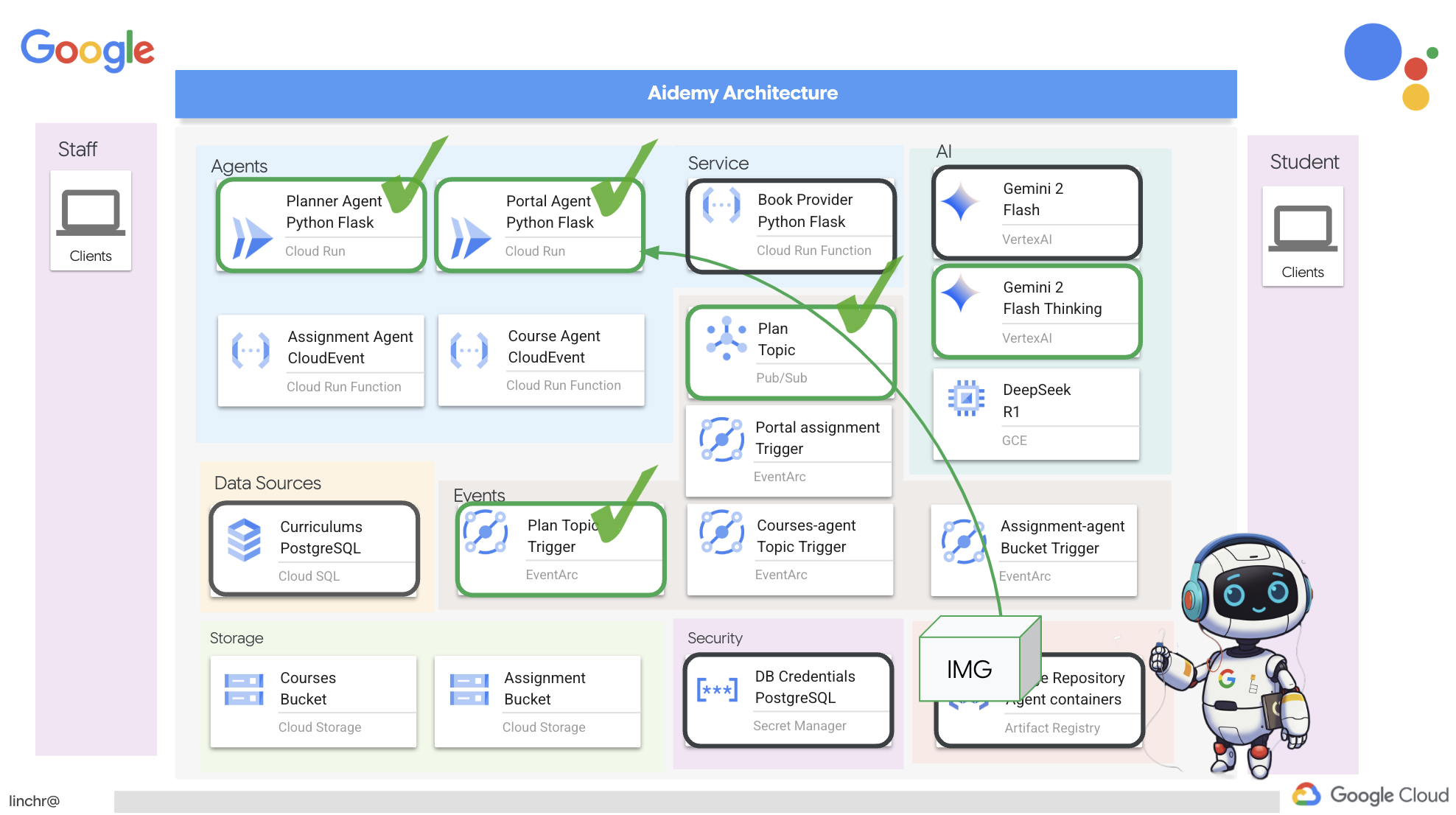

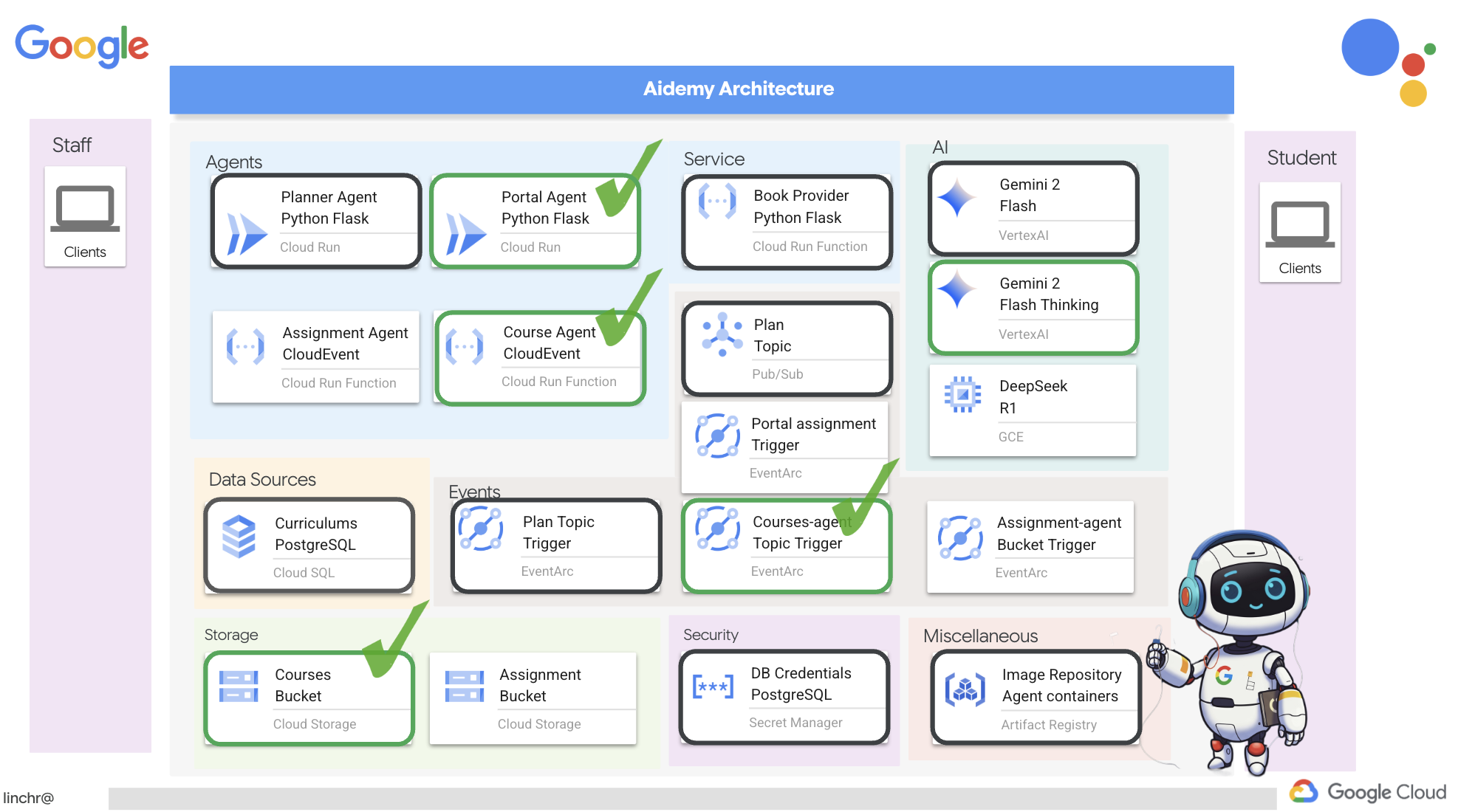

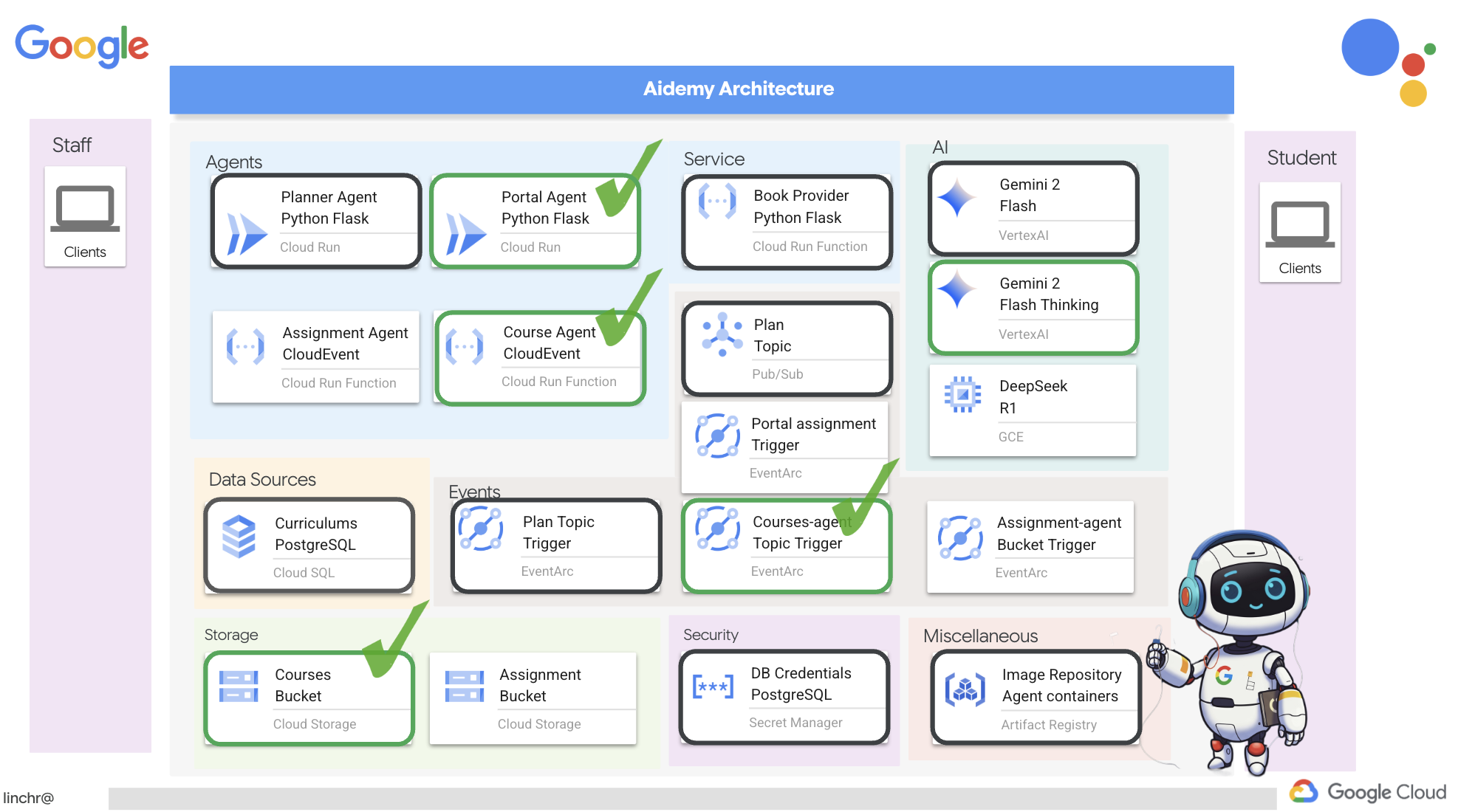

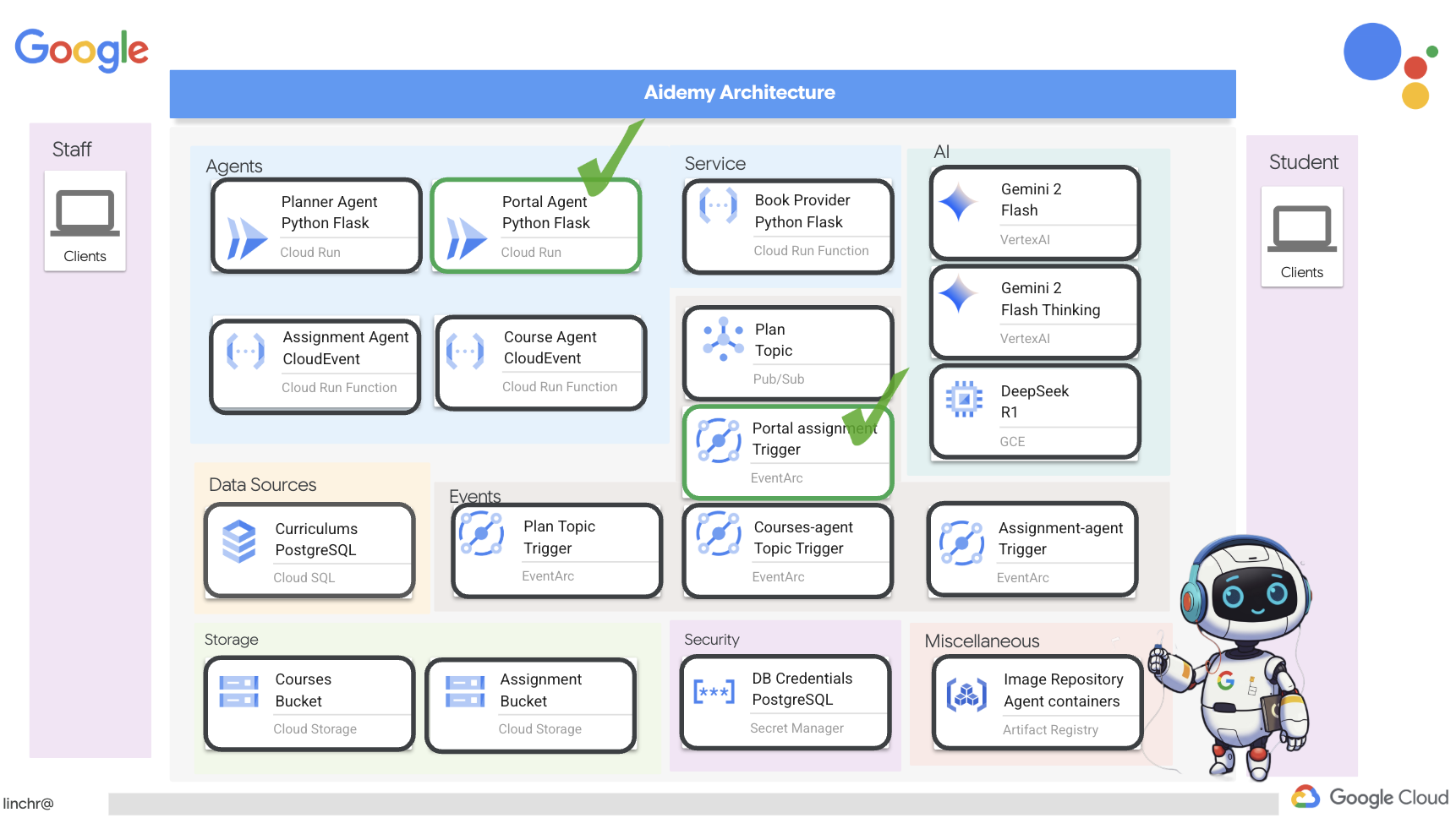

Alright, let's walk through the architecture powering our teaching assistant, Aidemy. As you can see, we've broken it down into several key components, all working together to make this happen.

Key Architectural Elements and Technologies:

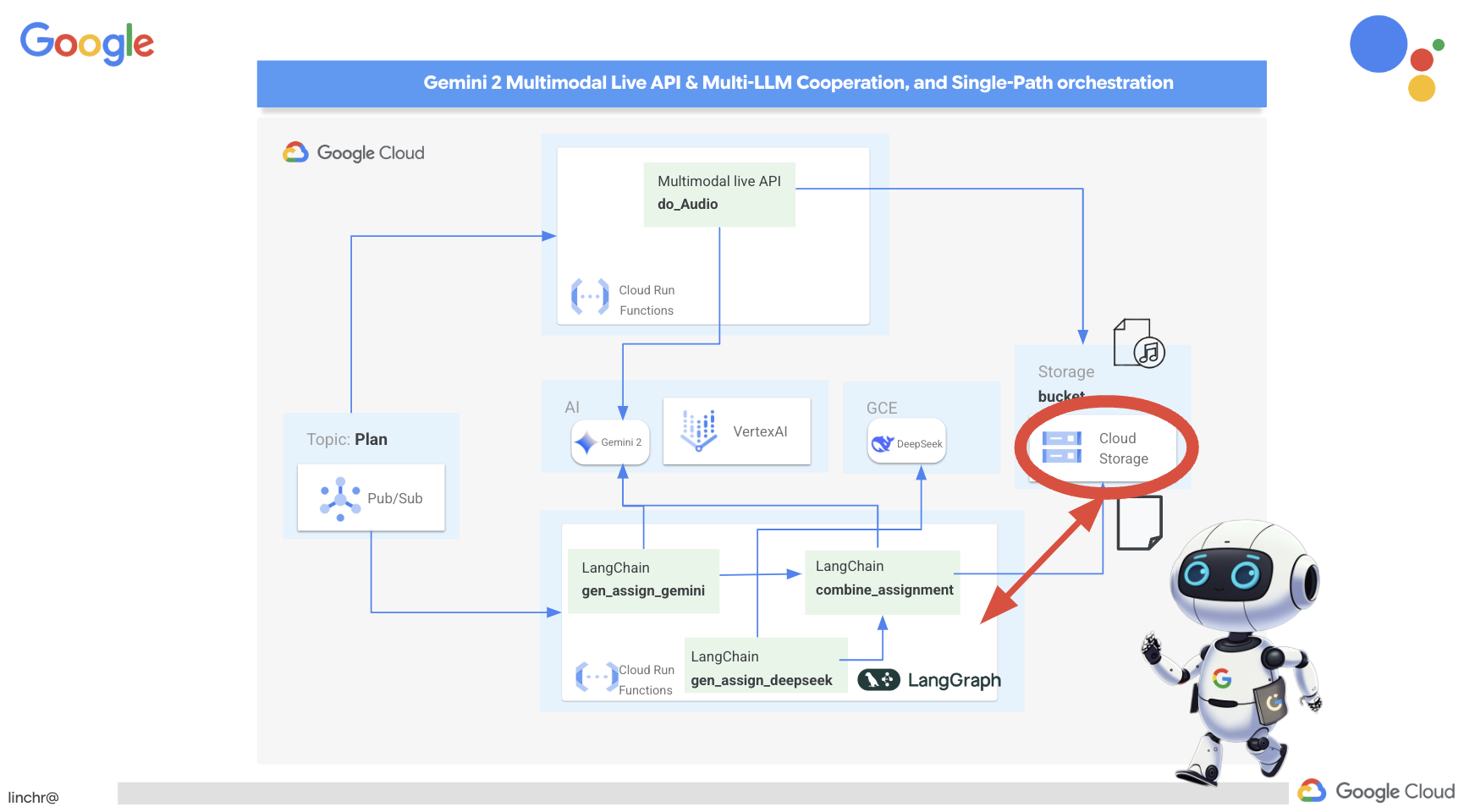

Google Cloud Platform (GCP): Central to the entire system:

- Vertex AI: Accesses Google's Gemini LLMs.

- Cloud Run: Serverless platform for deploying containerized agents and functions.

- Cloud SQL: PostgreSQL database for curriculum data.

- Pub/Sub & Eventarc: Foundation of the event-driven architecture, enabling asynchronous communication between components.

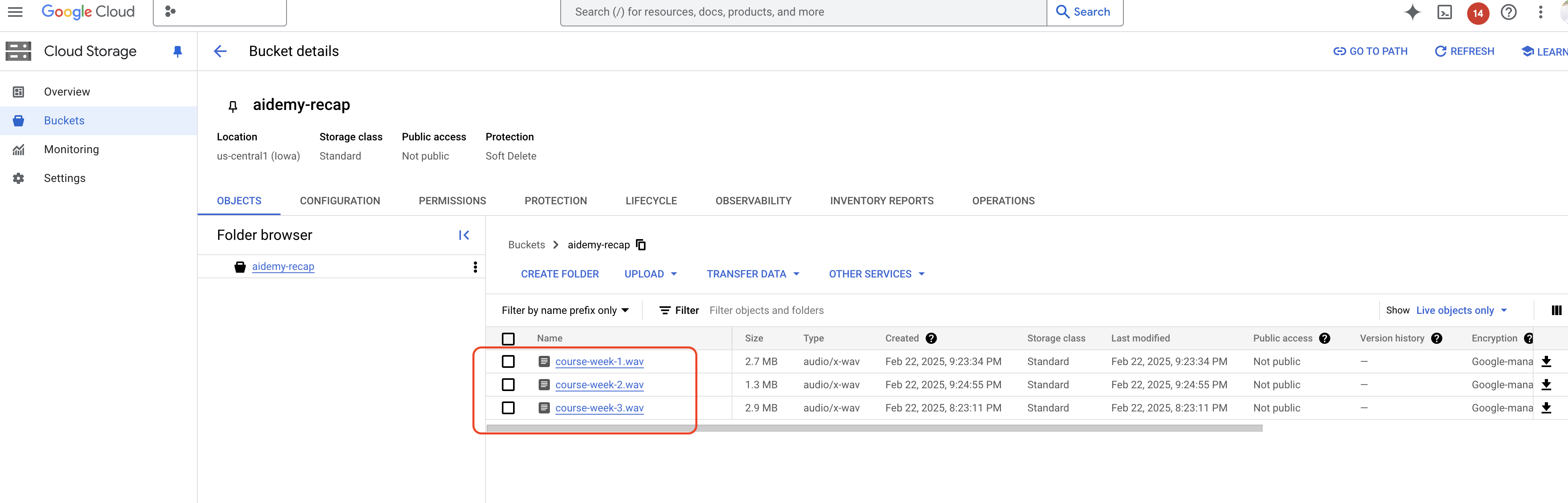

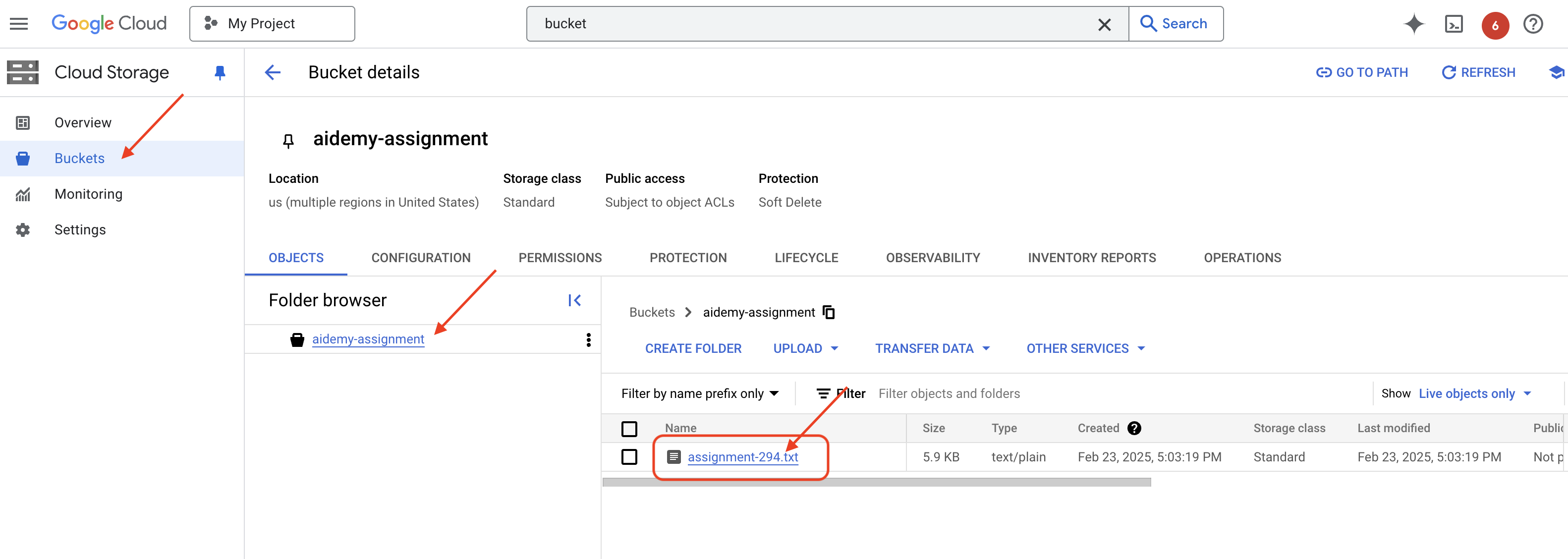

- Cloud Storage: Stores audio recaps and assignment files.

- Secret Manager: Securely manages database credentials.

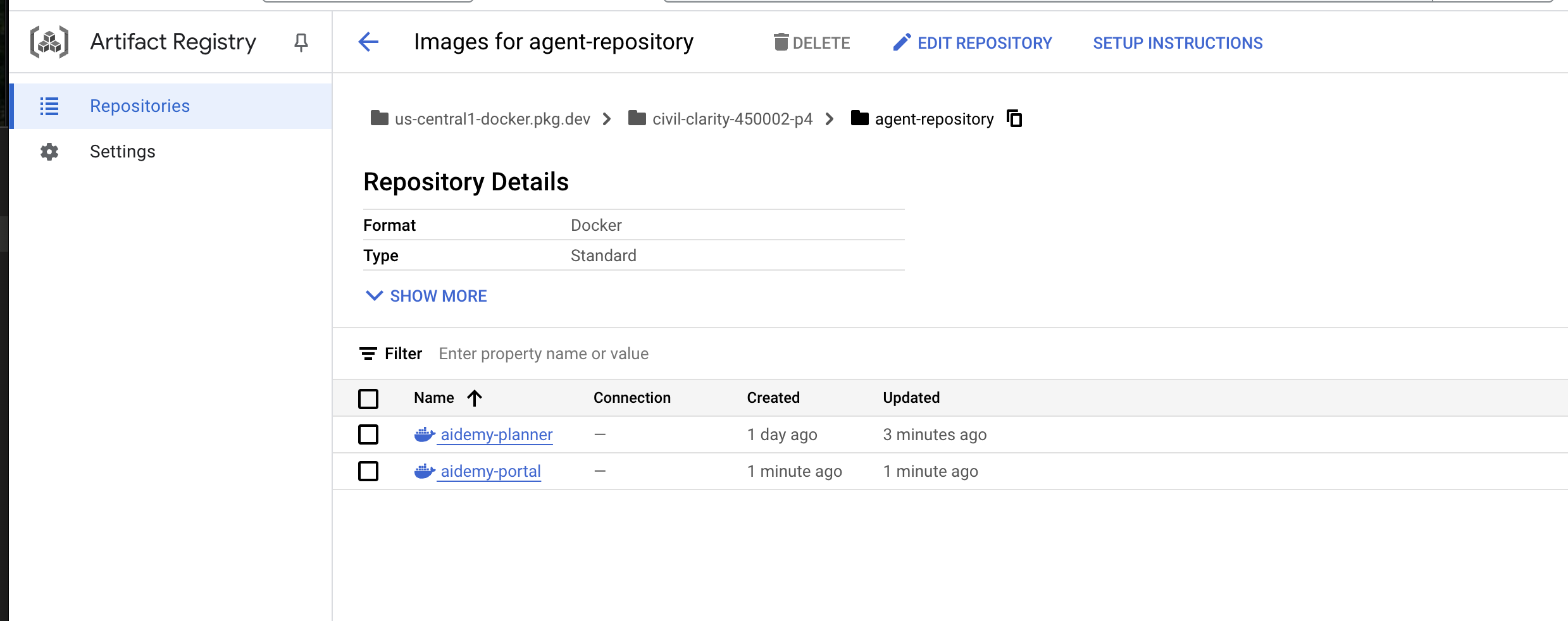

- Artifact Registry: Stores Docker images for the agents.

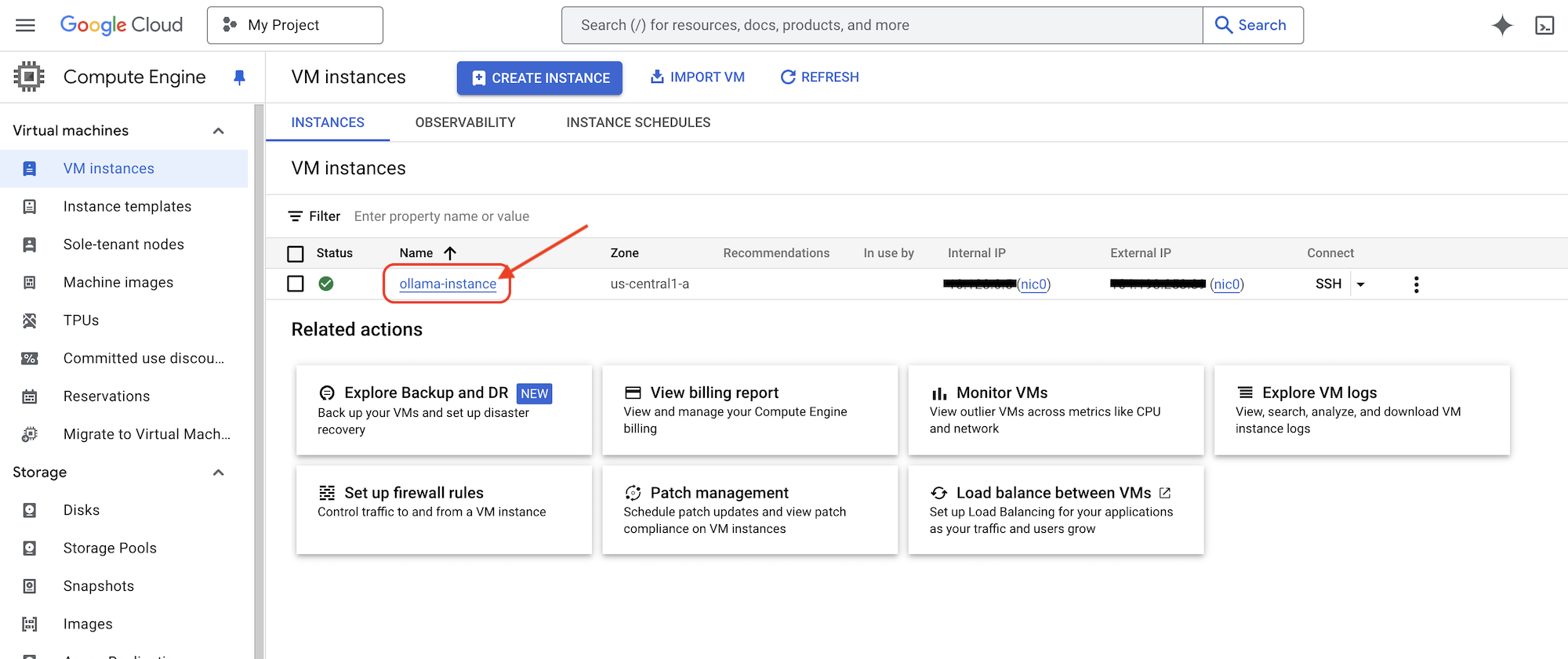

- Compute Engine: Hosts a self-hosted LLM instead of relying on vendor solutions.

LLMs: The "brains" of the system:

- Google's Gemini models: (Gemini x Pro, Gemini x Flash, Gemini x Flash Thinking) Used for lesson planning, content generation, dynamic HTML creation, quiz explanation and combining the assignments.

- DeepSeek: Used for the specialized task of generating self-study assignments.

LangChain & LangGraph: Frameworks for LLM Application Development

- Facilitates the creation of complex multi-agent workflows.

- Enables the intelligent orchestration of tools (API calls, database queries, web searches).

- Implements event-driven architecture for system scalability and flexibility.

In essence, our architecture combines the power of LLMs with structured data and event-driven communication, all running on Google Cloud. This lets us build a scalable, reliable, and effective teaching assistant.
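To make the event-driven idea concrete before any cloud services are involved, here is a minimal in-memory sketch. The `EventBus` class and the `plan` topic name are hypothetical stand-ins, not part of the Aidemy code. Unlike Pub/Sub, this sketch delivers messages synchronously in the same process.

```python
from collections import defaultdict
from typing import Callable, Dict, List


class EventBus:
    """Hypothetical in-memory stand-in for a topic-based message bus."""

    def __init__(self) -> None:
        self._subscribers: Dict[str, List[Callable[[dict], None]]] = defaultdict(list)

    def subscribe(self, topic: str, handler: Callable[[dict], None]) -> None:
        # Register a handler that will be called for every message on the topic.
        self._subscribers[topic].append(handler)

    def publish(self, topic: str, message: dict) -> int:
        # Deliver the message to every subscriber and report how many received it.
        handlers = list(self._subscribers.get(topic, []))
        for handler in handlers:
            handler(message)
        return len(handlers)


bus = EventBus()
received = []

# Two independent "agents" react to the same event without knowing about each other.
bus.subscribe("plan", lambda msg: received.append(("quiz", msg["teaching_plan"])))
bus.subscribe("plan", lambda msg: received.append(("recap", msg["teaching_plan"])))

delivered = bus.publish("plan", {"teaching_plan": "Year 6 fractions"})
print(delivered, received)
```

The publisher only knows the topic name, so new consumers can be added later without touching the code that produces the event.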

3. Before you begin

In the Google Cloud Console, on the project selector page, select or create a Google Cloud project. Make sure that billing is enabled for your Cloud project. Learn how to check if billing is enabled on a project.

Enable Gemini Code Assist in Cloud Shell IDE

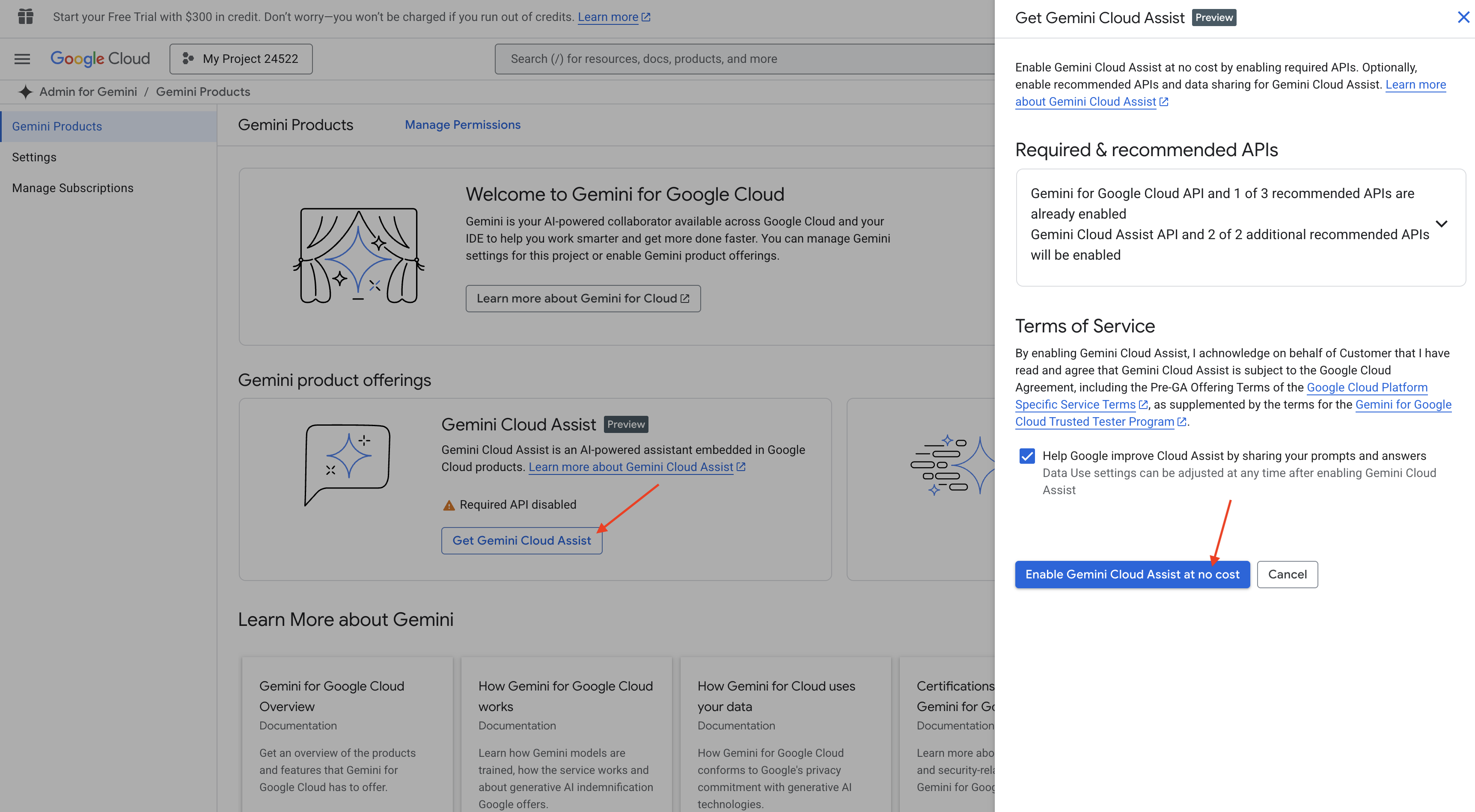

👉 In the Google Cloud console, go to Gemini Code Assist Tools and enable Gemini Code Assist at no cost by agreeing to the terms and conditions.

Ignore the permission setup and leave this page.

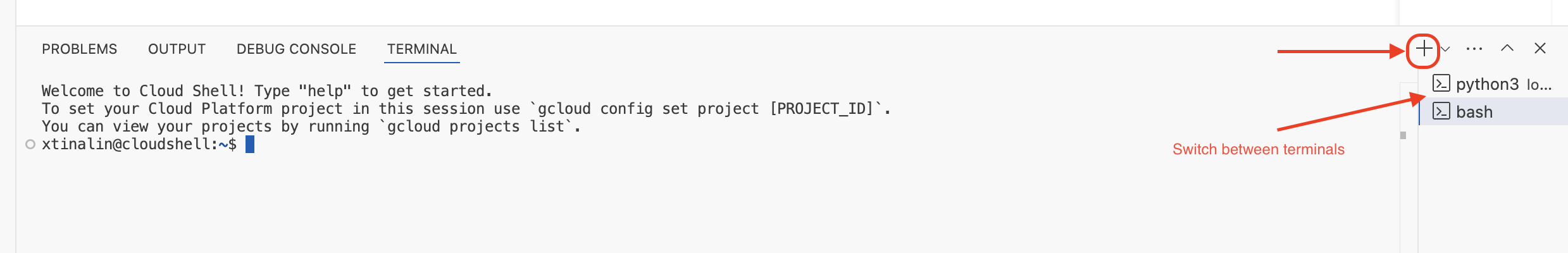

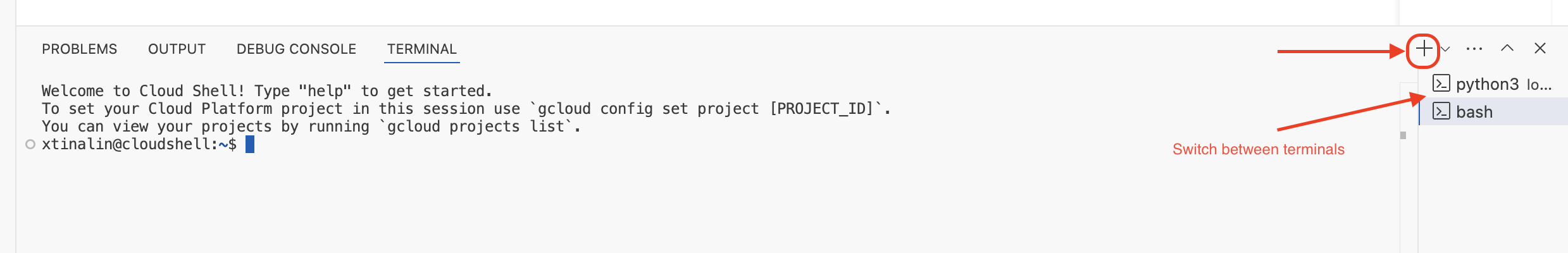

Work on Cloud Shell Editor

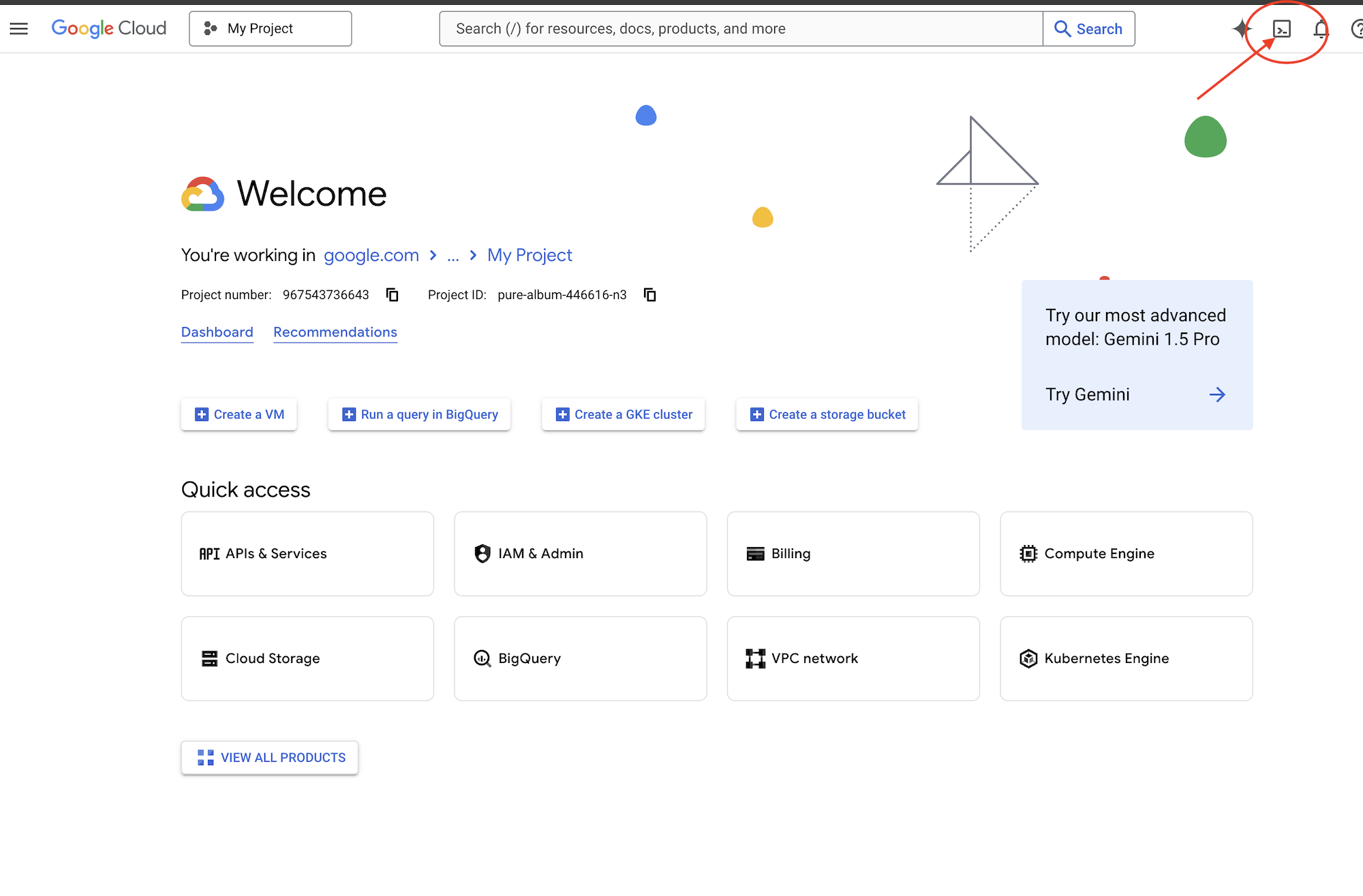

👉Click Activate Cloud Shell at the top of the Google Cloud console (it's the terminal-shaped icon), then click the "Open Editor" button (it looks like an open folder with a pencil). This opens the Cloud Shell Code Editor in the window. You'll see a file explorer on the left side.

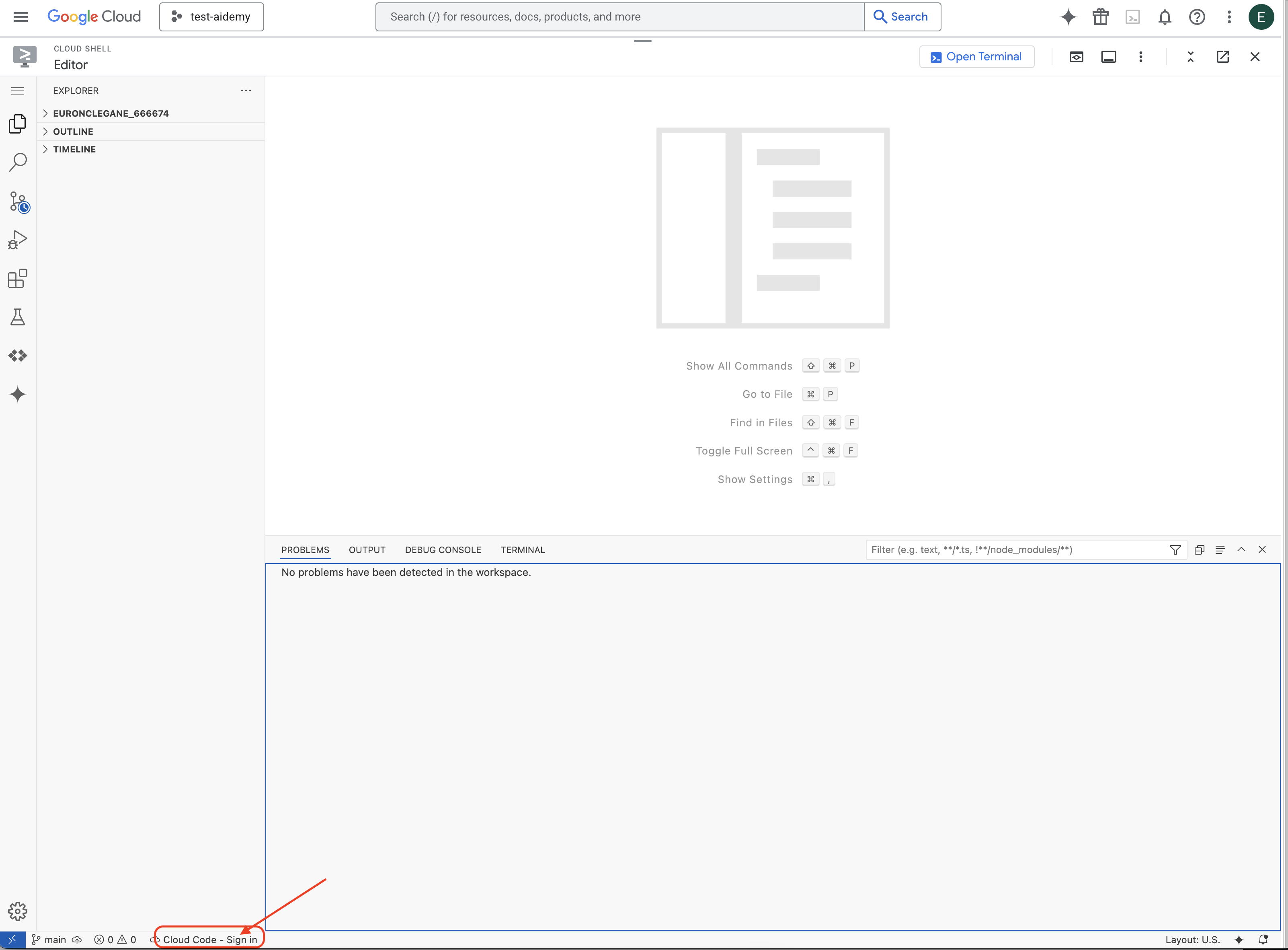

👉Click on the Cloud Code Sign-in button in the bottom status bar as shown. Authorize the plugin as instructed. If you see "Cloud Code - no project" in the status bar, select it, choose "Select a Google Cloud Project" in the drop-down, and then select your Google Cloud project from the list.

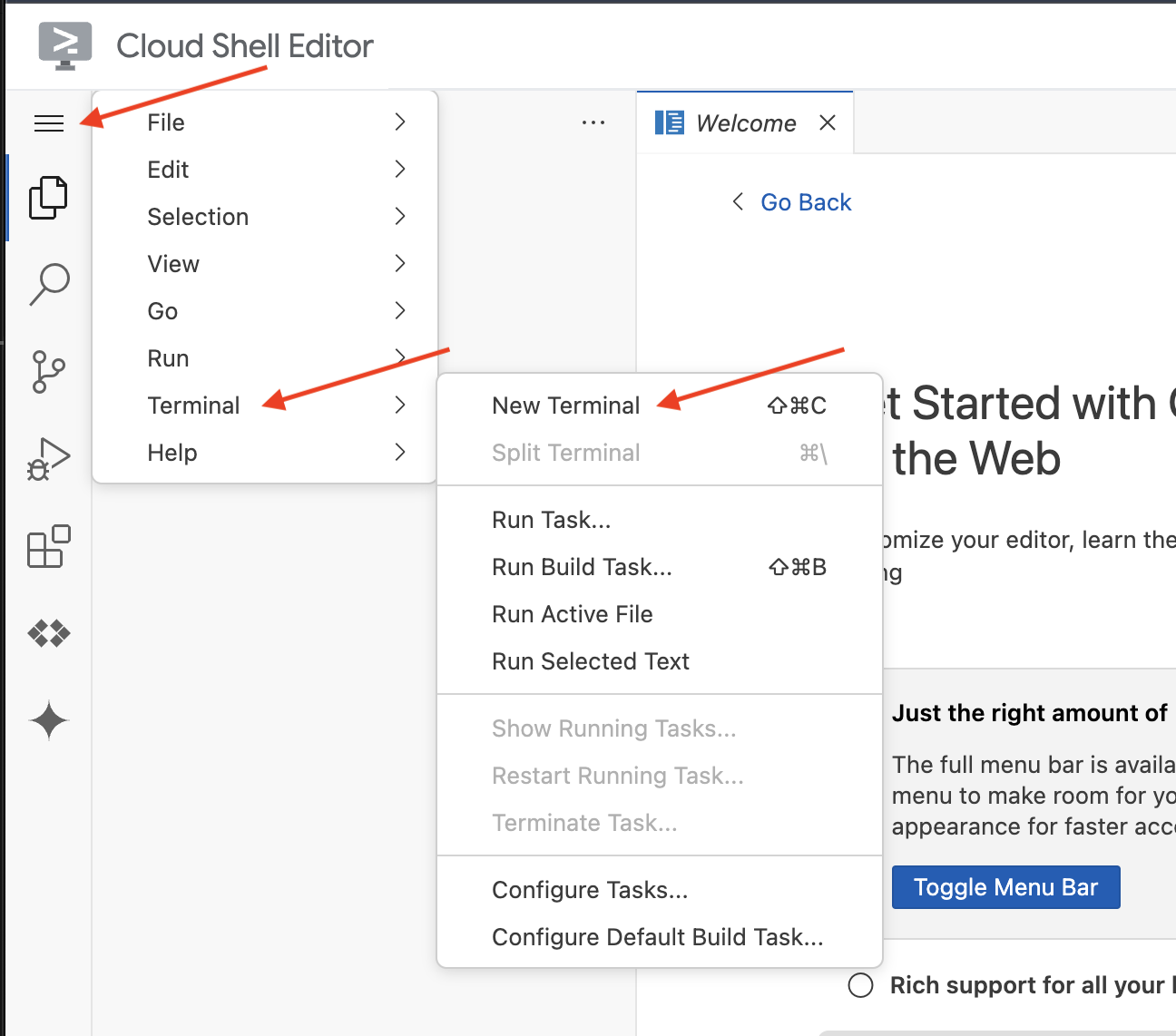

👉Open the terminal in the cloud IDE.

👉In the terminal, verify that you're already authenticated and that the project is set to your project ID using the following command:

gcloud auth list

👉Then run the following commands, making sure to replace <YOUR_PROJECT_ID> with your project ID:

echo <YOUR_PROJECT_ID> > ~/project_id.txt

gcloud config set project $(cat ~/project_id.txt)

👉Run the following command to enable the necessary Google Cloud APIs:

gcloud services enable compute.googleapis.com \
  storage.googleapis.com \
  run.googleapis.com \
  artifactregistry.googleapis.com \
  aiplatform.googleapis.com \
  eventarc.googleapis.com \
  sqladmin.googleapis.com \
  secretmanager.googleapis.com \
  cloudbuild.googleapis.com \
  cloudresourcemanager.googleapis.com \
  cloudfunctions.googleapis.com \
  cloudaicompanion.googleapis.com

This may take a couple of minutes.

Setting up permissions

👉Set up the service account permissions. In the terminal, run:

gcloud config set project $(cat ~/project_id.txt)

export PROJECT_ID=$(gcloud config get project)

export SERVICE_ACCOUNT_NAME=$(gcloud compute project-info describe --format="value(defaultServiceAccount)")

echo "Here's your SERVICE_ACCOUNT_NAME $SERVICE_ACCOUNT_NAME"

👉 Grant permissions. In the terminal, run:

#Cloud Storage (Read/Write):
gcloud projects add-iam-policy-binding $PROJECT_ID \
  --member="serviceAccount:$SERVICE_ACCOUNT_NAME" \
  --role="roles/storage.objectAdmin"

#Pub/Sub (Publish/Receive):
gcloud projects add-iam-policy-binding $PROJECT_ID \
  --member="serviceAccount:$SERVICE_ACCOUNT_NAME" \
  --role="roles/pubsub.publisher"

gcloud projects add-iam-policy-binding $PROJECT_ID \
  --member="serviceAccount:$SERVICE_ACCOUNT_NAME" \
  --role="roles/pubsub.subscriber"

#Cloud SQL (Read/Write):
gcloud projects add-iam-policy-binding $PROJECT_ID \
  --member="serviceAccount:$SERVICE_ACCOUNT_NAME" \
  --role="roles/cloudsql.editor"

#Eventarc (Receive Events):
gcloud projects add-iam-policy-binding $PROJECT_ID \
  --member="serviceAccount:$SERVICE_ACCOUNT_NAME" \
  --role="roles/iam.serviceAccountTokenCreator"

gcloud projects add-iam-policy-binding $PROJECT_ID \
  --member="serviceAccount:$SERVICE_ACCOUNT_NAME" \
  --role="roles/eventarc.eventReceiver"

#Vertex AI (User):
gcloud projects add-iam-policy-binding $PROJECT_ID \
  --member="serviceAccount:$SERVICE_ACCOUNT_NAME" \
  --role="roles/aiplatform.user"

#Secret Manager (Read):
gcloud projects add-iam-policy-binding $PROJECT_ID \
  --member="serviceAccount:$SERVICE_ACCOUNT_NAME" \
  --role="roles/secretmanager.secretAccessor"
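If you would rather script the eight bindings than paste them one by one, the repetition can be expressed as a loop. This is only a sketch: the helper name `build_binding_commands` is made up for illustration, and the project ID and service account shown are placeholders. It prints the commands instead of running them.

```python
import shlex
from typing import List

# Roles copied from the gcloud commands above.
ROLES = [
    "roles/storage.objectAdmin",
    "roles/pubsub.publisher",
    "roles/pubsub.subscriber",
    "roles/cloudsql.editor",
    "roles/iam.serviceAccountTokenCreator",
    "roles/eventarc.eventReceiver",
    "roles/aiplatform.user",
    "roles/secretmanager.secretAccessor",
]


def build_binding_commands(project_id: str, service_account: str) -> List[List[str]]:
    """Build one add-iam-policy-binding command (as an argument list) per role."""
    return [
        [
            "gcloud", "projects", "add-iam-policy-binding", project_id,
            f"--member=serviceAccount:{service_account}",
            f"--role={role}",
        ]
        for role in ROLES
    ]


# Placeholder values; substitute your own project ID and service account.
commands = build_binding_commands(
    "my-project", "123456-compute@developer.gserviceaccount.com"
)
for cmd in commands:
    print(shlex.join(cmd))
```

To actually apply the bindings, each argument list could be passed to `subprocess.run(cmd, check=True)` from an authenticated shell.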

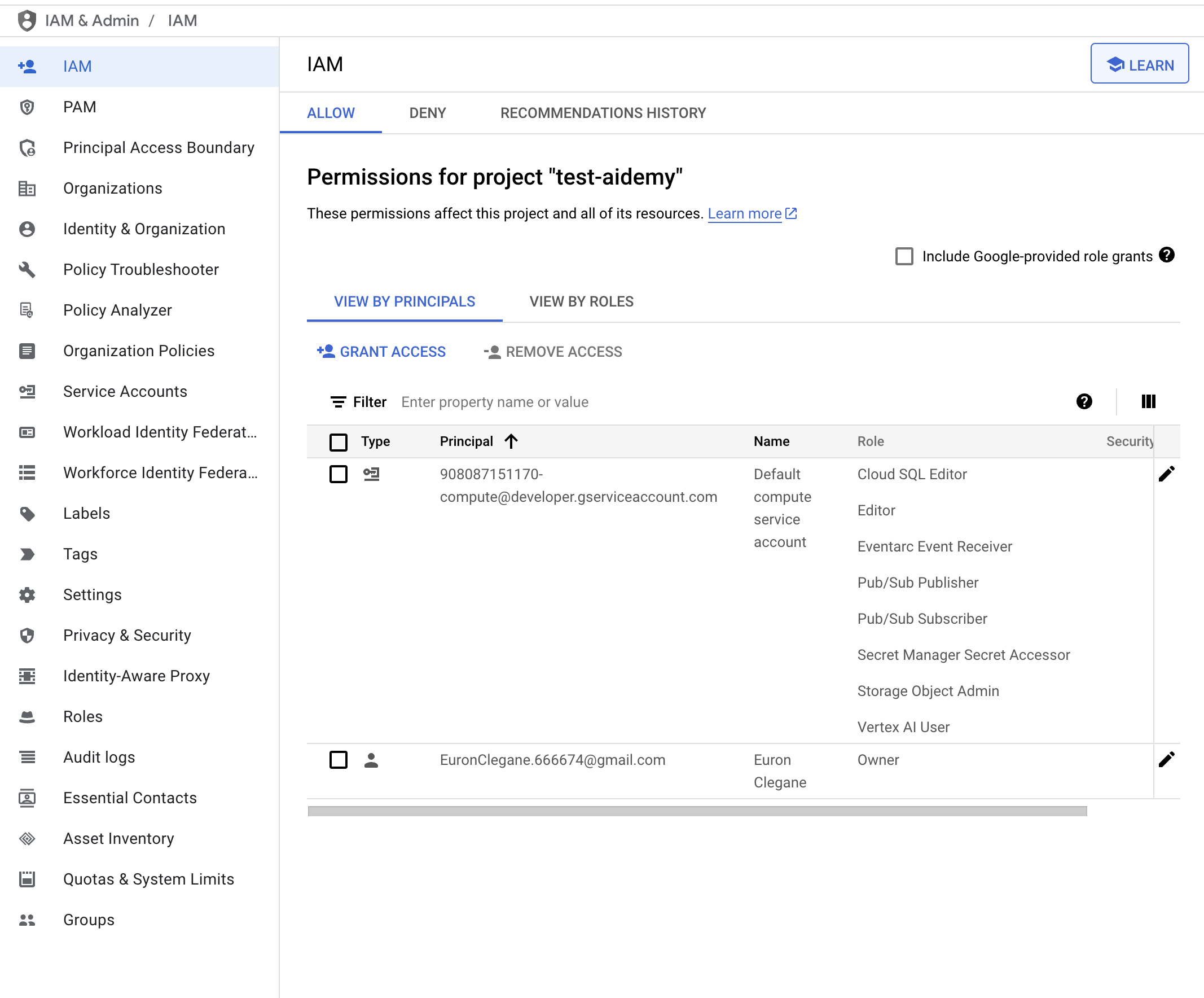

👉Validate the result in your IAM console.

👉Run the following commands in the terminal to create a Cloud SQL instance named aidemy. We'll need this later, but since this process can take some time, we'll do it now.

gcloud sql instances create aidemy \
  --database-version=POSTGRES_14 \
  --cpu=2 \
  --memory=4GB \
  --region=us-central1 \
  --root-password=1234qwer \
  --storage-size=10GB \
  --storage-auto-increase

4. Building the first agent

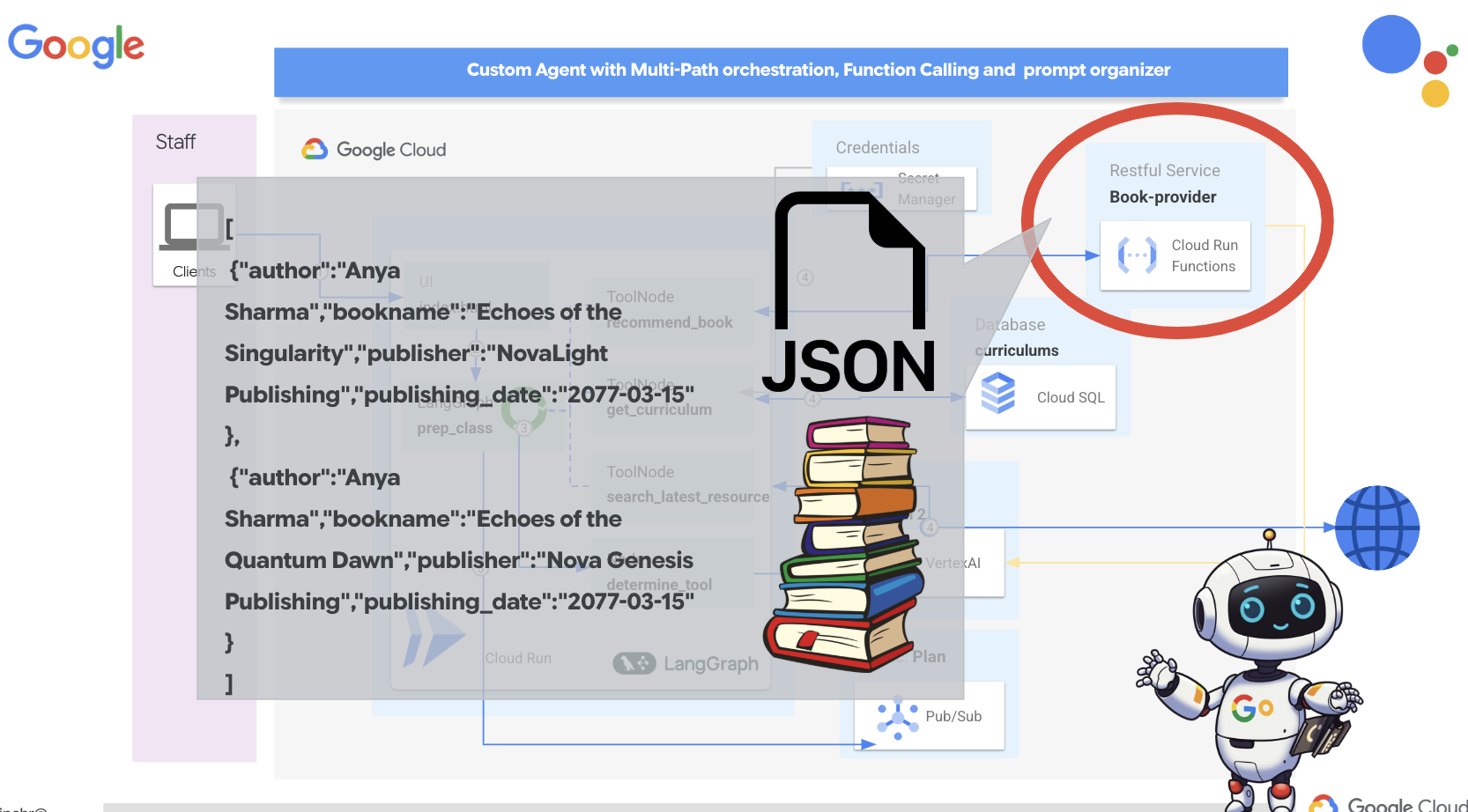

Before we dive into complex multi-agent systems, we need to establish a fundamental building block: a single, functional agent. In this section, we'll take our first steps by creating a simple "book provider" agent. The book provider agent takes a category as input and uses a Gemini LLM to generate a JSON representation of a book within that category. It then serves these book recommendations as a REST API endpoint.

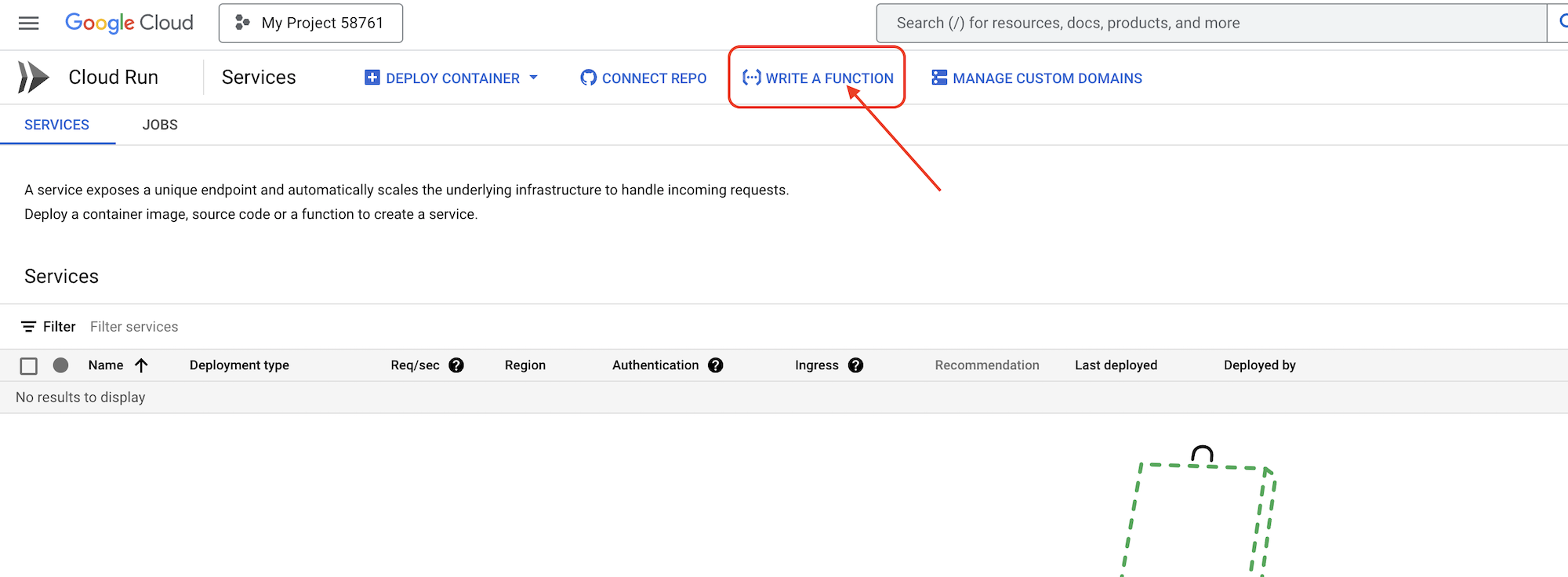

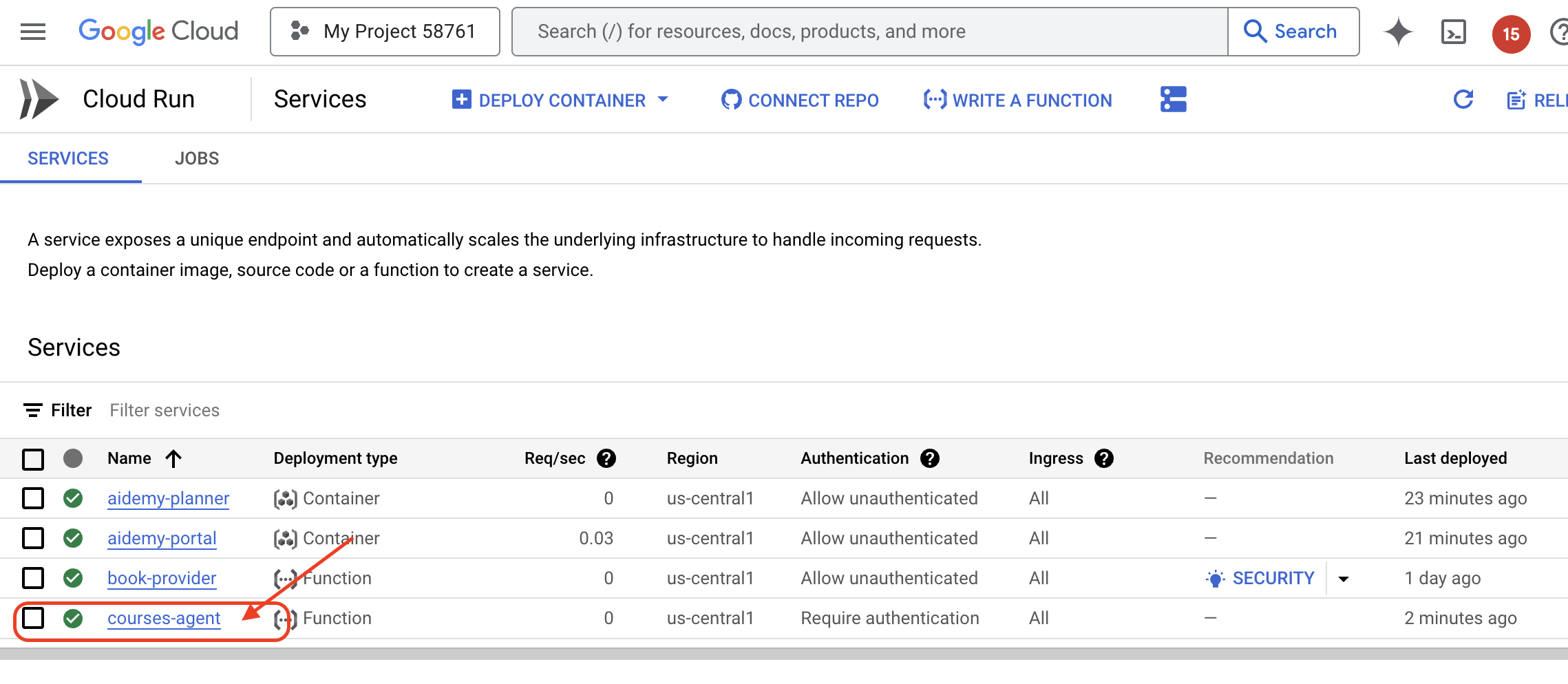

👉In another browser tab, open the Google Cloud Console in your web browser. In the navigation menu (☰), go to "Cloud Run". Click the "+ ... WRITE A FUNCTION" button.

👉Next, we'll configure the basic settings of the Cloud Run Function:

- Service name: book-provider

- Region: us-central1

- Runtime: Python 3.12

- Authentication: set Allow unauthenticated invocations to Enabled.

👉Leave other settings as default and click Create. This will take you to the source code editor.

You'll see pre-populated main.py and requirements.txt files.

The main.py will contain the business logic of the function, requirements.txt will contain the packages needed.

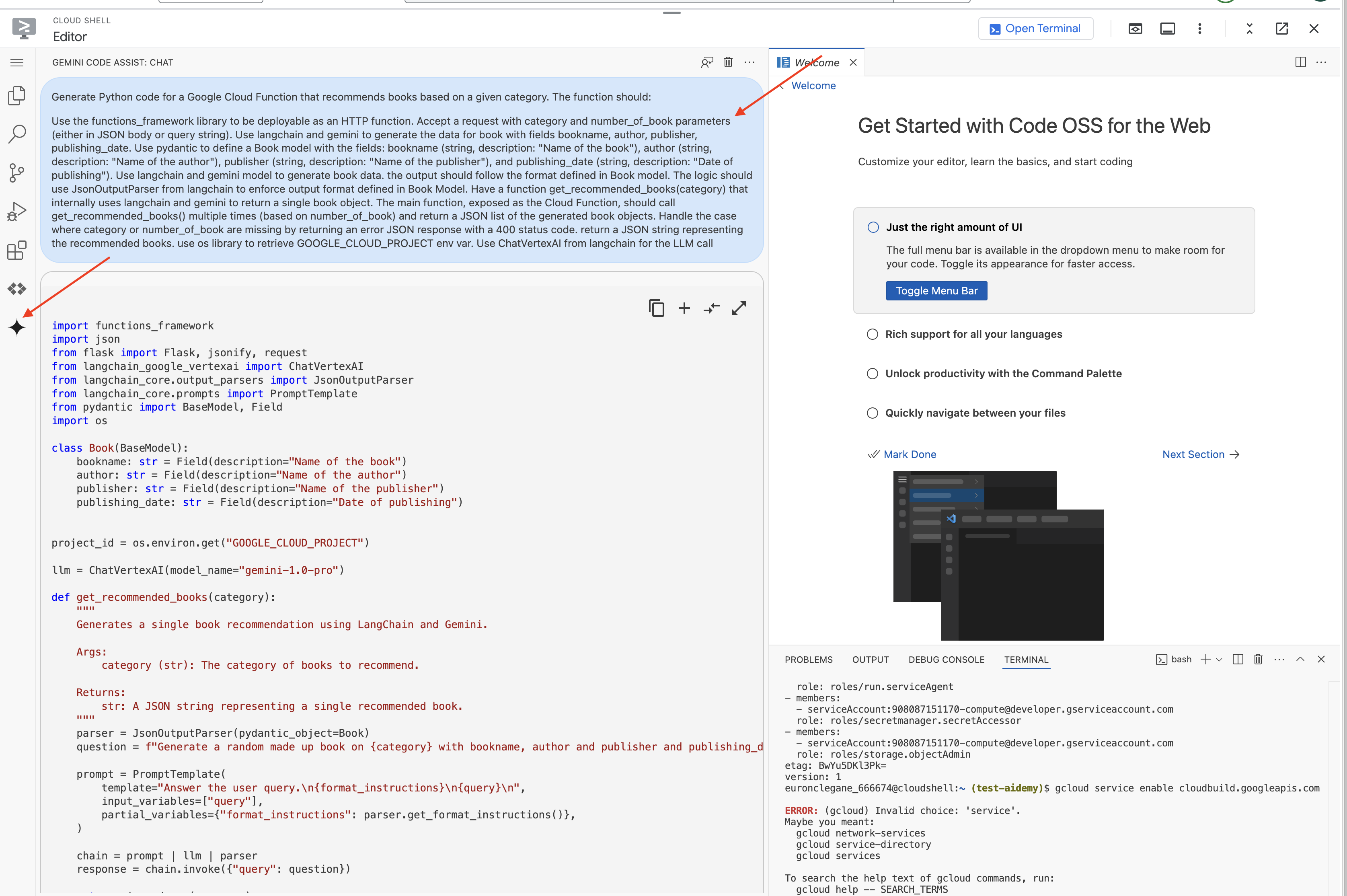

👉Now we are ready to write some code! But before diving in, let's see if Gemini Code Assist can give us a head start. Return to the Cloud Shell Editor and click on the Gemini Code Assist icon located at the top. This should open the Gemini Code Assist chat.

👉 Paste the following request into the prompt box:

Use the functions_framework library to be deployable as an HTTP function.

Accept a request with category and number_of_book parameters (either in JSON body or query string).

Use langchain and gemini to generate the data for book with fields bookname, author, publisher, publishing_date.

Use pydantic to define a Book model with the fields: bookname (string, description: "Name of the book"), author (string, description: "Name of the author"), publisher (string, description: "Name of the publisher"), and publishing_date (string, description: "Date of publishing").

Use langchain and gemini model to generate book data. the output should follow the format defined in Book model.

The logic should use JsonOutputParser from langchain to enforce output format defined in Book Model.

Have a function get_recommended_books(category) that internally uses langchain and gemini to return a single book object.

The main function, exposed as the Cloud Function, should call get_recommended_books() multiple times (based on number_of_book) and return a JSON list of the generated book objects.

Handle the case where category or number_of_book are missing by returning an error JSON response with a 400 status code.

return a JSON string representing the recommended books. use os library to retrieve GOOGLE_CLOUD_PROJECT env var. Use ChatVertexAI from langchain for the LLM call

Code Assist will then generate a potential solution, providing both the source code and a requirements.txt dependency file. (DO NOT USE THIS CODE)

We encourage you to compare the code generated by Code Assist with the tested, correct solution provided below. This allows you to evaluate the tool's effectiveness and identify any potential discrepancies. While LLMs should never be blindly trusted, Code Assist can be a great tool for rapid prototyping and generating initial code structures, and it can give you a good head start.

Since this is a workshop, we'll proceed with the verified code provided below. However, feel free to experiment with the Code Assist-generated code in your own time to gain a deeper understanding of its capabilities and limitations.

👉Return to the Cloud Run Function's source code editor (in the other browser tab). Carefully replace the existing content of main.py with the code provided below:

import functions_framework
import json
from flask import Flask, jsonify, request
from langchain_google_vertexai import ChatVertexAI
from langchain_core.output_parsers import JsonOutputParser
from langchain_core.prompts import PromptTemplate
from pydantic import BaseModel, Field
import os

class Book(BaseModel):
    bookname: str = Field(description="Name of the book")
    author: str = Field(description="Name of the author")
    publisher: str = Field(description="Name of the publisher")
    publishing_date: str = Field(description="Date of publishing")

project_id = os.environ.get("GOOGLE_CLOUD_PROJECT")

llm = ChatVertexAI(model_name="gemini-2.0-flash-lite-001")

def get_recommended_books(category):
    """
    A simple book recommendation function.

    Args:
        category (str): category

    Returns:
        str: A JSON string representing the recommended books.
    """
    parser = JsonOutputParser(pydantic_object=Book)
    question = f"Generate a random made up book on {category} with bookname, author and publisher and publishing_date"

    prompt = PromptTemplate(
        template="Answer the user query.\n{format_instructions}\n{query}\n",
        input_variables=["query"],
        partial_variables={"format_instructions": parser.get_format_instructions()},
    )

    chain = prompt | llm | parser
    response = chain.invoke({"query": question})

    return json.dumps(response)

@functions_framework.http
def recommended(request):
    request_json = request.get_json(silent=True) # Get JSON data
    if request_json and 'category' in request_json and 'number_of_book' in request_json:
        category = request_json['category']
        number_of_book = int(request_json['number_of_book'])
    elif request.args and 'category' in request.args and 'number_of_book' in request.args:
        category = request.args.get('category')
        number_of_book = int(request.args.get('number_of_book'))
    else:
        return jsonify({'error': 'Missing category or number_of_book parameters'}), 400

    recommendations_list = []
    for i in range(number_of_book):
        book_dict = json.loads(get_recommended_books(category))
        print(f"book_dict=======>{book_dict}")
        recommendations_list.append(book_dict)

    return jsonify(recommendations_list)
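The parameter handling in `recommended` (JSON body first, then query string, otherwise an error) can be tried out locally without deploying anything. The sketch below pulls that logic into a pure function. `parse_book_request` is a hypothetical helper, not part of the workshop code, and the extra integer checks are an assumption added for illustration. In the handler above, a non-numeric `number_of_book` would make `int()` raise a `ValueError` rather than return a 400.

```python
from typing import Mapping, Optional, Tuple


def parse_book_request(
    body: Optional[Mapping], args: Mapping
) -> Tuple[Optional[dict], Optional[str]]:
    """Return (params, error). Checks the JSON body first, then the query string."""
    source = None
    for candidate in (body, args):
        if candidate and "category" in candidate and "number_of_book" in candidate:
            source = candidate
            break
    if source is None:
        return None, "Missing category or number_of_book parameters"

    # Assumption for this sketch: reject values that are not positive integers.
    try:
        count = int(source["number_of_book"])
    except (TypeError, ValueError):
        return None, "number_of_book must be an integer"
    if count < 1:
        return None, "number_of_book must be at least 1"

    return {"category": str(source["category"]), "number_of_book": count}, None


print(parse_book_request({"category": "Science Fiction", "number_of_book": 2}, {}))
print(parse_book_request(None, {"category": "Math", "number_of_book": "3"}))
print(parse_book_request(None, {}))
```

A handler could call this function and return the error message with a 400 status whenever the second element of the tuple is not `None`.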

👉Replace the contents of requirements.txt with the following:

functions-framework==3.*

google-genai==1.0.0

flask==3.1.0

jsonify==0.5

langchain_google_vertexai==2.0.13

langchain_core==0.3.34

pydantic==2.10.5

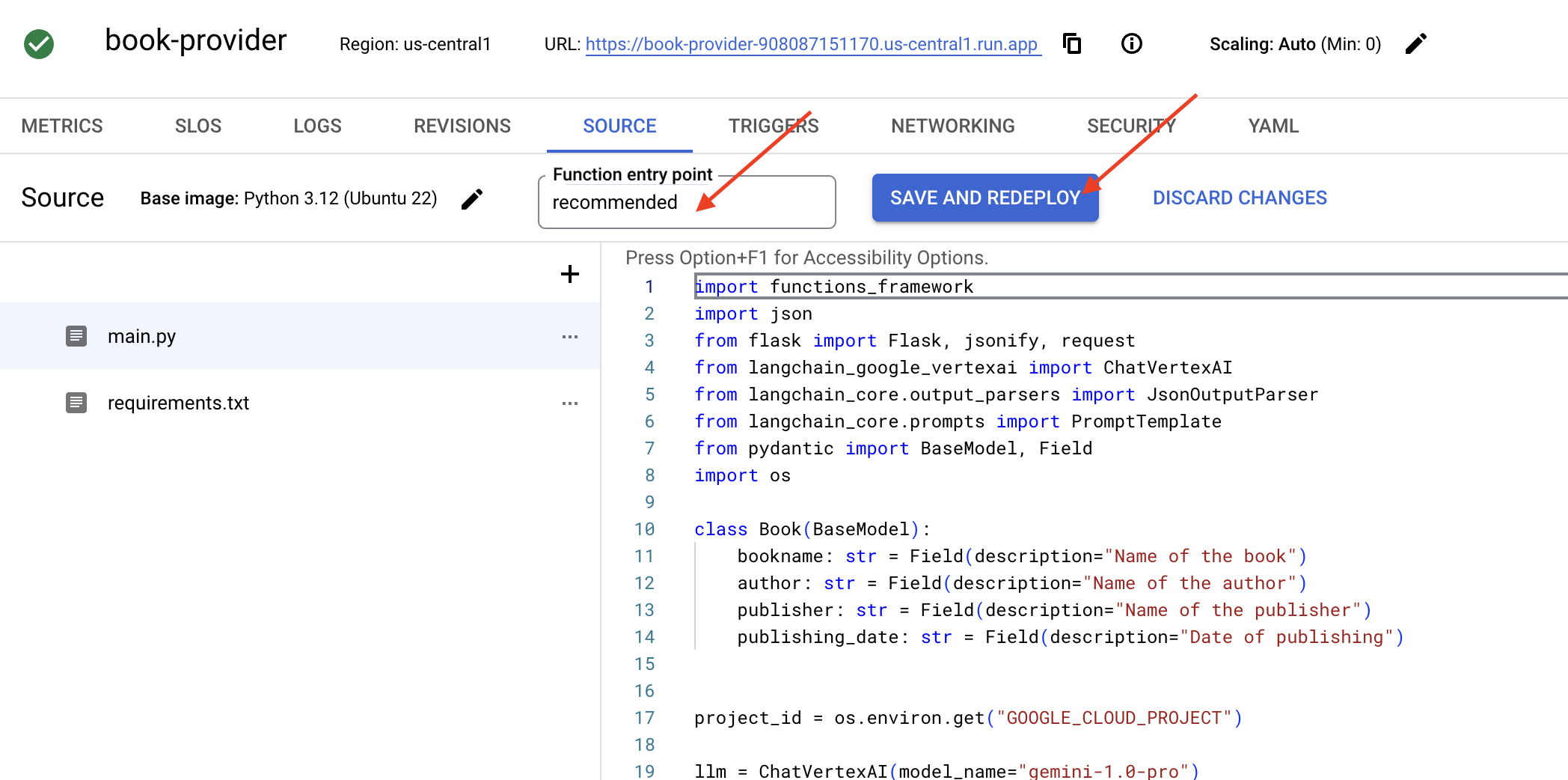

👉Set the Function entry point to recommended.

👉Click SAVE AND DEPLOY (or SAVE AND REDEPLOY) to deploy the Function. Wait for the deployment process to complete. The Cloud Console will display the status. This may take several minutes.

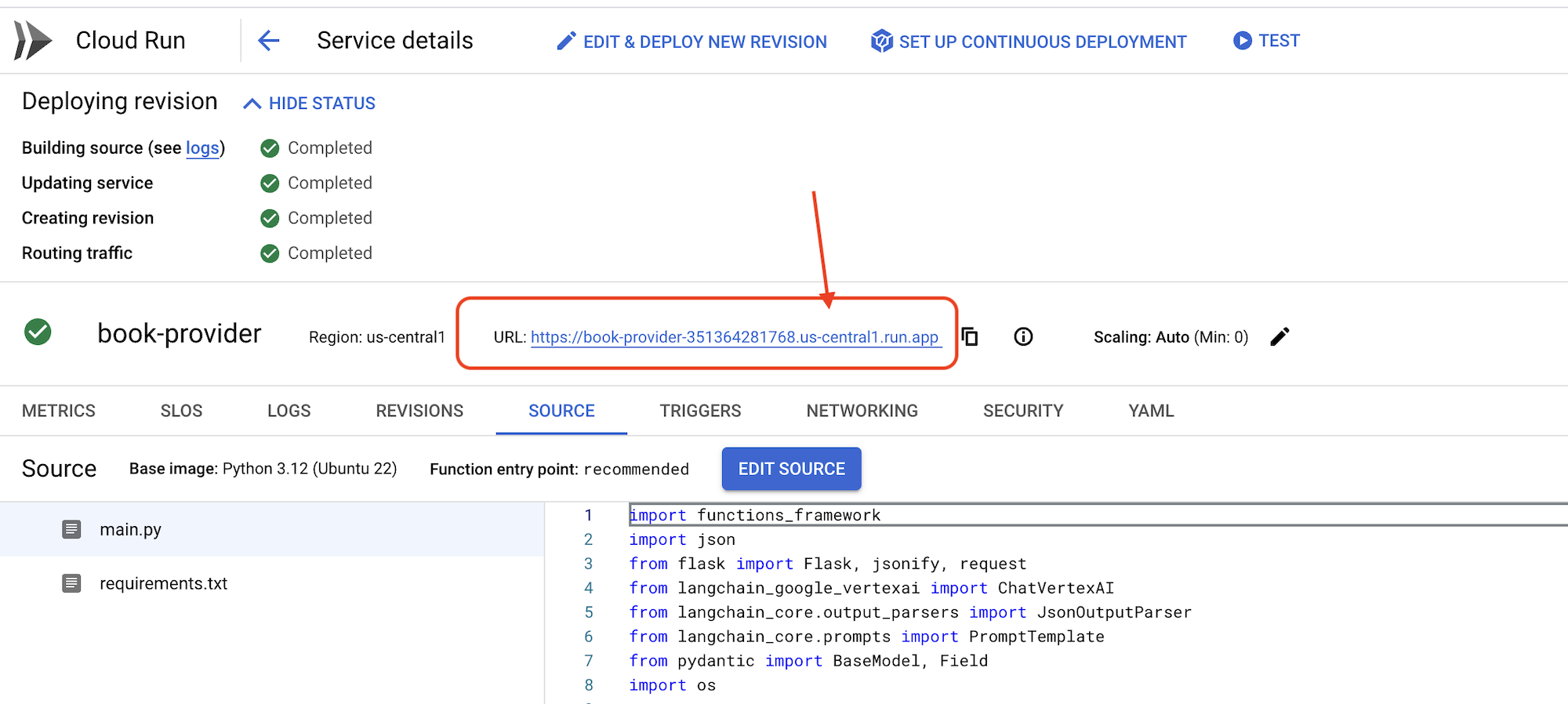

👉Once deployed, go back to the Cloud Shell Editor and run the following in the terminal:

gcloud config set project $(cat ~/project_id.txt)

export PROJECT_ID=$(gcloud config get project)

export BOOK_PROVIDER_URL=$(gcloud run services describe book-provider --region=us-central1 --project=$PROJECT_ID --format="value(status.url)")

curl -X POST -H "Content-Type: application/json" -d '{"category": "Science Fiction", "number_of_book": 2}' $BOOK_PROVIDER_URL

It should show some book data in JSON format.

[

{"author":"Anya Sharma","bookname":"Echoes of the Singularity","publisher":"NovaLight Publishing","publishing_date":"2077-03-15"},

{"author":"Anya Sharma","bookname":"Echoes of the Quantum Dawn","publisher":"Nova Genesis Publishing","publishing_date":"2077-03-15"}

]

Congratulations! You have successfully deployed a Cloud Run Function. This is one of the services we will be integrating when developing our Aidemy agent.
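Because the book data is generated by an LLM, it can be worth checking that each returned object really carries the four expected fields before another component relies on it. The following is a small sketch using only the standard library. The helper name `missing_fields` is an assumption for illustration, and the sample payload is the example output shown above.

```python
import json

# Field names taken from the Book model in main.py.
REQUIRED_FIELDS = ("bookname", "author", "publisher", "publishing_date")


def missing_fields(book: dict) -> list:
    """List the required fields that are absent or not strings."""
    return [name for name in REQUIRED_FIELDS if not isinstance(book.get(name), str)]


sample = """[
 {"author":"Anya Sharma","bookname":"Echoes of the Singularity","publisher":"NovaLight Publishing","publishing_date":"2077-03-15"},
 {"author":"Anya Sharma","bookname":"Echoes of the Quantum Dawn","publisher":"Nova Genesis Publishing","publishing_date":"2077-03-15"}
]"""

books = json.loads(sample)
# Map each book's index to its missing fields, keeping only books with problems.
problems = {i: missing_fields(b) for i, b in enumerate(books) if missing_fields(b)}
print(problems)
```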

5. Building Tools: Connecting Agents to RESTful Services and Data

Let's go ahead and download the Bootstrap Skeleton Project. Make sure you are in the Cloud Shell Editor, then run the following in the terminal:

git clone https://github.com/weimeilin79/aidemy-bootstrap.git

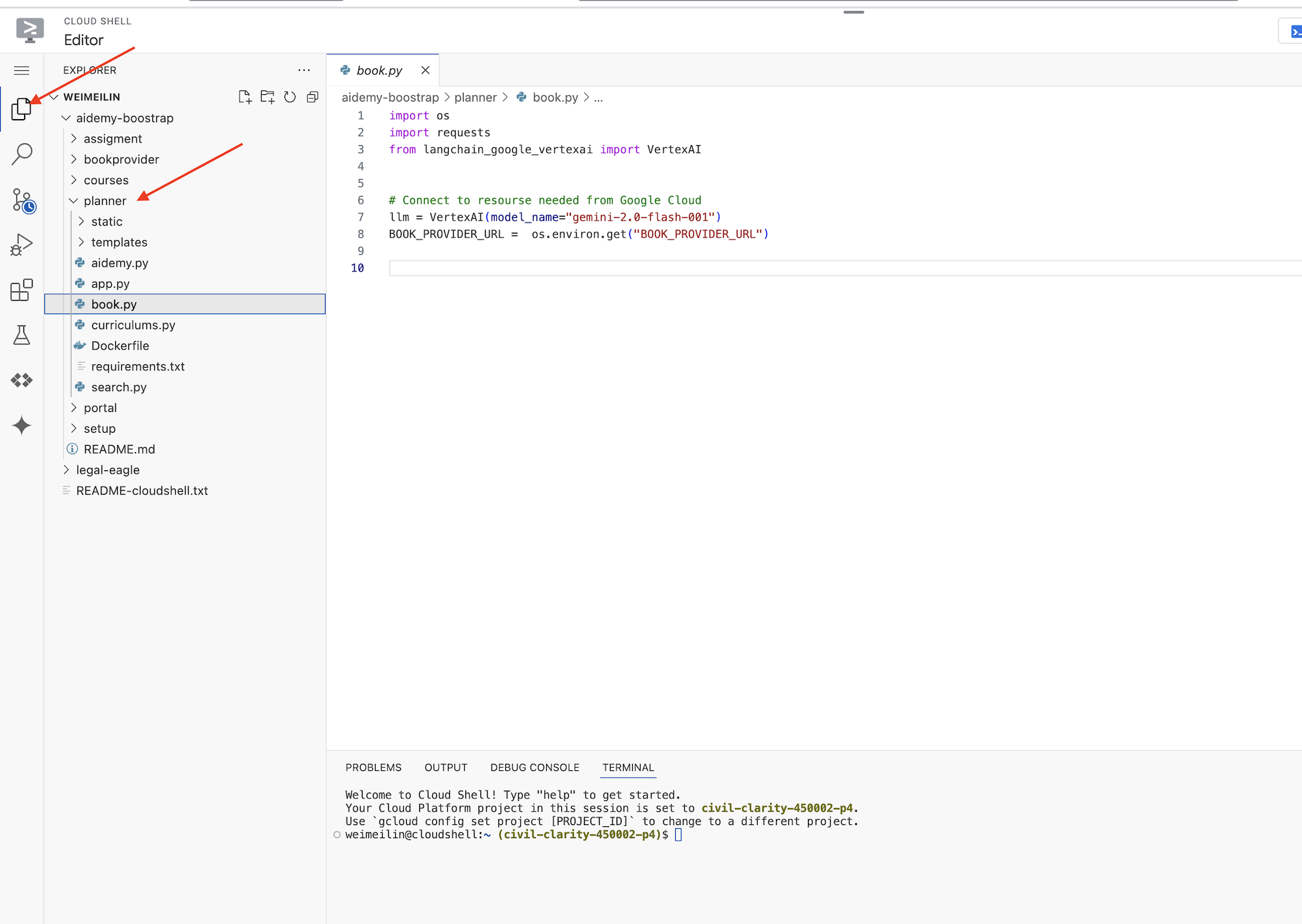

After running this command, a new folder named aidemy-bootstrap will be created in your Cloud Shell environment.

In the Cloud Shell Editor's Explorer pane (usually on the left side), you should now see the aidemy-bootstrap folder that was created when you cloned the Git repository. Open the root folder of your project in the Explorer. You'll find a planner subfolder within it; open that as well.

Let's start building the tools our agents will use to become truly helpful. As you know, LLMs are excellent at reasoning and generating text, but they need access to external resources to perform real-world tasks and provide accurate, up-to-date information. Think of these tools as the agent's "Swiss Army knife," giving it the ability to interact with the world.

When building an agent, it's easy to fall into hard-coding a ton of details. This creates an agent that is not flexible. Instead, by creating and using tools, the agent has access to external logic or systems which gives it the benefits of both the LLM and traditional programming.

In this section, we'll create the foundation for the planner agent, which teachers will use to generate lesson plans. Before the agent starts generating a plan, we want to set boundaries by providing more details on the subject and topic. We'll build three tools:

- RESTful API Call: Interacting with a pre-existing API to retrieve data.

- Database Query: Fetching structured data from a Cloud SQL database.

- Google Search: Accessing real-time information from the web.

Fetching Book Recommendations from an API

First, let's create a tool that retrieves book recommendations from the book-provider API we deployed in the previous section. This demonstrates how an agent can leverage existing services.

In the Cloud Shell Editor, open the aidemy-bootstrap project that you cloned in the previous section.

👉Edit the book.py in the planner folder, and paste the following code at the end of the file:

def recommend_book(query: str):
    """
    Get a list of recommended book from an API endpoint

    Args:
        query: User's request string
    """
    region = get_next_region()
    llm = VertexAI(model_name="gemini-1.5-pro", location=region)

    query = f"""The user is trying to plan an education course, you are the teaching assistant. Help define the category of what the user requested to teach, respond with the category in no more than two words.

    user request: {query}
    """
    print(f"-------->{query}")
    response = llm.invoke(query)
    print(f"CATEGORY RESPONSE------------>: {response}")

    # call this using python and parse the json back to dict
    category = response.strip()

    headers = {"Content-Type": "application/json"}
    data = {"category": category, "number_of_book": 2}

    books = requests.post(BOOK_PROVIDER_URL, headers=headers, json=data)

    return books.text

if __name__ == "__main__":
    print(recommend_book("I'm doing a course for my 5th grade student on Math Geometry, I'll need to recommend few books come up with a teach plan, few quizes and also a homework assignment."))

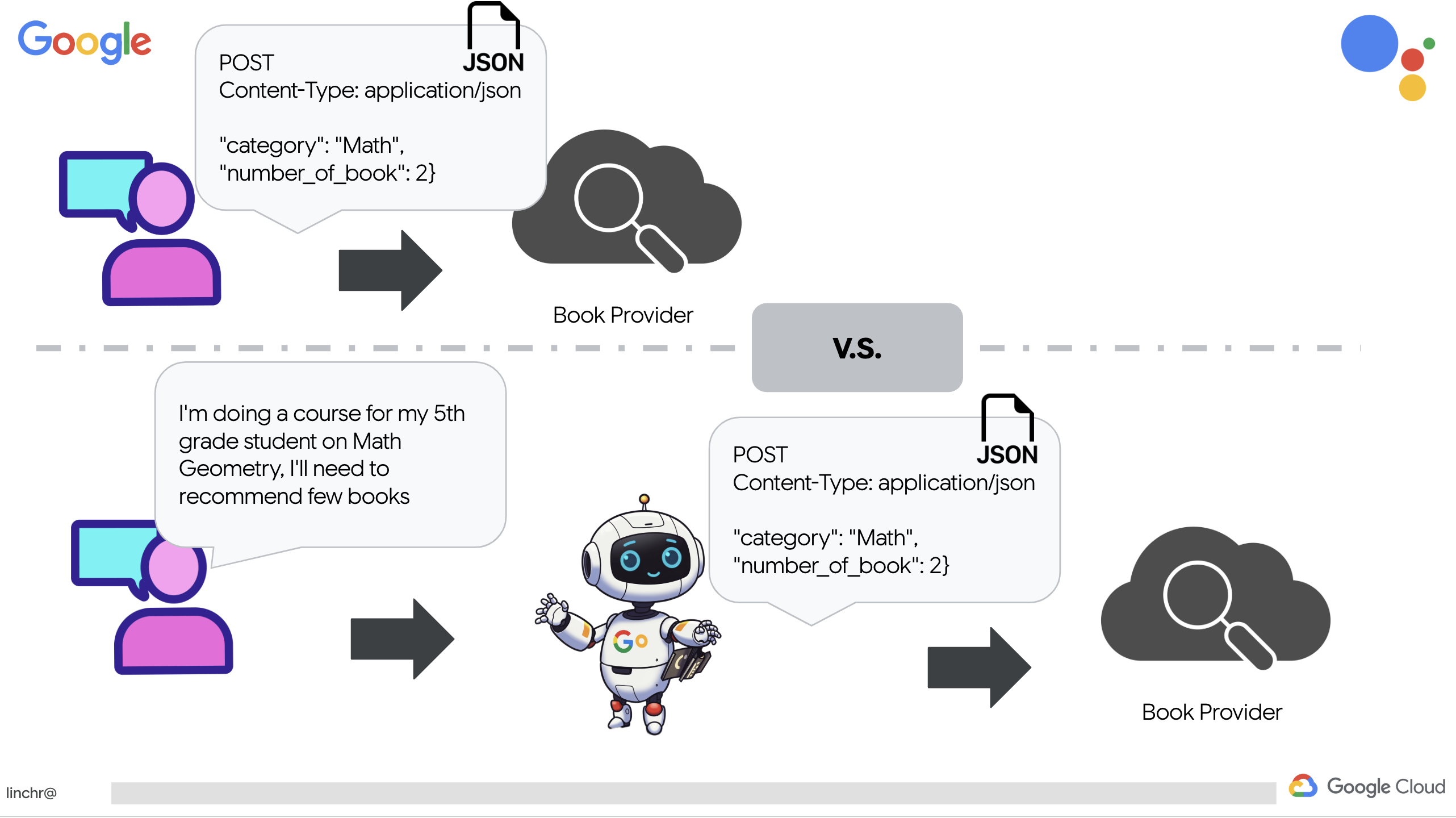

Explanation:

- recommend_book(query: str): This function takes a user's query as input.

- LLM Interaction: It uses the LLM to extract the category from the query. This demonstrates how you can use the LLM to help create parameters for tools.

- API Call: It makes a POST request to the book-provider API, passing the category and the desired number of books.
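The two steps described above (ask the LLM for a category, then pass it to the API) can be exercised offline by injecting stand-ins for both calls. In this sketch, `normalize_category`, `recommend_with`, `fake_llm`, and `fake_post` are all hypothetical names used for illustration. Trimming the LLM answer to two words is an added safeguard, not something the workshop code does.

```python
import json
from typing import Callable


def normalize_category(raw: str, max_words: int = 2) -> str:
    """Trim whitespace and quotes, and keep at most max_words words."""
    words = raw.strip().strip("\"'").split()
    return " ".join(words[:max_words])


def recommend_with(llm: Callable[[str], str], post: Callable[[dict], str], query: str) -> str:
    """Step 1: let the LLM derive a category. Step 2: call the book API with it."""
    prompt = (
        "Define the category of this request in no more than two words.\n"
        f"user request: {query}"
    )
    category = normalize_category(llm(prompt))
    return post({"category": category, "number_of_book": 2})


# Stand-ins so the flow runs without Vertex AI or the deployed service.
def fake_llm(prompt: str) -> str:
    return "  Math Geometry basics\n"


sent = []


def fake_post(payload: dict) -> str:
    sent.append(payload)
    return json.dumps([{"bookname": "Shapes", "author": "A", "publisher": "P", "publishing_date": "2020"}])


result = recommend_with(fake_llm, fake_post, "5th grade geometry course")
print(sent, result)
```

Passing the LLM and HTTP calls in as parameters keeps the tool logic testable, and the real `llm.invoke` and `requests.post` calls can be swapped in later.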

👉To test this new function, set the environment variables. In the terminal, run:

gcloud config set project $(cat ~/project_id.txt)

export PROJECT_ID=$(gcloud config get project)

cd ~/aidemy-bootstrap/planner/

export BOOK_PROVIDER_URL=$(gcloud run services describe book-provider --region=us-central1 --project=$PROJECT_ID --format="value(status.url)")

👉Install the dependencies and run the code to make sure it works. In the terminal, run:

cd ~/aidemy-bootstrap/planner/

python -m venv env

source env/bin/activate

gcloud config set project $(cat ~/project_id.txt)

export PROJECT_ID=$(gcloud config get project)

pip install -r requirements.txt

python book.py

You should see a JSON string containing book recommendations retrieved from the book-provider API. The results are randomly generated. Your books may not be the same, but you should receive two book recommendations in JSON format.

[{"author":"Anya Sharma","bookname":"Echoes of the Singularity","publisher":"NovaLight Publishing","publishing_date":"2077-03-15"},{"author":"Anya Sharma","bookname":"Echoes of the Quantum Dawn","publisher":"Nova Genesis Publishing","publishing_date":"2077-03-15"}]

If you see this, the first tool is working correctly!

Instead of explicitly crafting a RESTful API call with specific parameters, we're using natural language ("I'm doing a course..."). The agent then intelligently extracts the necessary parameters (like the category) using NLP, highlighting how the agent leverages natural language understanding to interact with the API.

👉Remove the following testing code from book.py:

if __name__ == "__main__":
    print(recommend_book("I'm doing a course for my 5th grade student on Math Geometry, I'll need to recommend few books come up with a teach plan, few quizes and also a homework assignment."))

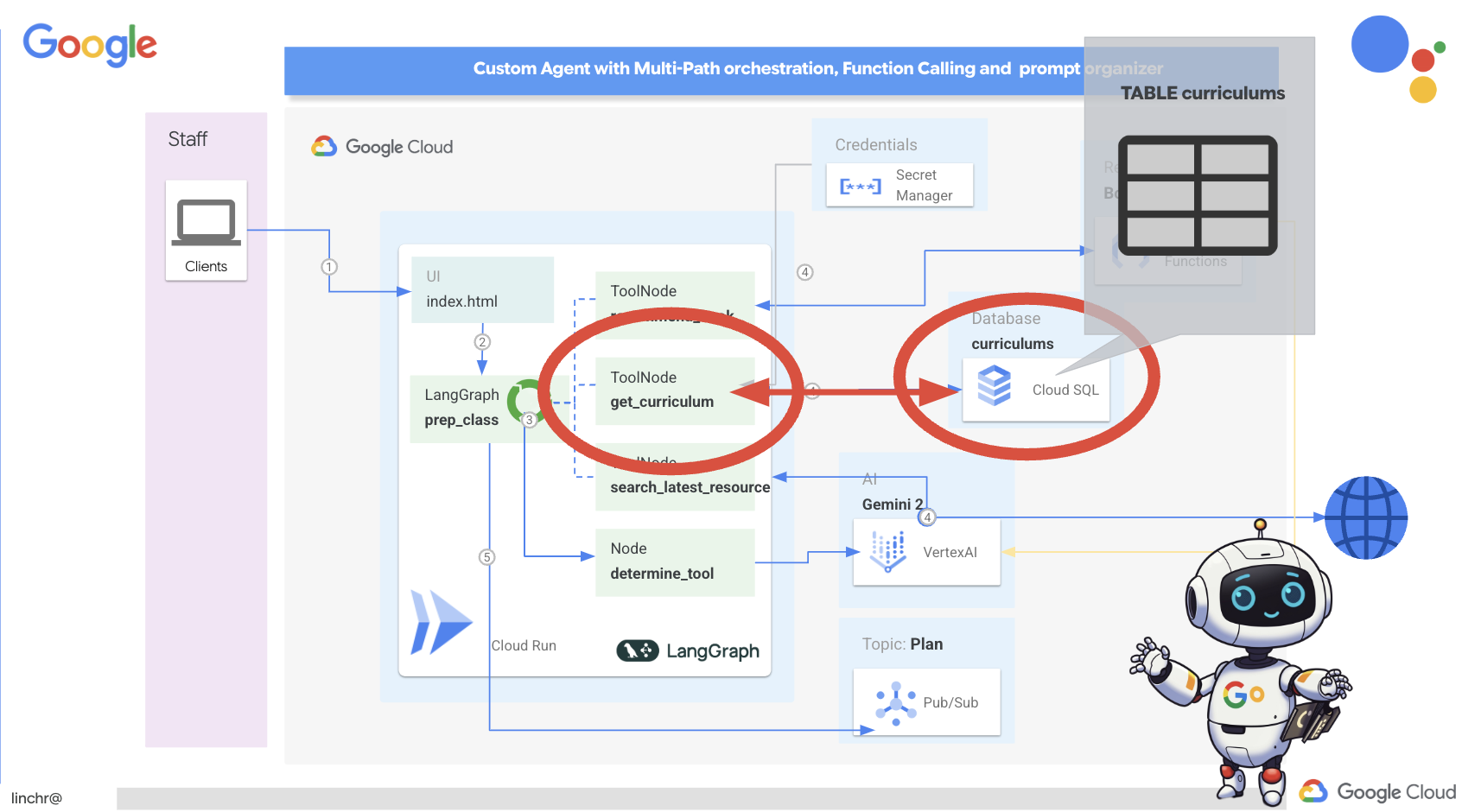

Getting Curriculum Data from a Database

Next, we'll build a tool that fetches structured curriculum data from a Cloud SQL PostgreSQL database. This allows the agent to access a reliable source of information for lesson planning.

Remember the aidemy Cloud SQL instance you created in an earlier step? Here's where it will be used.

👉 In the terminal, run the following command to create a database named aidemy-db in the new instance.

gcloud sql databases create aidemy-db \
  --instance=aidemy

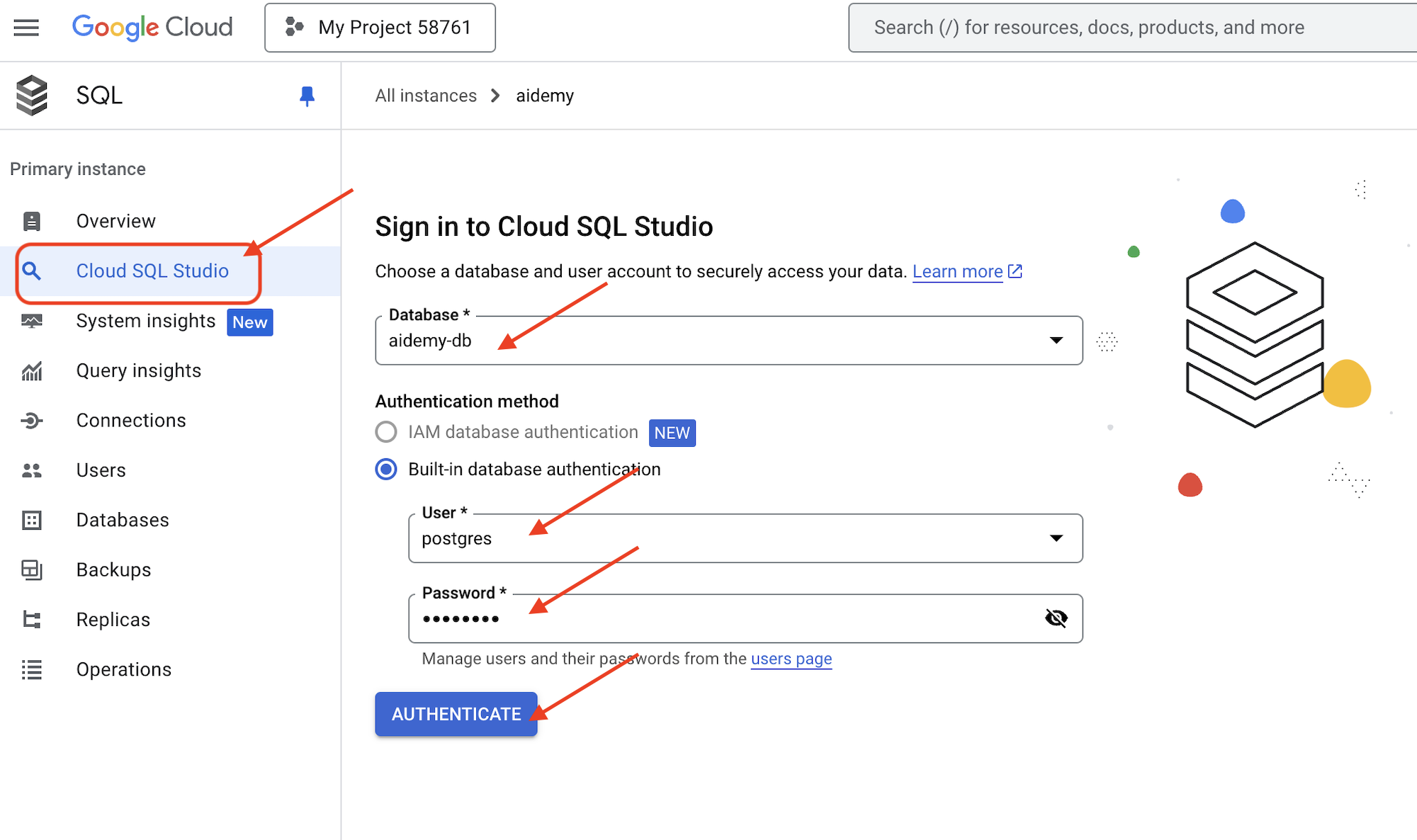

Let's verify the instance in Cloud SQL in the Google Cloud Console. You should see a Cloud SQL instance named aidemy listed.

👉 Click on the instance name to view its details.

👉 In the Cloud SQL instance details page, click on Cloud SQL Studio in the left-hand navigation menu. This will open a new tab.

Select aidemy-db as the database. Enter postgres as the user and 1234qwer as the password.

Click Authenticate

👉In the SQL Studio query editor, navigate to tab Editor 1, paste the following SQL code:

CREATE TABLE curriculums (

id SERIAL PRIMARY KEY,

year INT,

subject VARCHAR(255),

description TEXT

);

-- Inserting detailed curriculum data for different school years and subjects

INSERT INTO curriculums (year, subject, description) VALUES

-- Year 5

(5, 'Mathematics', 'Introduction to fractions, decimals, and percentages, along with foundational geometry and problem-solving techniques.'),

(5, 'English', 'Developing reading comprehension, creative writing, and basic grammar, with a focus on storytelling and poetry.'),

(5, 'Science', 'Exploring basic physics, chemistry, and biology concepts, including forces, materials, and ecosystems.'),

(5, 'Computer Science', 'Basic coding concepts using block-based programming and an introduction to digital literacy.'),

-- Year 6

(6, 'Mathematics', 'Expanding on fractions, ratios, algebraic thinking, and problem-solving strategies.'),

(6, 'English', 'Introduction to persuasive writing, character analysis, and deeper comprehension of literary texts.'),

(6, 'Science', 'Forces and motion, the human body, and introductory chemical reactions with hands-on experiments.'),

(6, 'Computer Science', 'Introduction to algorithms, logical reasoning, and basic text-based programming (Python, Scratch).'),

-- Year 7

(7, 'Mathematics', 'Algebraic expressions, geometry, and introduction to statistics and probability.'),

(7, 'English', 'Analytical reading of classic and modern literature, essay writing, and advanced grammar skills.'),

(7, 'Science', 'Introduction to cells and organisms, chemical reactions, and energy transfer in physics.'),

(7, 'Computer Science', 'Building on programming skills with Python, introduction to web development, and cyber safety.');

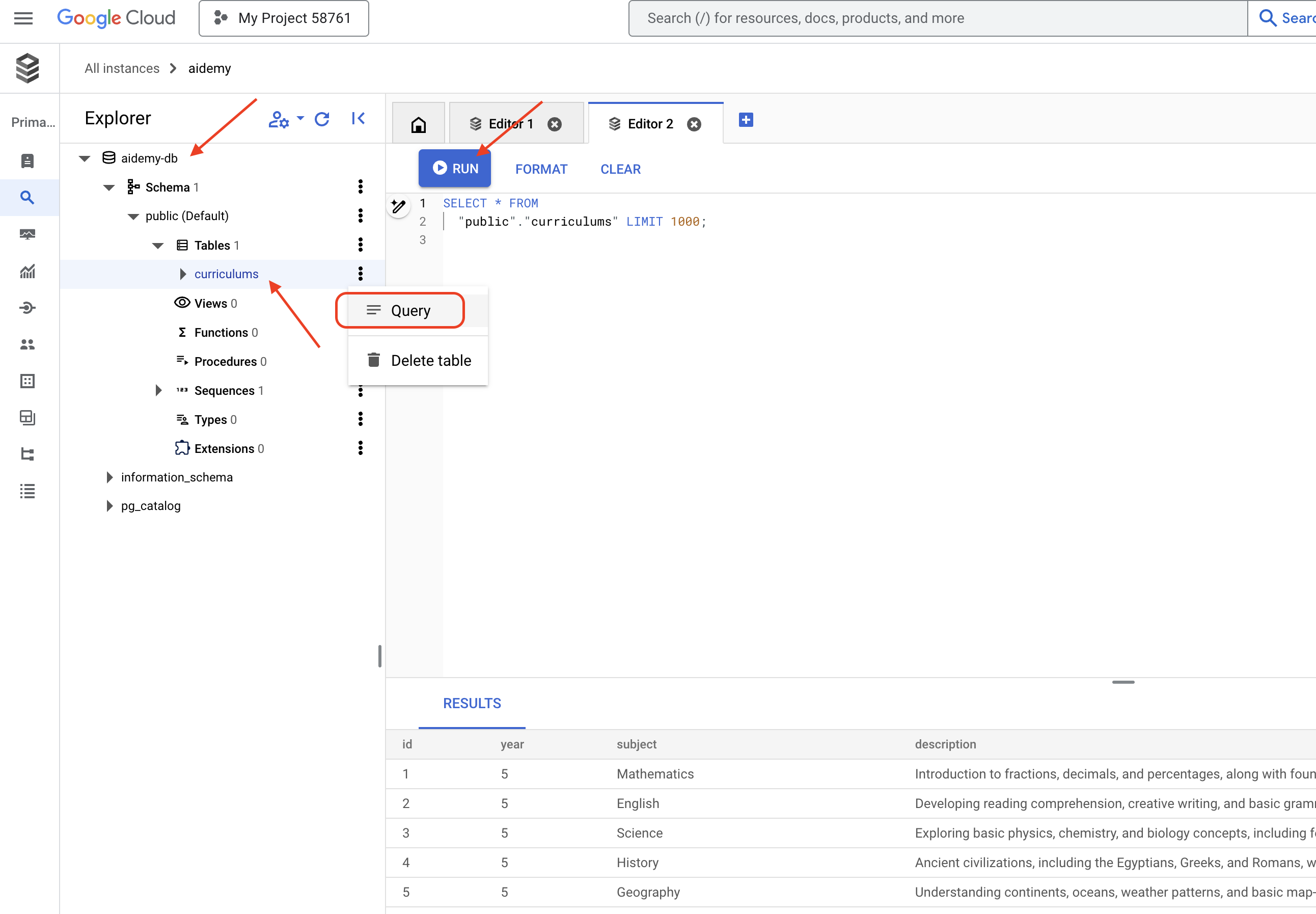

This SQL code creates a table named curriculums and inserts some sample data.

👉 Click Run to execute the SQL code. You should see a confirmation message indicating that the statements were executed successfully.

👉 Expand the explorer, find the newly created table curriculums, and click query. It should open a new editor tab with SQL generated for you:

SELECT * FROM

"public"."curriculums" LIMIT 1000;

👉Click Run.

The results table should display the rows of data you inserted in the previous step, confirming that the table and data were created correctly.

Now that you have successfully created a database with populated sample curriculum data, we'll build a tool to retrieve it.

👉In the Cloud Shell Editor, edit the file curriculums.py in the planner folder of aidemy-bootstrap and paste the following code at the end of the file:

def connect_with_connector() -> sqlalchemy.engine.base.Engine:
    db_user = os.environ["DB_USER"]
    db_pass = os.environ["DB_PASS"]
    db_name = os.environ["DB_NAME"]

    print(f"--------------------------->db_user: {db_user!r}")
    # Do not print db_pass: credentials should stay out of logs.
    print(f"--------------------------->db_name: {db_name!r}")

    connector = Connector()

    pool = sqlalchemy.create_engine(
        "postgresql+pg8000://",
        creator=lambda: connector.connect(
            instance_connection_name,
            "pg8000",
            user=db_user,
            password=db_pass,
            db=db_name,
        ),
        pool_size=2,
        max_overflow=2,
        pool_timeout=30, # 30 seconds
        pool_recycle=1800, # 30 minutes
    )
    return pool

def get_curriculum(year: int, subject: str):
    """
    Get school curriculum

    Args:
        subject: User's request subject string
        year: User's request year int
    """
    try:
        stmt = sqlalchemy.text(
            "SELECT description FROM curriculums WHERE year = :year AND subject = :subject"
        )

        with db.connect() as conn:
            result = conn.execute(stmt, parameters={"year": year, "subject": subject})
            row = result.fetchone()
            if row:
                return row[0]
            else:
                return None

    except Exception as e:
        print(e)
        return None

db = connect_with_connector()

Explanation:

- Environment Variables: The code retrieves database credentials and connection information from environment variables (more on this below).

- connect_with_connector(): This function uses the Cloud SQL Connector to establish a secure connection to the database.

- get_curriculum(year: int, subject: str): This function takes the year and subject as input, queries the curriculums table, and returns the corresponding curriculum description.
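If you want to see the query pattern in isolation before connecting to Cloud SQL, the same parameterized lookup can be run against an in-memory SQLite database. This is a sketch under that substitution: SQLite stands in for PostgreSQL, `get_curriculum_local` is a hypothetical name, and only two of the sample rows are loaded.

```python
import sqlite3

# In-memory SQLite database standing in for the Cloud SQL instance.
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE curriculums (id INTEGER PRIMARY KEY, year INT, subject TEXT, description TEXT)"
)
conn.executemany(
    "INSERT INTO curriculums (year, subject, description) VALUES (?, ?, ?)",
    [
        (5, "Mathematics", "Introduction to fractions, decimals, and percentages, along with foundational geometry and problem-solving techniques."),
        (6, "Mathematics", "Expanding on fractions, ratios, algebraic thinking, and problem-solving strategies."),
    ],
)


def get_curriculum_local(year: int, subject: str):
    """Return the description for a year and subject, or None when there is no match."""
    # Named parameters keep user-supplied values out of the SQL string itself.
    row = conn.execute(
        "SELECT description FROM curriculums WHERE year = :year AND subject = :subject",
        {"year": year, "subject": subject},
    ).fetchone()
    return row[0] if row else None


print(get_curriculum_local(6, "Mathematics"))
print(get_curriculum_local(9, "Mathematics"))
```

Binding `:year` and `:subject` as parameters matters here because the values eventually come from an LLM-driven agent, and they should never be concatenated into the SQL text.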

👉Before we can run the code, we must set some environment variables. In the terminal, run:

gcloud config set project $(cat ~/project_id.txt)

export PROJECT_ID=$(gcloud config get project)

export INSTANCE_NAME="aidemy"

export REGION="us-central1"

export DB_USER="postgres"

export DB_PASS="1234qwer"

export DB_NAME="aidemy-db"

👉To test, add the following code to the end of curriculums.py:

if __name__ == "__main__":
    print(get_curriculum(6, "Mathematics"))

👉Run the code:

cd ~/aidemy-bootstrap/planner/

source env/bin/activate

python curriculums.py

You should see the curriculum description for 6th-grade Mathematics printed to the console.

Expanding on fractions, ratios, algebraic thinking, and problem-solving strategies.

If you see the curriculum description, the database tool is working correctly! Go ahead and stop the script by pressing Ctrl+C if it is still running.

👉Remove the following testing code from curriculums.py:

if __name__ == "__main__":
    print(get_curriculum(6, "Mathematics"))

👉Exit the virtual environment. In the terminal, run:

deactivate

6. Building Tools: Access real-time information from the web

Finally, we'll build a tool that uses the Gemini 2 and Google Search integration to access real-time information from the web. This helps the agent stay up-to-date and provide relevant results.

Gemini 2's integration with the Google Search API enhances agent capabilities by providing more accurate and contextually relevant search results. This allows agents to access up-to-date information and ground their responses in real-world data, minimizing hallucinations. The improved API integration also facilitates more natural language queries, enabling agents to formulate complex and nuanced search requests.

This function takes a search query, curriculum, subject, and year as input and uses the Gemini API and the Google Search tool to retrieve relevant information from the internet. If you look closely, it's using the Google Gen AI SDK to do function calling without using any other framework.

👉Edit search.py in the planner folder of aidemy-bootstrap and paste the following code at the end of the file:

model_id = "gemini-2.0-flash-001"

google_search_tool = Tool(
    google_search=GoogleSearch()
)

def search_latest_resource(search_text: str, curriculum: str, subject: str, year: int):
    """
    Get latest information from the internet

    Args:
        search_text: User's request category string
        curriculum: Curriculum detail string used as context
        subject: "User's request subject" string
        year: "User's request year" integer
    """
    search_text = "%s in the context of year %d and subject %s with following curriculum detail %s " % (search_text, year, subject, curriculum)
    region = get_next_region()
    client = genai.Client(vertexai=True, project=PROJECT_ID, location=region)
    print(f"search_latest_resource text-----> {search_text}")
    response = client.models.generate_content(
        model=model_id,
        contents=search_text,
        config=GenerateContentConfig(
            tools=[google_search_tool],
            response_modalities=["TEXT"],
        )
    )
    print(f"search_latest_resource response-----> {response}")
    return response

if __name__ == "__main__":
    response = search_latest_resource("What are the syllabus for Year 6 Mathematics?", "Expanding on fractions, ratios, algebraic thinking, and problem-solving strategies.", "Mathematics", 6)
    for each in response.candidates[0].content.parts:
        print(each.text)

Explanation:

- Defining Tool - google_search_tool: Wraps the GoogleSearch object within a Tool.

- search_latest_resource(search_text: str, curriculum: str, subject: str, year: int): This function takes a search query, curriculum, subject, and year as input and uses the Gemini API to perform a Google search.

- GenerateContentConfig: Declares that the model has access to the GoogleSearch tool.

The Gemini model internally analyzes the search_text and determines whether it can answer the question directly or if it needs to use the GoogleSearch tool. This is a critical step that happens within the LLM's reasoning process. The model has been trained to recognize situations where external tools are necessary. If the model decides to use the GoogleSearch tool, the search is carried out as part of the same generate_content call, so your code never invokes the Google Search API itself. This part is hidden from the user in the code.

The Gemini model then integrates the search results into its response. It can use the information to answer the user's question, generate a summary, or perform some other task.
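Because the answer comes back as a list of parts, it can be handy to gather the text in one place. Here is a minimal sketch of that idea, assuming the response exposes candidates[0].content.parts with a text attribute on each part, as the test code above does. The collect_text helper and the SimpleNamespace stand-in objects are hypothetical; they only mimic that shape and do not call the Gemini API.

```python
from types import SimpleNamespace


def collect_text(response) -> str:
    """Join the text of every part in the first candidate (hypothetical helper)."""
    if not response.candidates:
        return ""
    parts = response.candidates[0].content.parts or []
    # Some parts may carry no text, so skip anything empty.
    return "\n".join(p.text for p in parts if getattr(p, "text", None))


# Stand-in object that only mimics the attribute layout used in search.py.
fake_response = SimpleNamespace(
    candidates=[
        SimpleNamespace(
            content=SimpleNamespace(
                parts=[
                    SimpleNamespace(text="Year 6 covers fractions and ratios."),
                    SimpleNamespace(text=None),
                    SimpleNamespace(text="It also introduces algebraic thinking."),
                ]
            )
        )
    ]
)

print(collect_text(fake_response))
```

Returning a plain string like this is one possible design choice; it keeps whatever consumes the tool output independent of the SDK's response classes.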

👉To test, run the code:

cd ~/aidemy-bootstrap/planner/

gcloud config set project $(cat ~/project_id.txt)

export PROJECT_ID=$(gcloud config get project)

source env/bin/activate

python search.py

You should see the Gemini response containing search results related to the Year 6 Mathematics syllabus. The exact output will depend on the search results: the script first prints the full response object, and then the text of each part of the answer.

If you see search results, the Google Search tool is working correctly! Go ahead and stop the script by pressing Ctrl+C if the script is still running.

👉And remove the last part in the code.

if __name__ == "__main__":
    response = search_latest_resource("What are the syllabus for Year 6 Mathematics?", "Expanding on fractions, ratios, algebraic thinking, and problem-solving strategies.", "Mathematics", 6)
    for each in response.candidates[0].content.parts:
        print(each.text)

👉Exit virtual environment, in terminal run:

deactivate

Congratulations! You have now built three powerful tools for your planner agent: an API connector, a database connector, and a Google Search tool. These tools will enable the agent to access the information and capabilities it needs to create effective teaching plans.

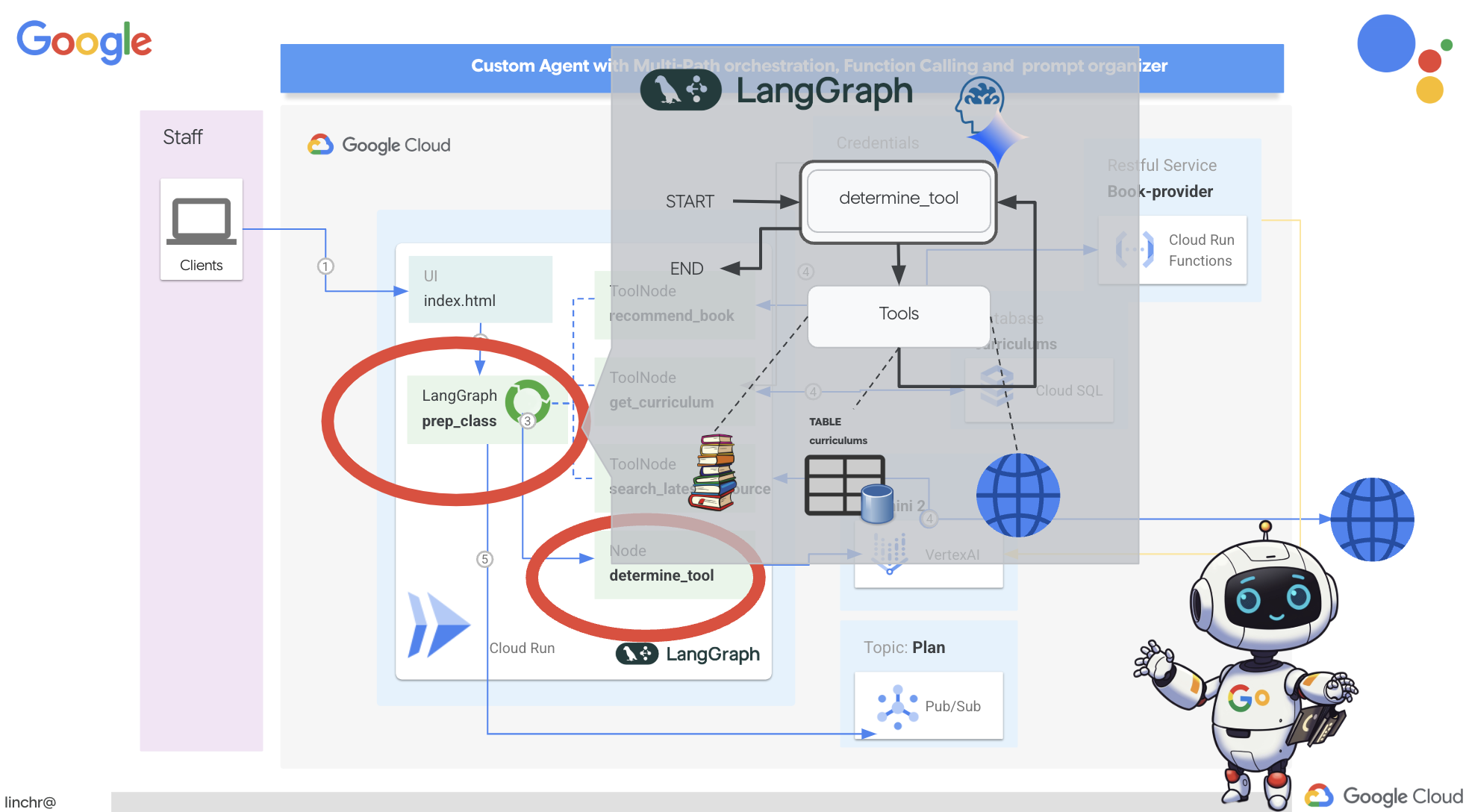

7. Orchestrating with LangGraph

Now that we have built our individual tools, it's time to orchestrate them using LangGraph. This will allow us to create a more sophisticated "planner" agent that can intelligently decide which tools to use and when, based on the user's request.

LangGraph is a Python library designed to make it easier to build stateful, multi-actor applications using Large Language Models (LLMs). Think of it as a framework for orchestrating complex conversations and workflows involving LLMs, tools, and other agents.

Key Concepts:

- Graph Structure: LangGraph represents your application's logic as a directed graph. Each node in the graph represents a step in the process (e.g., a call to an LLM, a tool invocation, a conditional check). Edges define the flow of execution between nodes.

- State: LangGraph manages the state of your application as it moves through the graph. This state can include variables like the user's input, the results of tool calls, intermediate outputs from LLMs, and any other information that needs to be preserved between steps.

- Nodes: Each node represents a computation or interaction. They can be:

  - Tool Nodes: Use a tool (e.g., perform a web search, query a database).

  - Function Nodes: Execute a Python function.

- Edges: Connect nodes, defining the flow of execution. They can be:

  - Direct Edges: A simple, unconditional flow from one node to another.

  - Conditional Edges: The flow depends on the outcome of a conditional node.
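To make these concepts concrete, here is a toy sketch of a graph runner in plain Python. It is not LangGraph's API: the TinyGraph class, its method names, and the END sentinel are all hypothetical, chosen only to show how nodes update a shared state and how direct and conditional edges pick the next step.

```python
from typing import Callable, Dict

State = Dict[str, object]
END = "__end__"  # sentinel used by this sketch only


class TinyGraph:
    """Toy directed graph: nodes update a shared state, edges pick the next node."""

    def __init__(self) -> None:
        self.nodes: Dict[str, Callable[[State], State]] = {}
        self.edges: Dict[str, Callable[[State], str]] = {}

    def add_node(self, name: str, fn: Callable[[State], State]) -> None:
        self.nodes[name] = fn

    def add_edge(self, src: str, dst: str) -> None:
        # Direct edge: always go to the same destination.
        self.edges[src] = lambda state: dst

    def add_conditional_edge(self, src: str, router: Callable[[State], str]) -> None:
        # Conditional edge: the router inspects the state and names the next node.
        self.edges[src] = router

    def run(self, start: str, state: State) -> State:
        current = start
        while current != END:
            # Merge the node's output into the state carried between steps.
            state = {**state, **self.nodes[current](state)}
            router = self.edges.get(current)
            current = router(state) if router else END
        return state


graph = TinyGraph()
graph.add_node("check", lambda s: {"needs_search": "latest" in s["request"]})
graph.add_node("search", lambda s: {"notes": "searched the web"})
graph.add_node("answer", lambda s: {"answer": f"plan using: {s.get('notes', 'no extra notes')}"})
graph.add_conditional_edge("check", lambda s: "search" if s["needs_search"] else "answer")
graph.add_edge("search", "answer")
graph.add_edge("answer", END)

result = graph.run("check", {"request": "find the latest geometry resources"})
print(result["answer"])
```

The same request without the word "latest" would skip the search node entirely, which is the kind of branching a conditional edge provides.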

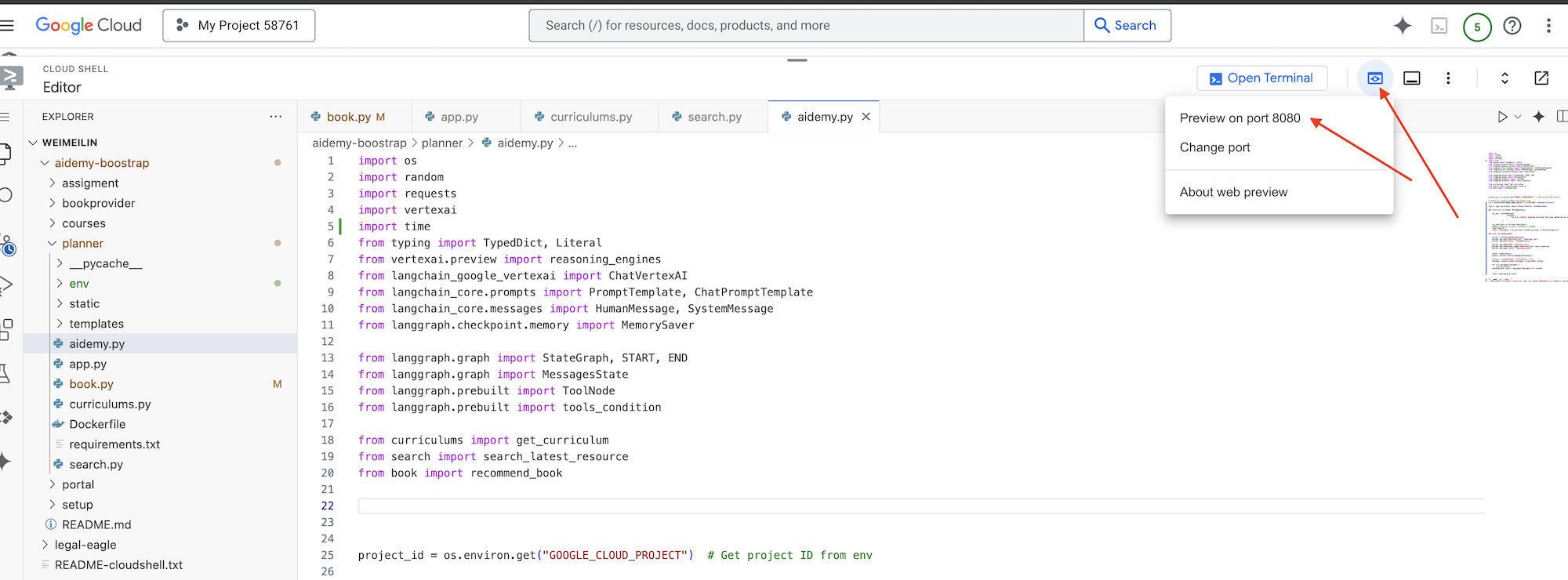

We will use LangGraph to implement the orchestration. Let's edit the aidemy.py file in the planner folder to define our LangGraph logic.

👉 Append the following code to the end of aidemy.py:

tools = [get_curriculum, search_latest_resource, recommend_book]

def determine_tool(state: MessagesState):
    llm = ChatVertexAI(model_name="gemini-2.0-flash-001", location=get_next_region())
    sys_msg = SystemMessage(
        content=(
            f"""You are a helpful teaching assistant that helps gather all needed information.
            Your ultimate goal is to create a detailed 3-week teaching plan.
            You have access to tools that help you gather information.
            Based on the user request, decide which tool(s) are needed.
            """
        )
    )

    llm_with_tools = llm.bind_tools(tools)
    return {"messages": llm_with_tools.invoke([sys_msg] + state["messages"])}

This function is responsible for taking the current state of the conversation, providing the LLM with a system message, and then asking the LLM to generate a response. The LLM can either respond directly to the user or choose to use one of the available tools.

- tools: This list represents the set of tools that the agent has available to it. It contains three tool functions that we defined in the previous steps: get_curriculum, search_latest_resource, and recommend_book.

- llm.bind_tools(tools): It "binds" the tools list to the llm object. Binding the tools tells the LLM that these tools are available and provides the LLM with information about how to use them (e.g., the names of the tools, the parameters they accept, and what they do).
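To get a feel for the kind of information a tool carries (its name, its parameters, and what it does), the sketch below builds a plain description from a function's signature and docstring using the standard inspect module. This is only an illustration, not how bind_tools works internally; describe_tool is a hypothetical helper, and the get_curriculum shown here is a stand-in with a placeholder body.

```python
import inspect
from typing import Any, Callable, Dict


def describe_tool(fn: Callable[..., Any]) -> Dict[str, Any]:
    """Build a plain description of a function (illustrative only)."""
    signature = inspect.signature(fn)
    params = {
        name: getattr(p.annotation, "__name__", str(p.annotation))
        for name, p in signature.parameters.items()
    }
    return {
        "name": fn.__name__,
        "description": inspect.getdoc(fn) or "",
        "parameters": params,
    }


def get_curriculum(year: int, subject: str) -> str:
    """Get the school curriculum for a given year and subject."""
    return f"curriculum for year {year} {subject}"  # placeholder body


print(describe_tool(get_curriculum))
```

This is also why clear docstrings and type hints on tool functions matter: they are a large part of what the model has to go on when choosing a tool.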

👉 Append the following code to the end of aidemy.py:

def prep_class(prep_needs):
    builder = StateGraph(MessagesState)
    builder.add_node("determine_tool", determine_tool)
    builder.add_node("tools", ToolNode(tools))

    builder.add_edge(START, "determine_tool")
    builder.add_conditional_edges("determine_tool",tools_condition)
    builder.add_edge("tools", "determine_tool")

    memory = MemorySaver()
    graph = builder.compile(checkpointer=memory)

    config = {"configurable": {"thread_id": "1"}}
    messages = graph.invoke({"messages": prep_needs},config)
    print(messages)
    for m in messages['messages']:
        m.pretty_print()
    teaching_plan_result = messages["messages"][-1].content

    return teaching_plan_result

if __name__ == "__main__":
    prep_class("I'm doing a course for year 5 on subject Mathematics in Geometry, , get school curriculum, and come up with few books recommendation plus search latest resources on the internet base on the curriculum outcome. And come up with a 3 week teaching plan")

Explanation:

- StateGraph(MessagesState): Creates a StateGraph object. A StateGraph is a core concept in LangGraph. It represents the workflow of your agent as a graph, where each node in the graph represents a step in the process. Think of it as defining the blueprint for how the agent will reason and act.

- Conditional Edge: Originating from the "determine_tool" node, the tools_condition argument is a prebuilt LangGraph function that decides which edge to follow based on the output of the determine_tool function: if the LLM's latest message contains tool calls, the flow goes to the "tools" node; otherwise the graph ends. Conditional edges allow the graph to branch based on the LLM's decision about which tool to use (or whether to respond to the user directly). This is where the agent's "intelligence" comes into play – it can dynamically adapt its behavior based on the situation.

- Loop: Adds an edge to the graph that connects the "tools" node back to the "determine_tool" node. This creates a loop in the graph, allowing the agent to repeatedly use tools until it has gathered enough information to complete the task and provide a satisfactory answer. This loop is crucial for complex tasks that require multiple steps of reasoning and information gathering.

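The decide, call tools, decide again loop described above can be sketched without any framework. The code below is a simplified illustration and not LangGraph itself: run_agent, route, and scripted_model are hypothetical names, messages are plain dictionaries, and the "model" is a scripted stand-in that asks for one tool and then answers.

```python
from typing import Callable, Dict, List

Message = Dict[str, object]


def route(last_message: Message) -> str:
    """Mimic the routing idea: go to tools if the model asked for any, else stop."""
    return "tools" if last_message.get("tool_calls") else "end"


def run_agent(model: Callable[[List[Message]], Message],
              tools: Dict[str, Callable[..., str]],
              user_request: str,
              max_steps: int = 10) -> List[Message]:
    messages: List[Message] = [{"role": "user", "content": user_request}]
    for _ in range(max_steps):  # guard against an endless loop
        reply = model(messages)
        messages.append(reply)
        if route(reply) == "end":
            break
        # Run every requested tool and feed the results back to the model.
        for call in reply["tool_calls"]:
            result = tools[call["name"]](**call["args"])
            messages.append({"role": "tool", "name": call["name"], "content": result})
    return messages


def scripted_model(messages: List[Message]) -> Message:
    """Stand-in for the LLM: asks for one tool, then answers."""
    if not any(m["role"] == "tool" for m in messages):
        return {"role": "ai", "content": "",
                "tool_calls": [{"name": "get_curriculum",
                                "args": {"year": 5, "subject": "Mathematics"}}]}
    return {"role": "ai", "content": "Here is a 3-week plan.", "tool_calls": []}


fake_tools = {"get_curriculum": lambda year, subject: f"Year {year} {subject} outline"}
history = run_agent(scripted_model, fake_tools, "Plan a geometry course")
print([m["role"] for m in history])
```

The max_steps cap is an addition of this sketch; it is a common safeguard when a loop's exit depends on a model's decision.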
Now, let's test our planner agent to see how it orchestrates the different tools.

This code will run the prep_class function with a specific user input, simulating a request to create a teaching plan for 5th-grade Mathematics in Geometry, using the curriculum, book recommendations, and the latest internet resources.

👉 In your terminal, if you've closed it or the environment variables are no longer set, re-run the following commands

gcloud config set project $(cat ~/project_id.txt)

export PROJECT_ID=$(gcloud config get project)

export BOOK_PROVIDER_URL=$(gcloud run services describe book-provider --region=us-central1 --project=$PROJECT_ID --format="value(status.url)")

export INSTANCE_NAME="aidemy"

export REGION="us-central1"

export DB_USER="postgres"

export DB_PASS="1234qwer"

export DB_NAME="aidemy-db"

👉Run the code:

cd ~/aidemy-bootstrap/planner/

source env/bin/activate

pip install -r requirements.txt

python aidemy.py

Watch the log in the terminal. You should see evidence that the agent is calling all three tools (getting the school curriculum, getting book recommendations, and searching for the latest resources) before providing the final teaching plan. This demonstrates that the LangGraph orchestration is working correctly, and the agent is intelligently using all available tools to fulfill the user's request.

================================ Human Message =================================

I'm doing a course for year 5 on subject Mathematics in Geometry, , get school curriculum, and come up with few books recommendation plus search latest resources on the internet base on the curriculum outcome. And come up with a 3 week teaching plan

================================== Ai Message ==================================

Tool Calls:

get_curriculum (xxx)

Call ID: xxx

Args:

year: 5.0

subject: Mathematics

================================= Tool Message =================================

Name: get_curriculum

Introduction to fractions, decimals, and percentages, along with foundational geometry and problem-solving techniques.

================================== Ai Message ==================================

Tool Calls:

search_latest_resource (xxxx)

Call ID: xxxx

Args:

year: 5.0

search_text: Geometry

curriculum: {"content": "Introduction to fractions, decimals, and percentages, along with foundational geometry and problem-solving techniques."}

subject: Mathematics

================================= Tool Message =================================

Name: search_latest_resource

candidates=[Candidate(content=Content(parts=[Part(.....) automatic_function_calling_history=[] parsed=None

================================== Ai Message ==================================

Tool Calls:

recommend_book (93b48189-4d69-4c09-a3bd-4e60cdc5f1c6)

Call ID: 93b48189-4d69-4c09-a3bd-4e60cdc5f1c6

Args:

query: Mathematics Geometry Year 5

================================= Tool Message =================================

Name: recommend_book

[{.....}]

================================== Ai Message ==================================

Based on the curriculum outcome, here is a 3-week teaching plan for year 5 Mathematics Geometry:

**Week 1: Introduction to Shapes and Properties**

.........

Stop the script by pressing Ctrl+C if it is still running.
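If the log is long, a small helper can list which tools were requested and in what order. The sketch below assumes each AI message exposes a tool_calls list whose entries have a "name" key, which matches the shape of the log above. The called_tools helper is hypothetical, and the SimpleNamespace objects are stand-ins for the real message objects.

```python
from types import SimpleNamespace
from typing import List


def called_tools(messages) -> List[str]:
    """Return tool names in call order, reading each message's tool_calls list."""
    names: List[str] = []
    for message in messages:
        # Messages without tool calls (human, tool, final answer) are skipped.
        for call in getattr(message, "tool_calls", None) or []:
            names.append(call["name"])
    return names


# Stand-in messages that mimic the shape of the log above.
sample = [
    SimpleNamespace(content="I'm doing a course for year 5 ..."),
    SimpleNamespace(content="", tool_calls=[{"name": "get_curriculum", "args": {}}]),
    SimpleNamespace(content="Introduction to fractions ..."),
    SimpleNamespace(content="", tool_calls=[{"name": "search_latest_resource", "args": {}}]),
    SimpleNamespace(content="", tool_calls=[{"name": "recommend_book", "args": {}}]),
    SimpleNamespace(content="Based on the curriculum outcome ..."),
]

print(called_tools(sample))
```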

👉 (THIS STEP IS OPTIONAL) replace the testing code with a different prompt, which requires different tools to be called.

if __name__ == "__main__":
    prep_class("I'm doing a course for year 5 on subject Mathematics in Geometry, search latest resources on the internet base on the subject. And come up with a 3 week teaching plan")

👉 If you've closed your terminal or the environment variables are no longer set, re-run the following commands

gcloud config set project $(cat ~/project_id.txt)

export PROJECT_ID=$(gcloud config get project)

export BOOK_PROVIDER_URL=$(gcloud run services describe book-provider --region=us-central1 --project=$PROJECT_ID --format="value(status.url)")

export INSTANCE_NAME="aidemy"

export REGION="us-central1"

export DB_USER="postgres"

export DB_PASS="1234qwer"

export DB_NAME="aidemy-db"

👉 (THIS STEP IS OPTIONAL, do this ONLY IF you ran the previous step) Run the code again:

cd ~/aidemy-bootstrap/planner/

source env/bin/activate

python aidemy.py

What did you notice this time? Which tools did the agent call? You should see that the agent no longer calls the recommend_book tool. In the sample output below it calls get_curriculum and search_latest_resource; your run may differ slightly, because the LLM decides which tools the request actually needs. Since this prompt does not ask for book recommendations, the LLM is smart enough to skip that tool.

================================ Human Message =================================

I'm doing a course for year 5 on subject Mathematics in Geometry, search latest resources on the internet base on the subject. And come up with a 3 week teaching plan

================================== Ai Message ==================================

Tool Calls:

get_curriculum (xxx)

Call ID: xxx

Args:

year: 5.0

subject: Mathematics

================================= Tool Message =================================

Name: get_curriculum

Introduction to fractions, decimals, and percentages, along with foundational geometry and problem-solving techniques.

================================== Ai Message ==================================

Tool Calls:

search_latest_resource (xxx)

Call ID: xxxx

Args:

year: 5.0

subject: Mathematics

curriculum: {"content": "Introduction to fractions, decimals, and percentages, along with foundational geometry and problem-solving techniques."}

search_text: Geometry

================================= Tool Message =================================

Name: search_latest_resource

candidates=[Candidate(content=Content(parts=[Part(.......token_count=40, total_token_count=772) automatic_function_calling_history=[] parsed=None

================================== Ai Message ==================================

Based on the information provided, a 3-week teaching plan for Year 5 Mathematics focusing on Geometry could look like this:

**Week 1: Introducing 2D Shapes**

........

* Use visuals, manipulatives, and real-world examples to make the learning experience engaging and relevant.

Stop the script by pressing Ctrl+C.

👉 (DO NOT SKIP THIS STEP!) Remove the testing code to keep your aidemy.py file clean:

if __name__ == "__main__":
    prep_class("I'm doing a course for year 5 on subject Mathematics in Geometry, search latest resources on the internet base on the subject. And come up with a 3 week teaching plan")

With our agent logic now defined, let's launch the Flask web application. This will provide a familiar form-based interface for teachers to interact with the agent. While chatbot interactions are common with LLMs, we're opting for a traditional form submit UI, as it may be more intuitive for many educators.

👉 If you've closed your terminal or the environment variables are no longer set, re-run the following commands

gcloud config set project $(cat ~/project_id.txt)

export PROJECT_ID=$(gcloud config get project)

export BOOK_PROVIDER_URL=$(gcloud run services describe book-provider --region=us-central1 --project=$PROJECT_ID --format="value(status.url)")

export INSTANCE_NAME="aidemy"

export REGION="us-central1"

export DB_USER="postgres"

export DB_PASS="1234qwer"

export DB_NAME="aidemy-db"

👉 Now, start the Web UI.

cd ~/aidemy-bootstrap/planner/

source env/bin/activate

python app.py

Look for startup messages in the Cloud Shell terminal output. Flask usually prints messages indicating that it's running and on what port.

Running on http://127.0.0.1:8080

The application needs to keep running to serve requests.

👉 From the "Web preview" menu in the top right corner, choose Preview on port 8080. Cloud Shell will open a new browser tab or window with the web preview of your application.

In the application interface, select 5 for Year, select Mathematics as the subject, and type Geometry in the Add-on Request field.

👉 If you navigated away from your application UI, navigate back and you should see the generated output.

👉 In your terminal, stop the script by pressing Ctrl+C.

👉 In your terminal, exit the virtual environment:

deactivate

8. Deploying planner agent to the cloud

Build and push image to registry

Time to deploy this to the cloud.

👉 In the terminal, create an artifacts repository to store the docker image we are going to build.

gcloud artifacts repositories create agent-repository \

--repository-format=docker \

--location=us-central1 \

--description="My agent repository"

You should see Created repository [agent-repository].

👉 Run the following command to build the Docker image.

cd ~/aidemy-bootstrap/planner/

gcloud config set project $(cat ~/project_id.txt)

export PROJECT_ID=$(gcloud config get project)

docker build -t gcr.io/${PROJECT_ID}/aidemy-planner .

👉 We need to retag the image so that it's hosted in Artifact Registry instead of GCR and push the tagged image to Artifact Registry:

gcloud config set project $(cat ~/project_id.txt)

export PROJECT_ID=$(gcloud config get project)

docker tag gcr.io/${PROJECT_ID}/aidemy-planner us-central1-docker.pkg.dev/${PROJECT_ID}/agent-repository/aidemy-planner

docker push us-central1-docker.pkg.dev/${PROJECT_ID}/agent-repository/aidemy-planner

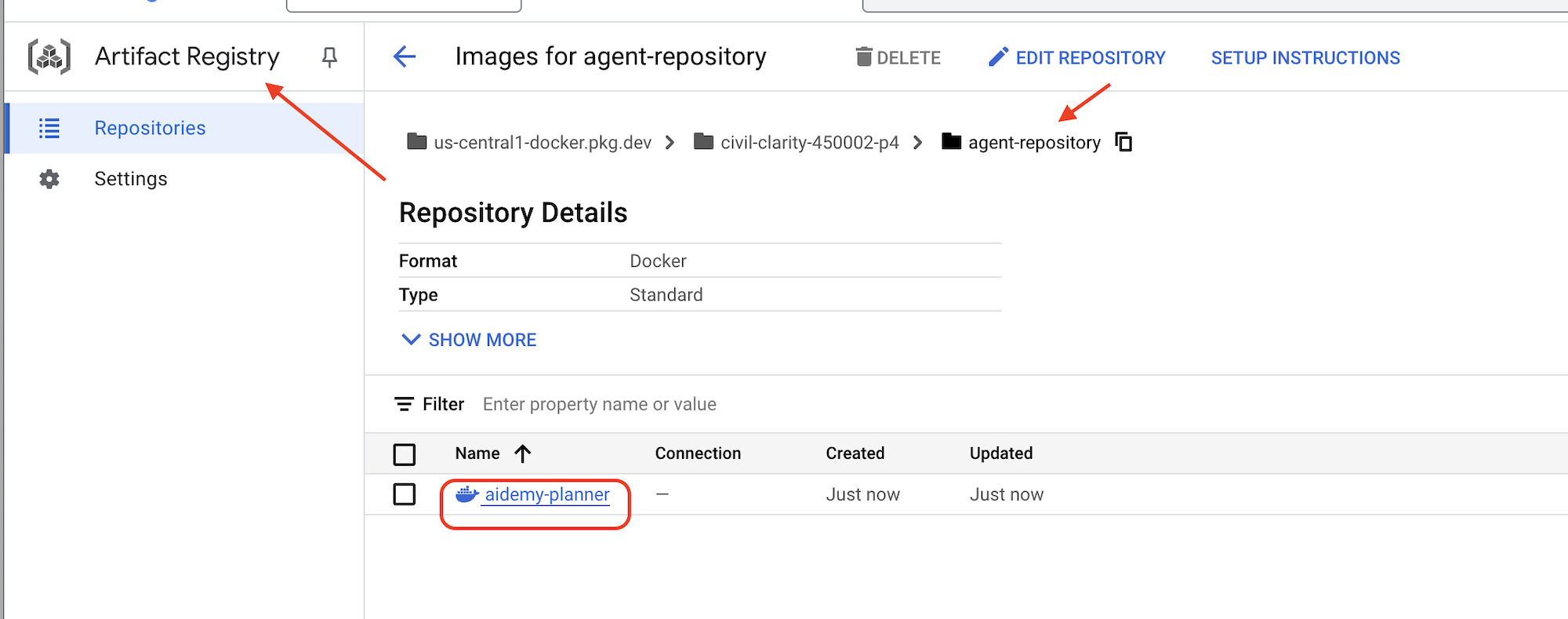

Once the push is complete, you can verify that the image is successfully stored in Artifact Registry.

👉 Navigate to the Artifact Registry in the Google Cloud Console. You should find the aidemy-planner image within the agent-repository repository.

Securing Database Credentials with Secret Manager

To securely manage and access database credentials, we'll use Google Cloud Secret Manager. This prevents hardcoding sensitive information in our application code and enhances security.

We'll create individual secrets for the database username, password, and database name. This approach allows us to manage each credential independently.

👉 In the terminal run the following:

gcloud secrets create db-user

printf "postgres" | gcloud secrets versions add db-user --data-file=-

gcloud secrets create db-pass

printf "1234qwer" | gcloud secrets versions add db-pass --data-file=-

gcloud secrets create db-name

printf "aidemy-db" | gcloud secrets versions add db-name --data-file=-

Using Secret Manager is an important step in securing your application and preventing accidental exposure of sensitive credentials. It follows security best practices for cloud deployments.
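Later in this section, these secrets are exposed to the container as the environment variables DB_USER, DB_PASS, and DB_NAME. As a minimal sketch of how application code might consume them, the example below reads the three variables and fails early if any is missing. The DbSettings class and load_db_settings function are hypothetical names, not part of the workshop code, and the demo passes an explicit mapping so it runs without real secrets.

```python
import os
from dataclasses import dataclass
from typing import Mapping


@dataclass(frozen=True)
class DbSettings:
    user: str
    password: str
    name: str


def load_db_settings(env: Mapping[str, str] = os.environ) -> DbSettings:
    """Read DB_USER, DB_PASS and DB_NAME, failing early if any is missing."""
    missing = [key for key in ("DB_USER", "DB_PASS", "DB_NAME") if not env.get(key)]
    if missing:
        raise RuntimeError(f"Missing environment variables: {', '.join(missing)}")
    return DbSettings(user=env["DB_USER"], password=env["DB_PASS"], name=env["DB_NAME"])


# Example with an explicit mapping, so the sketch runs without real secrets.
settings = load_db_settings(
    {"DB_USER": "postgres", "DB_PASS": "example-only", "DB_NAME": "aidemy-db"}
)
print(settings.user, settings.name)
```

Failing at startup rather than at the first database call tends to make a misconfigured deployment easier to spot.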

Deploy to Cloud Run

Cloud Run is a fully managed serverless platform that allows you to deploy containerized applications quickly and easily. It abstracts away the infrastructure management, letting you focus on writing and deploying your code. We'll be deploying our planner as a Cloud Run service.

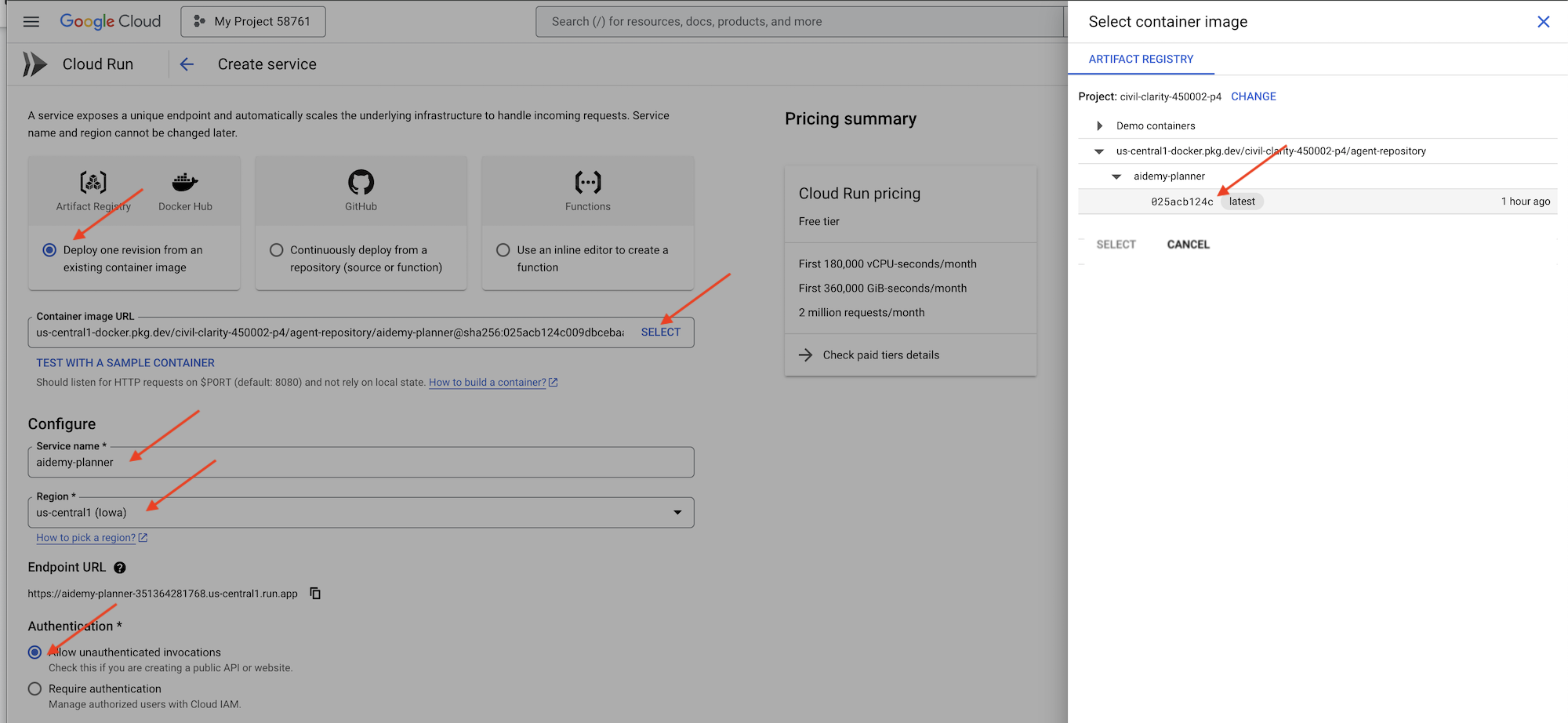

👉In the Google Cloud Console, navigate to "Cloud Run". Click on DEPLOY CONTAINER and select SERVICE. Configure your Cloud Run service:

- Container image: Click "Select" in the URL field. Find the image URL you pushed to Artifact Registry (e.g., us-central1-docker.pkg.dev/YOUR_PROJECT_ID/agent-repository/aidemy-planner/YOUR_IMG).

- Service name: aidemy-planner

- Region: Select the us-central1 region.

- Authentication: For the purpose of this workshop, you can select "Allow unauthenticated invocations". For production, you'll likely want to restrict access.

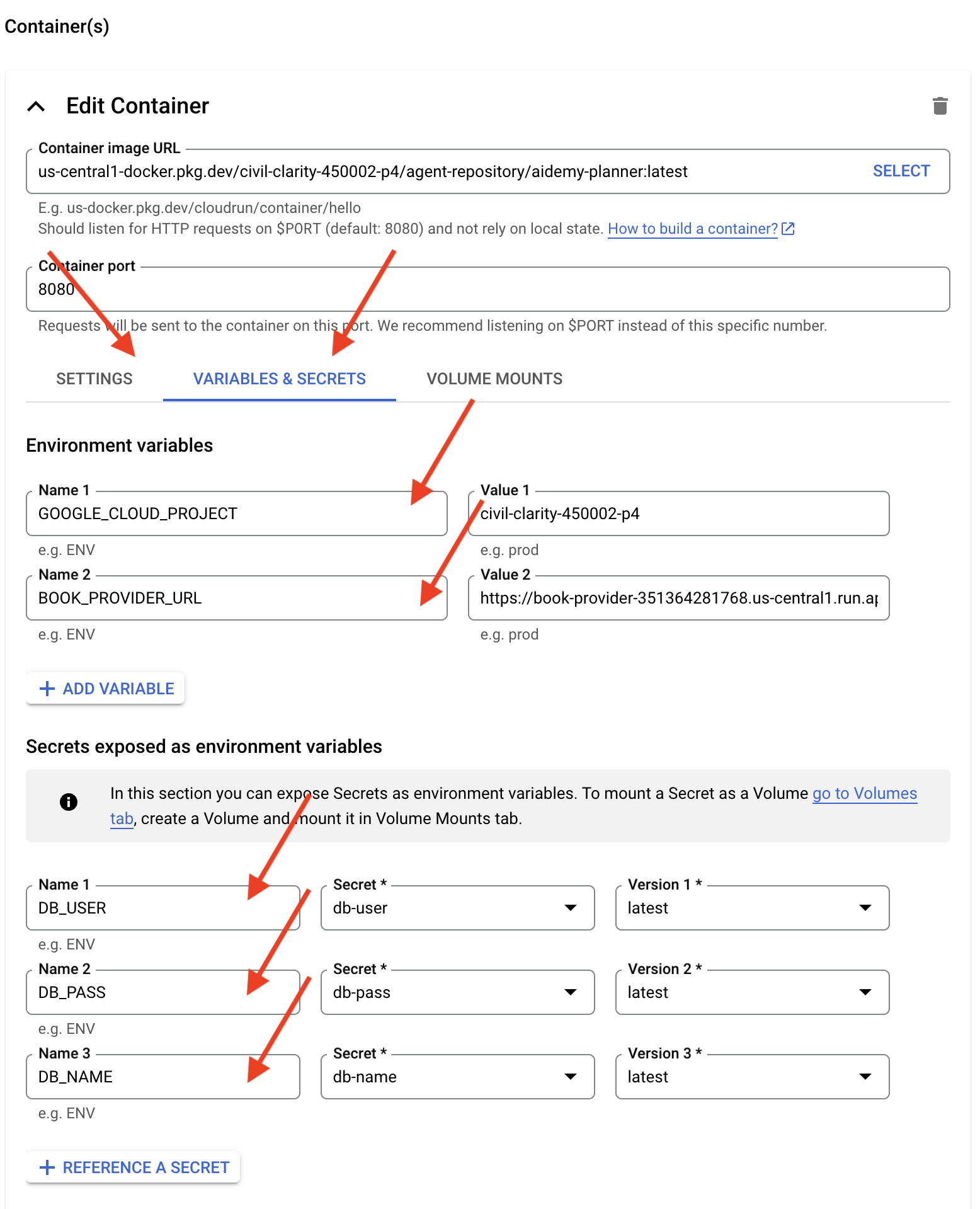

- Expand the Container(s), Volumes, Networking, Security section, and set the following under the Container(s) tab:

  - Setting tab:

    - Resources

      - memory: 2GiB

  - Variables & Secrets tab:

    - Environment variables, add the following variables by clicking the + Add Variable button:

      - Add name: GOOGLE_CLOUD_PROJECT and value: <YOUR_PROJECT_ID>

      - Add name: BOOK_PROVIDER_URL, and set the value to your book-provider function URL, which you can determine using the following command in the terminal:

        gcloud config set project $(cat ~/project_id.txt)

        gcloud run services describe book-provider \
          --region=us-central1 \
          --project=$PROJECT_ID \
          --format="value(status.url)"

    - Under the Secrets exposed as environment variables section, add the following secrets by clicking the + Reference as a secret button:

      - Add name: DB_USER, secret: select db-user and version: latest

      - Add name: DB_PASS, secret: select db-pass and version: latest

      - Add name: DB_NAME, secret: select db-name and version: latest

Leave other values as default.

👉 Click CREATE.

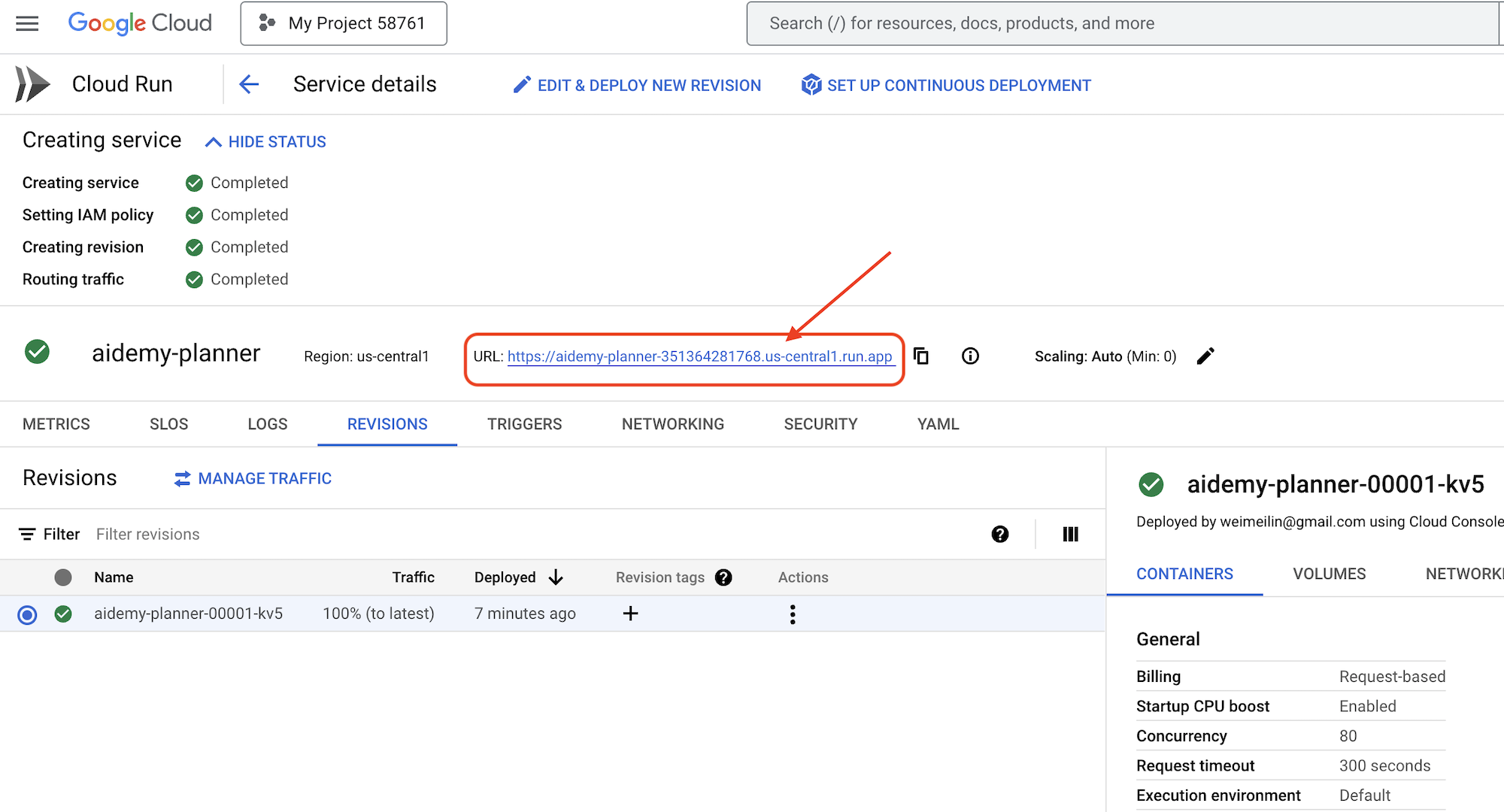

Cloud Run will deploy your service.

Once deployed, if you are not already on the detail page, click on the service name to go to its detail page. You can find the deployed URL available on the top.

👉 In the application interface, select 7 for the Year, choose Mathematics as the subject, and enter Algebra in the Add-on Request field.

👉 Click Generate Plan. This will provide the agent with the necessary context to generate a tailored lesson plan.

Congratulations! You've successfully created a teaching plan using our powerful AI agent. This demonstrates the potential of agents to significantly reduce workload and streamline tasks, ultimately improving efficiency and making life easier for educators.

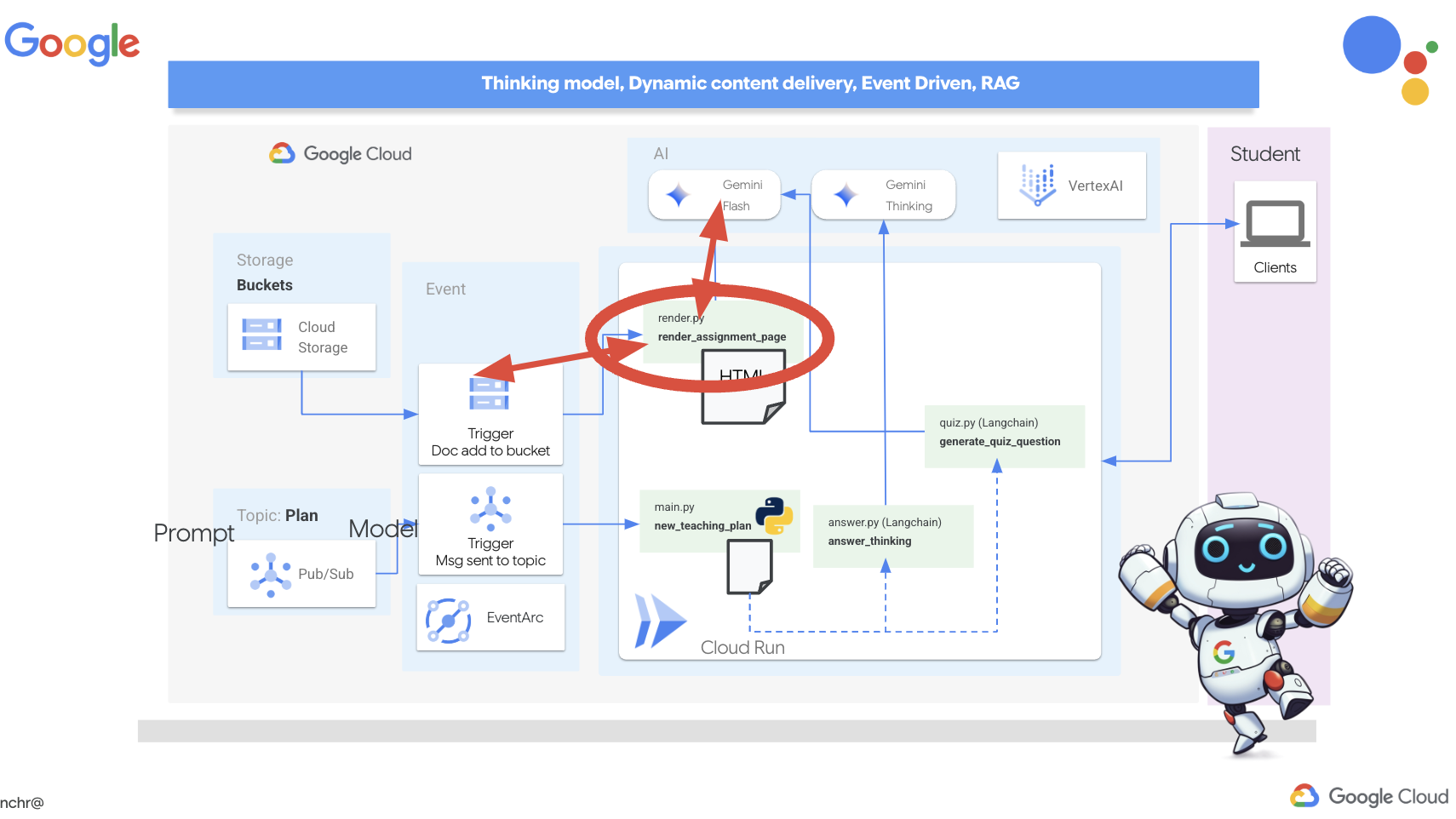

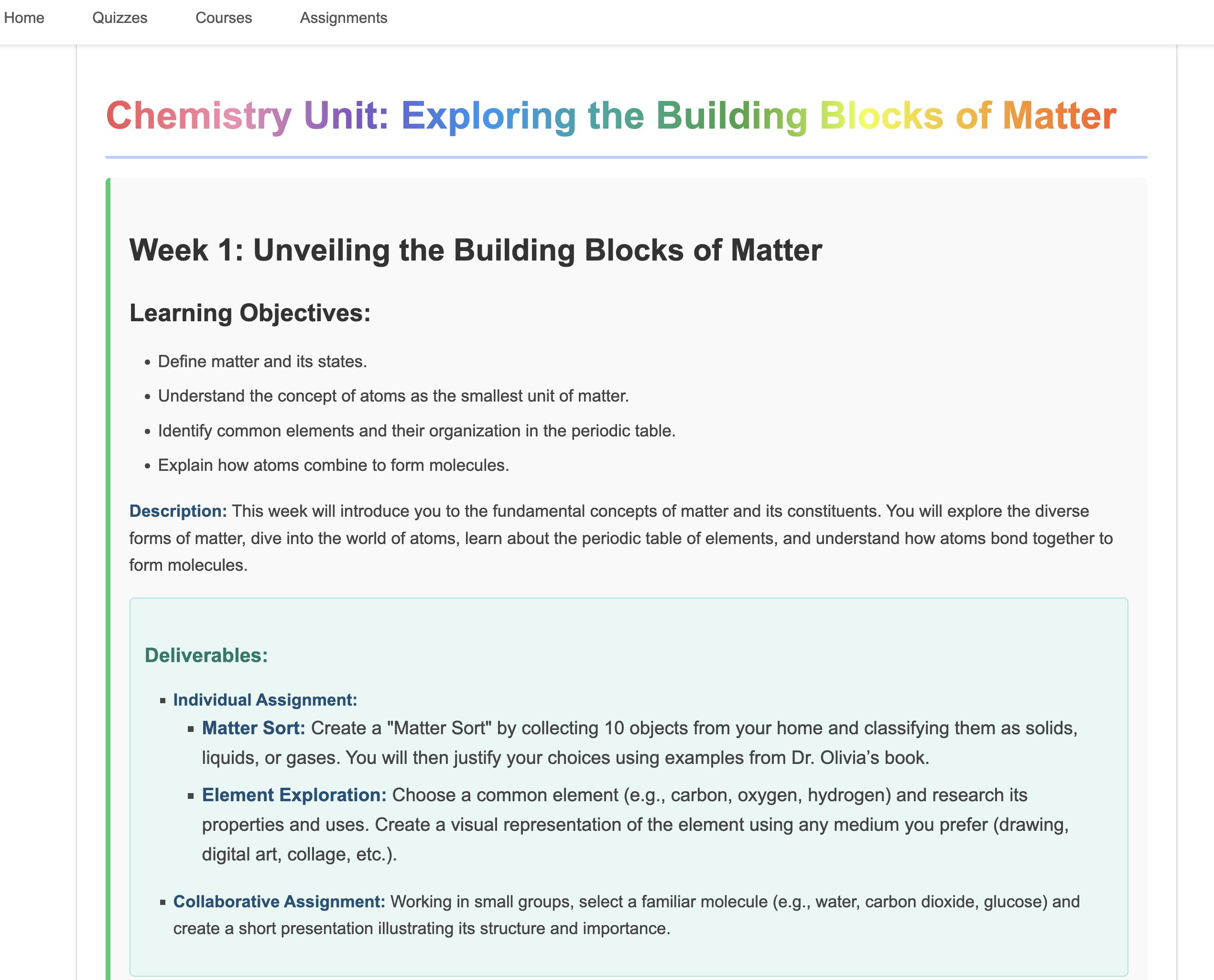

9. Multi-agent systems

Now that we've successfully implemented the teaching plan creation tool, let's shift our focus to building the student portal. This portal will provide students with access to quizzes, audio recaps, and assignments related to their coursework. Given the scope of this functionality, we'll leverage the power of multi-agent systems to create a modular and scalable solution.

As we discussed earlier, instead of relying on a single agent to handle everything, a multi-agent system allows us to break down the workload into smaller, specialized tasks, each handled by a dedicated agent. This approach offers several key advantages:

Modularity and Maintainability: Instead of creating a single agent that does everything, build smaller, specialized agents with well-defined responsibilities. This modularity makes the system easier to understand, maintain, and debug. When a problem arises, you can isolate it to a specific agent, rather than having to sift through a massive codebase.

Scalability: Scaling a single, complex agent can be a bottleneck. With a multi-agent system, you can scale individual agents based on their specific needs. For example, if one agent is handling a high volume of requests, you can easily spin up more instances of that agent without affecting the rest of the system.

Team Specialization: Think of it like this: you wouldn't ask one engineer to build an entire application from scratch. Instead, you assemble a team of specialists, each with expertise in a particular area. Similarly, a multi-agent system allows you to leverage the strengths of different LLMs and tools, assigning them to agents that are best suited for specific tasks.

Parallel Development: Different teams can work on different agents concurrently, speeding up the development process. Since agents are independent, changes to one agent are less likely to impact other agents.

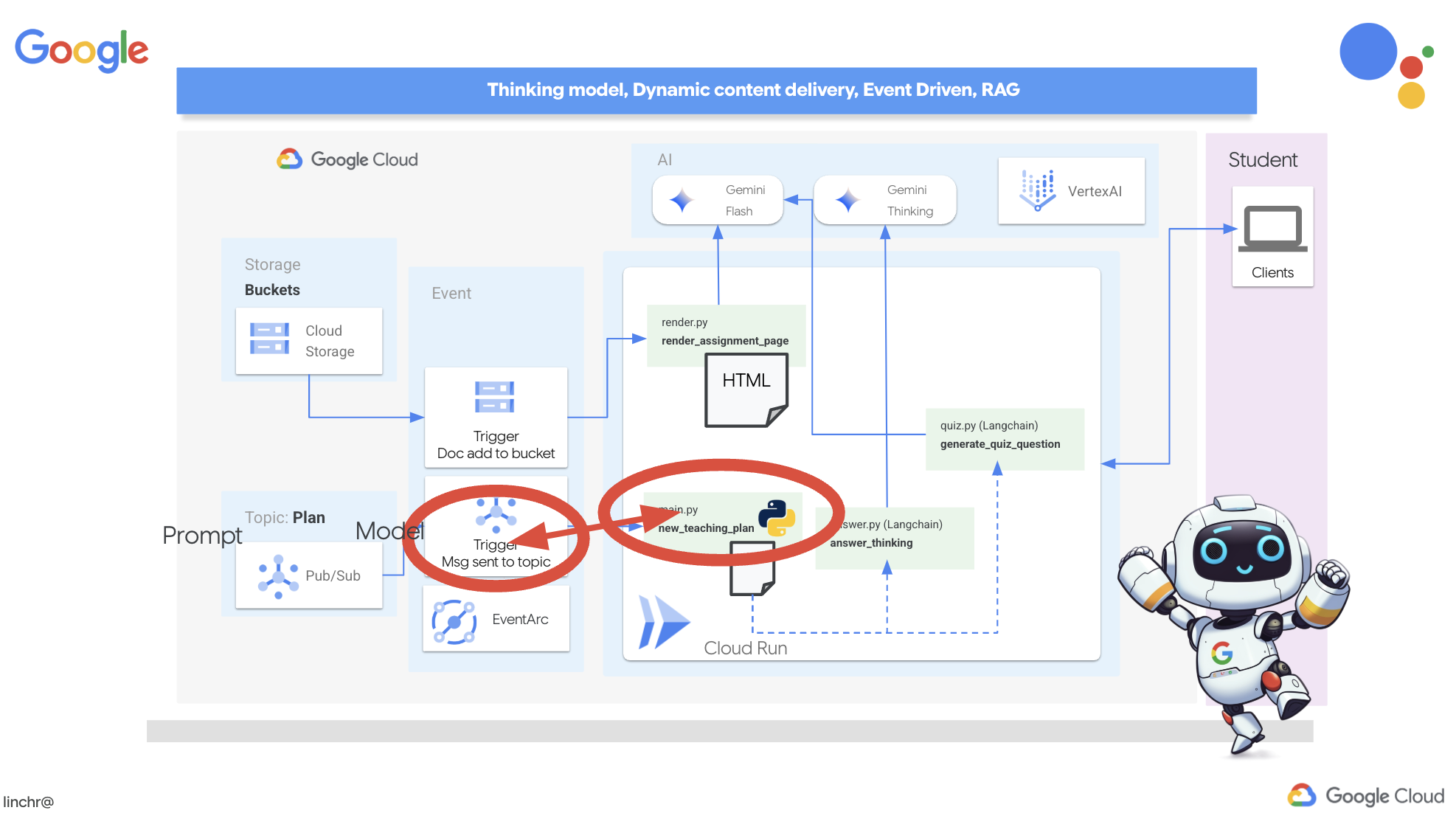

Event Driven Architecture

To enable effective communication and coordination between these agents, we'll employ an event-driven architecture. This means that agents will react to "events" happening within the system.

Agents subscribe to specific event types (e.g., "teaching plan generated," "assignment created"). When an event occurs, the relevant agents are notified and can react accordingly. This decoupling promotes flexibility, scalability, and real-time responsiveness.
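The subscribe-and-react pattern can be illustrated with a tiny in-memory event bus. This is only a sketch of the idea and not the Pub/Sub API: the EventBus class and the two handler descriptions ("quiz agent", "recap agent") are hypothetical, and everything runs in a single process.

```python
from collections import defaultdict
from typing import Callable, DefaultDict, Dict, List

Handler = Callable[[Dict[str, str]], None]


class EventBus:
    """In-memory stand-in for a message broker (not the Pub/Sub API)."""

    def __init__(self) -> None:
        self._subscribers: DefaultDict[str, List[Handler]] = defaultdict(list)

    def subscribe(self, event_type: str, handler: Handler) -> None:
        self._subscribers[event_type].append(handler)

    def publish(self, event_type: str, payload: Dict[str, str]) -> int:
        # Deliver to every handler registered for this event type.
        handlers = self._subscribers.get(event_type, [])
        for handler in handlers:
            handler(payload)
        return len(handlers)


received: List[str] = []
bus = EventBus()
bus.subscribe("plan", lambda event: received.append(f"quiz agent got: {event['teaching_plan']}"))
bus.subscribe("plan", lambda event: received.append(f"recap agent got: {event['teaching_plan']}"))

delivered = bus.publish("plan", {"teaching_plan": "Week 1: 2D shapes"})
print(delivered, received)
```

Note that the publisher never references the subscribers directly; adding a third agent only requires another subscribe call, which is the decoupling described above.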

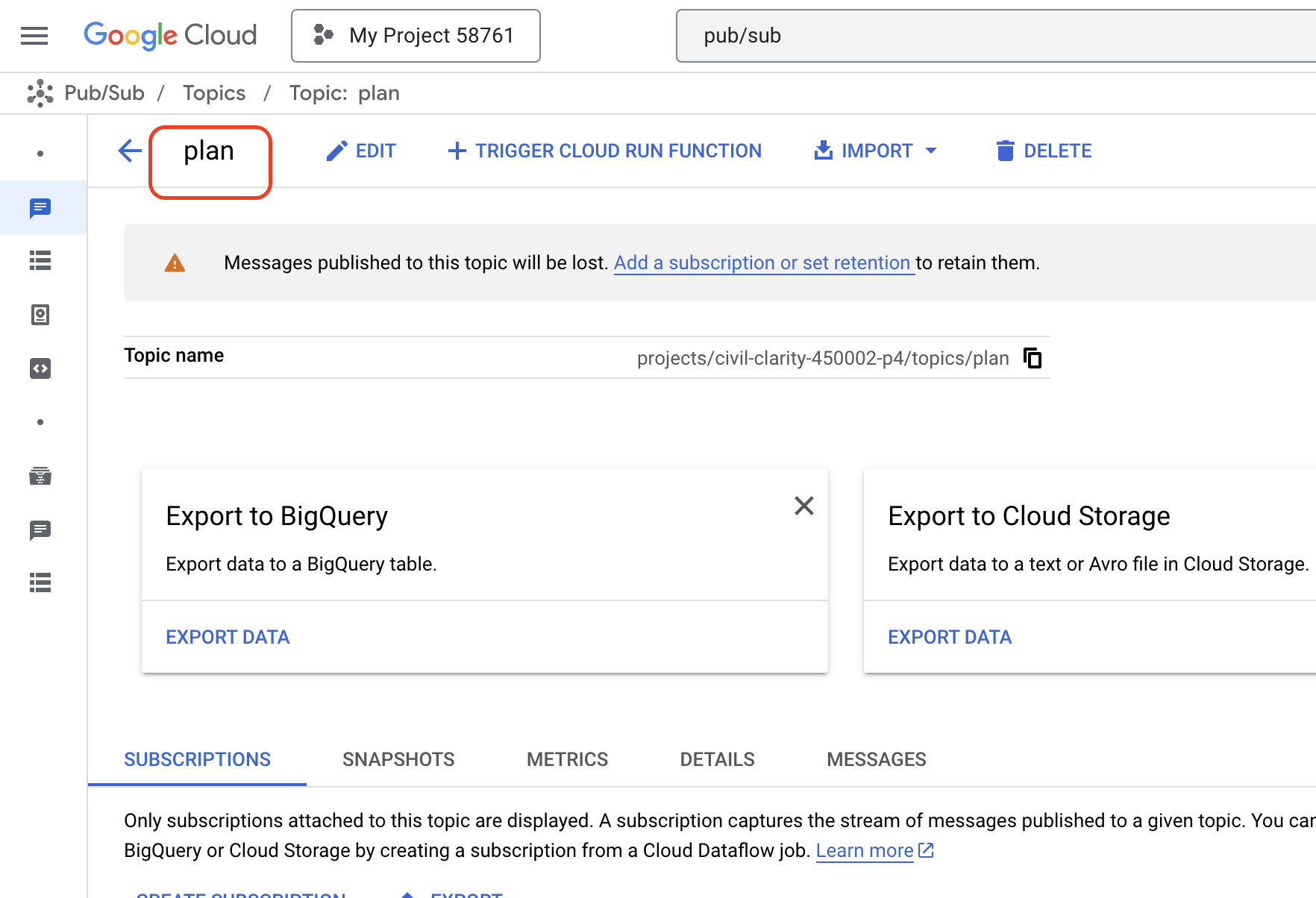

Now, to kick things off, we need a way to broadcast these events. To do this, we will set up a Pub/Sub topic. Let's start by creating a topic called plan.

👉 Go to Pub/Sub in the Google Cloud Console.

👉 Click on the Create Topic button.

👉 Configure the Topic with ID/name plan and uncheck Add a default subscription, leave rest as default and click Create.

The Pub/Sub page will refresh, and you should now see your newly created topic listed in the table.

Now, let's integrate the Pub/Sub event publishing functionality into our planner agent. We'll add a new tool that sends a "plan" event to the Pub/Sub topic we just created. This event will signal to other agents in the system (like those in the student portal) that a new teaching plan is available.

👉Go back to the Cloud Code Editor and open the app.py file located in the planner folder. We will be adding a function that publishes the event. Replace:

##ADD SEND PLAN EVENT FUNCTION HERE

with the following code

def send_plan_event(teaching_plan:str):
    """
    Send the teaching event to the topic called plan

    Args:
        teaching_plan: teaching plan
    """
    publisher = pubsub_v1.PublisherClient()
    print(f"-------------> Sending event to topic plan: {teaching_plan}")
    topic_path = publisher.topic_path(PROJECT_ID, "plan")

    message_data = {"teaching_plan": teaching_plan}
    data = json.dumps(message_data).encode("utf-8")

    future = publisher.publish(topic_path, data)

    return f"Published message ID: {future.result()}"

- send_plan_event: This function takes the generated teaching plan as input, creates a Pub/Sub publisher client, constructs the topic path, converts the teaching plan into a JSON string, and publishes the message to the topic.
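The payload format is worth a closer look, since subscribers have to reverse it. The sketch below isolates the serialization step used by send_plan_event and pairs it with a decoding helper. Both function names are hypothetical, the decoder is an assumption about what a subscriber could do, and no Pub/Sub client is involved.

```python
import json


def encode_plan_event(teaching_plan: str) -> bytes:
    """Serialize the plan the same way send_plan_event does before publishing."""
    return json.dumps({"teaching_plan": teaching_plan}).encode("utf-8")


def decode_plan_event(data: bytes) -> str:
    """Hypothetical subscriber-side helper: turn the bytes back into the plan text."""
    return json.loads(data.decode("utf-8"))["teaching_plan"]


payload = encode_plan_event("Week 1: Introducing 2D shapes")
print(payload)
print(decode_plan_event(payload))
```

Wrapping the plan in a JSON object, rather than sending the raw text, leaves room to add more fields to the event later without changing how it is decoded.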

In the same app.py file

👉Update the prompt to instruct the agent to send the teaching plan event to the Pub/Sub topic after generating the teaching plan. Replace

### ADD send_plan_event CALL

with the following:

send_plan_event(teaching_plan)

By adding the send_plan_event tool and modifying the prompt, we've enabled our planner agent to publish events to Pub/Sub, allowing other components of our system to react to the creation of new teaching plans. In the following sections, we will build on this to get a functional multi-agent system.

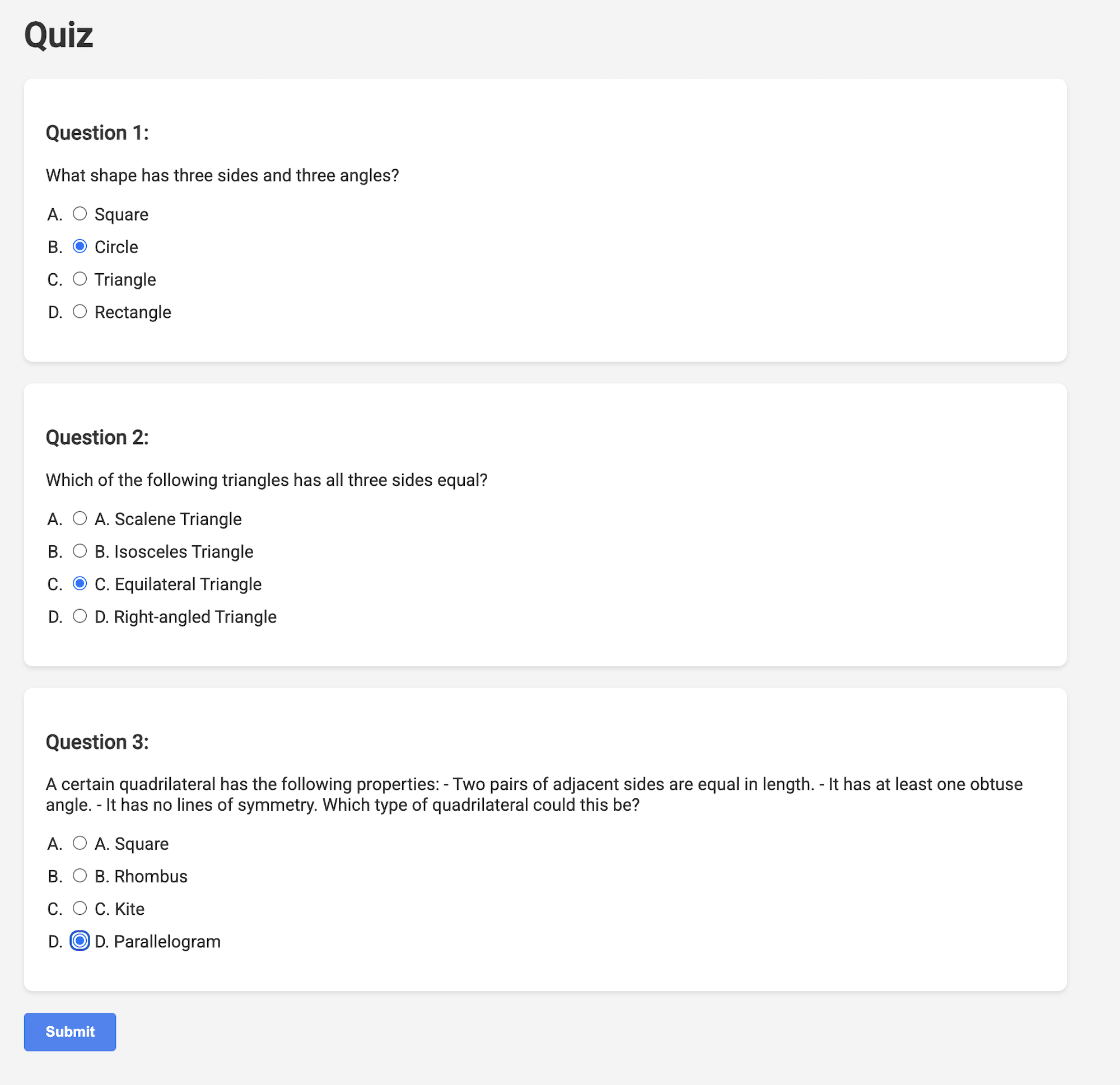

10. Empowering Students with On-Demand Quizzes

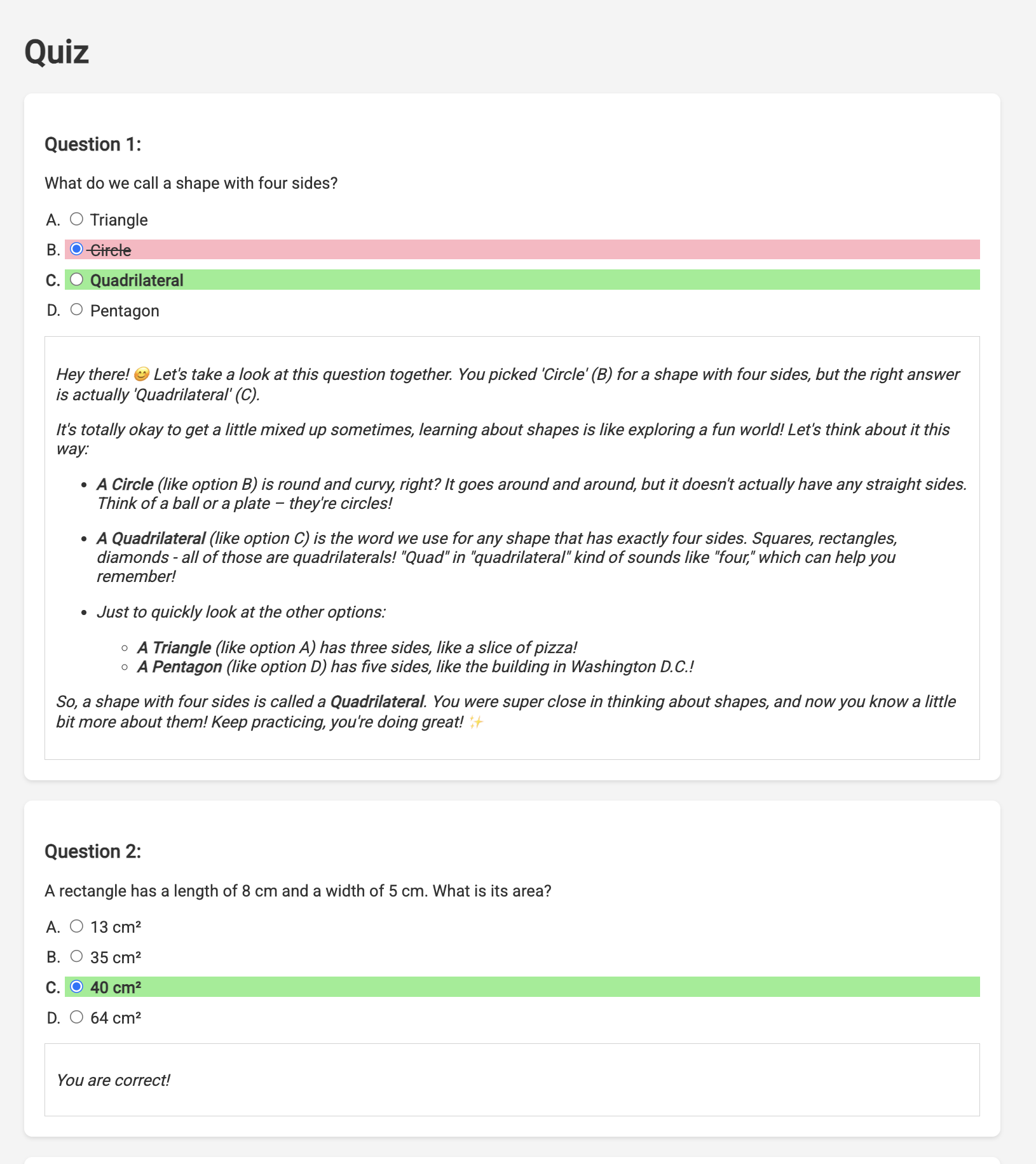

Imagine a learning environment where students have access to an endless supply of quizzes tailored to their specific learning plans. These quizzes provide immediate feedback, including answers and explanations, fostering a deeper understanding of the material. This is the potential we aim to unlock with our AI-powered quiz portal.

To bring this vision to life, we'll build a quiz generation component that can create multiple-choice questions based on the content of the teaching plan.

👉 In the Cloud Code Editor's Explorer pane, navigate to the portal folder. Open the quiz.py file, then copy and paste the following code at the end of the file.

def generate_quiz_question(file_name: str, difficulty: str, region:str ):
    """Generates a single multiple-choice quiz question using the LLM.

    ```json
    {
      "question": "The question itself",
      "options": ["Option A", "Option B", "Option C", "Option D"],
      "answer": "The correct answer letter (A, B, C, or D)"
    }
    ```
    """
    print(f"region: {region}")
    # Connect to resource needed from Google Cloud
    llm = VertexAI(model_name="gemini-2.5-flash-preview-04-17", location=region)

    plan=None
    #load the file using file_name and read content into string call plan
    with open(file_name, 'r') as f:
        plan = f.read()

    parser = JsonOutputParser(pydantic_object=QuizQuestion)

    instruction = f"You'll provide one question with difficulty level of {difficulty}, 4 options as multiple choices and provide the answers, the quiz needs to be related to the teaching plan {plan}"

    prompt = PromptTemplate(
        template="Generates a single multiple-choice quiz question\n {format_instructions}\n {instruction}\n",
        input_variables=["instruction"],
        partial_variables={"format_instructions": parser.get_format_instructions()},
    )

    chain = prompt | llm | parser
    response = chain.invoke({"instruction": instruction})

    print(f"{response}")
    return response

The function creates a JSON output parser that's specifically designed to understand and structure the LLM's output. It uses the QuizQuestion model we defined earlier to ensure the parsed output conforms to the correct format (question, options, and answer).
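To show what "conforms to the correct format" means in practice, here is a minimal standard-library sketch that parses a raw LLM reply and checks the three fields. It is not JsonOutputParser and does not use the QuizQuestion model; parse_quiz_question is a hypothetical helper, and the sample reply is made up for illustration. It also assumes the model may wrap its JSON in a code fence, which is a common but not guaranteed behavior.

```python
import json
from typing import Any, Dict

FENCE = "`" * 3  # built this way so the sketch contains no literal code fence


def parse_quiz_question(raw: str) -> Dict[str, Any]:
    """Parse and check one quiz question (hypothetical helper, not JsonOutputParser)."""
    text = raw.strip()
    if text.startswith(FENCE):
        # Drop the opening fence line and everything from the closing fence on.
        text = text.split("\n", 1)[1] if "\n" in text else ""
        text = text.rsplit(FENCE, 1)[0]
    data = json.loads(text)
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    question = data.get("question")
    options = data.get("options")
    if not isinstance(question, str) or not question.strip():
        raise ValueError("question must be a non-empty string")
    if not isinstance(options, list) or len(options) != 4:
        raise ValueError("options must be a list of four choices")
    if data.get("answer") not in ("A", "B", "C", "D"):
        raise ValueError("answer must be one of A, B, C or D")
    return data


# Made-up reply in the shape described by the function's docstring.
raw_output = (
    FENCE + "json\n"
    + '{"question": "How many sides does a hexagon have?", '
    + '"options": ["4", "5", "6", "8"], "answer": "C"}'
    + "\n" + FENCE
)

quiz = parse_quiz_question(raw_output)
print(quiz["question"], quiz["answer"])
```

Rejecting malformed replies at this point keeps bad data out of the quiz page; a caller could simply retry the generation when a ValueError is raised.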

👉 In your terminal, execute the following commands to set up a virtual environment, install dependencies, and start the agent:

gcloud config set project $(cat ~/project_id.txt)

cd ~/aidemy-bootstrap/portal/

python -m venv env

source env/bin/activate

pip install -r requirements.txt

python app.py

👉 From the "Web preview" menu in the top right corner, choose Preview on port 8080. Cloud Shell will open a new browser tab or window with the web preview of your application.

👉 In the web application, Click on the "Quizzes" link, either in the top navigation bar or from the card on the index page. You should see three randomly generated quizzes displayed for the student. These quizzes are based on the teaching plan and demonstrate the power of our AI-powered quiz generation system.

👉To stop the locally running process, press Ctrl+C in the terminal.

Gemini 2 Thinking for Explanations

Okay, so we've got quizzes, which is a great start! But what if students get something wrong? That's where the real learning happens, right? If we can explain why their answer was off and how to get to the correct one, they're way more likely to remember it. Plus, it helps clear up any confusion and boost their confidence.

That's why we're going to bring in the big guns: Gemini 2's "thinking" model! Think of it like giving the AI a little extra time to think things through before explaining. It lets it give more detailed and better feedback.

We want to see if it can help students by assisting, answering and explaining in detail. To test it out, we'll start with a notoriously tricky subject, Calculus.

👉First, head over to the Cloud Code Editor, in answer.py inside the portal folder. Replace the following function code

def answer_thinking(question, options, user_response, answer, region):
    return ""

with the following code snippet:

def answer_thinking(question, options, user_response, answer, region):
    try:
        llm = VertexAI(model_name="gemini-2.0-flash-001",location=region)

        input_msg = HumanMessage(content=[f"Here is the question: {question}, here are the available options {options}, this student's answer {user_response}, whereas the correct answer is {answer}"])
        prompt_template = ChatPromptTemplate.from_messages(
            [
                SystemMessage(
                    content=(
                        "You are a helpful teacher trying to teach the student on question, you were given the question and a set of multiple choices "
                        "what's the correct answer. use friendly tone"
                    )
                ),
                input_msg,
            ]
        )

        prompt = prompt_template.format()

        response = llm.invoke(prompt)
        print(f"response: {response}")

        return response
    except Exception as e:
        print(f"Error sending message to chatbot: {e}") # Log this error too!
        return f"Unable to process your request at this time. Due to the following reason: {str(e)}"

if __name__ == "__main__":
    question = "Evaluate the limit: lim (x→0) [(sin(5x) - 5x) / x^3]"
    options = ["A) -125/6", "B) -5/3 ", "C) -25/3", "D) -5/6"]
    user_response = "B"
    answer = "A"
    region = "us-central1"
    result = answer_thinking(question, options, user_response, answer, region)

This is a very simple LangChain app: it initializes the Gemini 2 Flash model and instructs it to act as a helpful teacher and provide explanations.

👉Execute the following command in the terminal:

gcloud config set project $(cat ~/project_id.txt)

cd ~/aidemy-bootstrap/portal/

source env/bin/activate

python answer.py

You should see output similar to the following example. The current model may not provide as thorough an explanation.

Okay, I see the question and the choices. The question is to evaluate the limit:

lim (x→0) [(sin(5x) - 5x) / x^3]

You chose option B, which is -5/3, but the correct answer is A, which is -125/6.

It looks like you might have missed a step or made a small error in your calculations. This type of limit often involves using L'Hôpital's Rule or Taylor series expansion. Since we have the form 0/0, L'Hôpital's Rule is a good way to go! You need to apply it multiple times. Alternatively, you can use the Taylor series expansion of sin(x) which is:

sin(x) = x - x^3/3! + x^5/5! - ...

So, sin(5x) = 5x - (5x)^3/3! + (5x)^5/5! - ...

Then, (sin(5x) - 5x) = - (5x)^3/3! + (5x)^5/5! - ...

Finally, (sin(5x) - 5x) / x^3 = - 5^3/3! + (5^5 * x^2)/5! - ...

Taking the limit as x approaches 0, we get -125/6.

Keep practicing, you'll get there!

👉 In the answer.py file, change the model_name from gemini-2.0-flash-001 to gemini-2.0-flash-thinking-exp-01-21 in the answer_thinking function.

This changes the LLM to a different one that does better with reasoning. This will help the model generate better explanations.

👉 Run the answer.py script again to test the new thinking model:

gcloud config set project $(cat ~/project_id.txt)

cd ~/aidemy-bootstrap/portal/

source env/bin/activate

python answer.py

Here is an example of the response from the thinking model that is much more thorough and detailed, providing a step-by-step explanation of how to solve the calculus problem. This highlights the power of "thinking" models in generating high-quality explanations. You should see output similar to this:

Hey there! Let's take a look at this limit problem together. You were asked to evaluate:

lim (x→0) [(sin(5x) - 5x) / x^3]

and you picked option B, -5/3, but the correct answer is actually A, -125/6. Let's figure out why!

It's a tricky one because if we directly substitute x=0, we get (sin(0) - 0) / 0^3 = (0 - 0) / 0 = 0/0, which is an indeterminate form. This tells us we need to use a more advanced technique like L'Hopital's Rule or Taylor series expansion.

Let's use the Taylor series expansion for sin(y) around y=0. Do you remember it? It looks like this:

sin(y) = y - y^3/3! + y^5/5! - ...

where 3! (3 factorial) is 3 × 2 × 1 = 6, 5! is 5 × 4 × 3 × 2 × 1 = 120, and so on.

In our problem, we have sin(5x), so we can substitute y = 5x into the Taylor series:

sin(5x) = (5x) - (5x)^3/3! + (5x)^5/5! - ...

sin(5x) = 5x - (125x^3)/6 + (3125x^5)/120 - ...

Now let's plug this back into our limit expression:

[(sin(5x) - 5x) / x^3] = [ (5x - (125x^3)/6 + (3125x^5)/120 - ...) - 5x ] / x^3

Notice that the '5x' and '-5x' cancel out! So we are left with:

= [ - (125x^3)/6 + (3125x^5)/120 - ... ] / x^3

Now, we can divide every term in the numerator by x^3:

= -125/6 + (3125x^2)/120 - ...

Finally, let's take the limit as x approaches 0. As x gets closer and closer to zero, terms with x^2 and higher powers will become very, very small and approach zero. So, we are left with:

lim (x→0) [ -125/6 + (3125x^2)/120 - ... ] = -125/6

Therefore, the correct answer is indeed **A) -125/6**.

It seems like your answer B, -5/3, might have come from perhaps missing a factor somewhere during calculation or maybe using an incorrect simplification. Double-check your steps when you were trying to solve it!

Don't worry, these limit problems can be a bit tricky sometimes! Keep practicing and you'll get the hang of it. Let me know if you want to go through another similar example or if you have any more questions! 😊
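If you'd like an independent sanity check on the explanation above, you can evaluate the expression numerically for a few small values of x and watch it approach -125/6. This is just a sketch using Python's standard math module; the function name limit_expression is made up for this example.

```python
import math


def limit_expression(x):
    """Evaluate (sin(5x) - 5x) / x^3 at a nonzero x."""
    return (math.sin(5 * x) - 5 * x) / x**3


expected = -125 / 6  # value given by the Taylor-series argument

# Shrink x toward 0 and compare the expression with the expected limit.
for x in (1e-1, 1e-2, 1e-3):
    print(f"x={x:g}  value={limit_expression(x):.6f}  expected={expected:.6f}")
```

The loop stops at 1e-3 on purpose: for much smaller x, subtracting two nearly equal floating-point numbers loses precision and the result becomes unreliable.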

Now that we have confirmed it works, let's use the portal.

👉REMOVE the following test code from answer.py:

if __name__ == "__main__":
    question = "Evaluate the limit: lim (x→0) [(sin(5x) - 5x) / x^3]"
    options = ["A) -125/6", "B) -5/3 ", "C) -25/3", "D) -5/6"]
    user_response = "B"
    answer = "A"
    region = "us-central1"
    result = answer_thinking(question, options, user_response, answer, region)

👉 Execute the following commands in the terminal to activate the virtual environment and start the portal:

gcloud config set project $(cat ~/project_id.txt)

cd ~/aidemy-bootstrap/portal/

source env/bin/activate

python app.py

👉 From the "Web preview" menu in the top right corner, choose Preview on port 8080. Cloud Shell will open a new browser tab or window with the web preview of your application.

👉 In the web application, click the "Quizzes" link, either in the top navigation bar or from the card on the index page.

👉 Answer all the quizzes, making sure you get at least one answer wrong, and then click Submit.

Rather than staring blankly while waiting for the response, switch over to the Cloud Editor's terminal. There you can observe the progress and any output or error messages generated by your function. 😁

👉 In your terminal, stop the locally running process by pressing Ctrl+C.

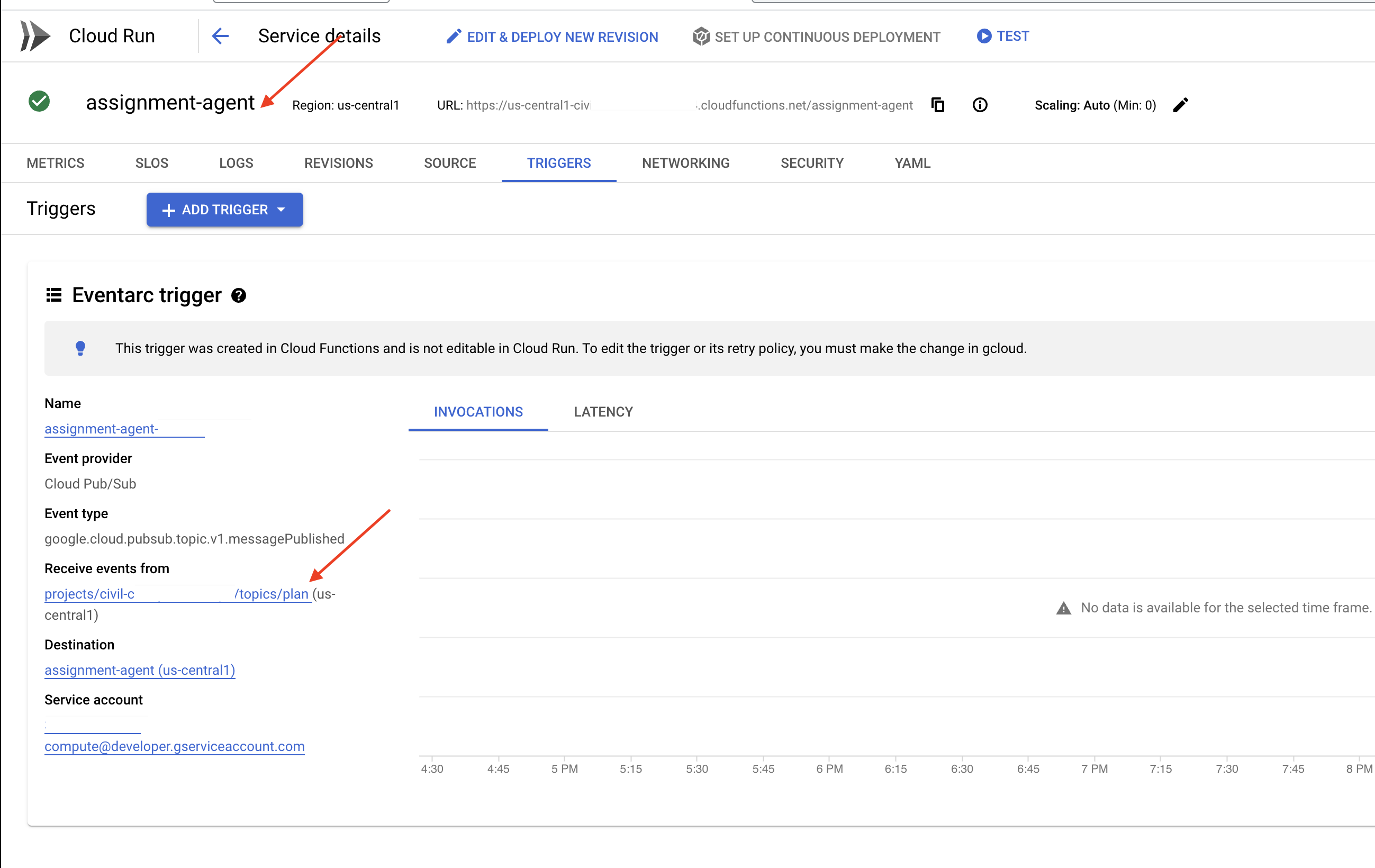

11. OPTIONAL: Orchestrating the Agents with Eventarc

So far, the student portal has been generating quizzes based on a default set of teaching plans. That's helpful, but it means our planner agent and portal's quiz agent aren't really talking to each other. Remember how we added that feature where the planner agent publishes its newly generated teaching plans to a Pub/Sub topic? Now it's time to connect that to our portal agent!

We want the portal to automatically update its quiz content whenever a new teaching plan is generated. To do that, we'll create an endpoint in the portal that can receive these new plans.

👉 In the Cloud Code Editor's Explorer pane, navigate to the portal folder.

👉 Open the app.py file for editing. REPLACE the ## REPLACE ME! NEW TEACHING PLAN line with the following code:

@app.route('/new_teaching_plan', methods=['POST'])
def new_teaching_plan():
    try:
        # Get data from Pub/Sub message delivered via Eventarc
        envelope = request.get_json()
        if not envelope:
            return jsonify({'error': 'No Pub/Sub message received'}), 400

        if not isinstance(envelope, dict) or 'message' not in envelope:
            return jsonify({'error': 'Invalid Pub/Sub message format'}), 400

        pubsub_message = envelope['message']
        print(f"data: {pubsub_message['data']}")

        data = pubsub_message['data']
        data_str = base64.b64decode(data).decode('utf-8')
        data = json.loads(data_str)

        teaching_plan = data['teaching_plan']

        print(f"File content: {teaching_plan}")

        with open("teaching_plan.txt", "w") as f:
            f.write(teaching_plan)

        print(f"Teaching plan saved to local file: teaching_plan.txt")

        return jsonify({'message': 'File processed successfully'})

    except Exception as e:
        print(f"Error processing file: {e}")
        return jsonify({'error': 'Error processing file'}), 500
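To see what the endpoint above expects, it can help to build a sample envelope by hand and decode it the same way. The sketch below uses only the standard library; the helper names make_push_envelope and extract_teaching_plan are hypothetical, and real Pub/Sub messages carry additional fields that this endpoint does not read.

```python
import base64
import json


def make_push_envelope(teaching_plan):
    """Build a dict shaped like the envelope the endpoint reads."""
    payload = json.dumps({"teaching_plan": teaching_plan}).encode("utf-8")
    return {"message": {"data": base64.b64encode(payload).decode("utf-8")}}


def extract_teaching_plan(envelope):
    """Mirror the endpoint's decoding steps: base64 -> JSON -> field."""
    data_str = base64.b64decode(envelope["message"]["data"]).decode("utf-8")
    return json.loads(data_str)["teaching_plan"]


envelope = make_push_envelope("Year 5 Science: atoms")
print(envelope)
print(extract_teaching_plan(envelope))
```

An envelope built this way could also serve as the JSON body of a local test request against /new_teaching_plan before Eventarc is wired up.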

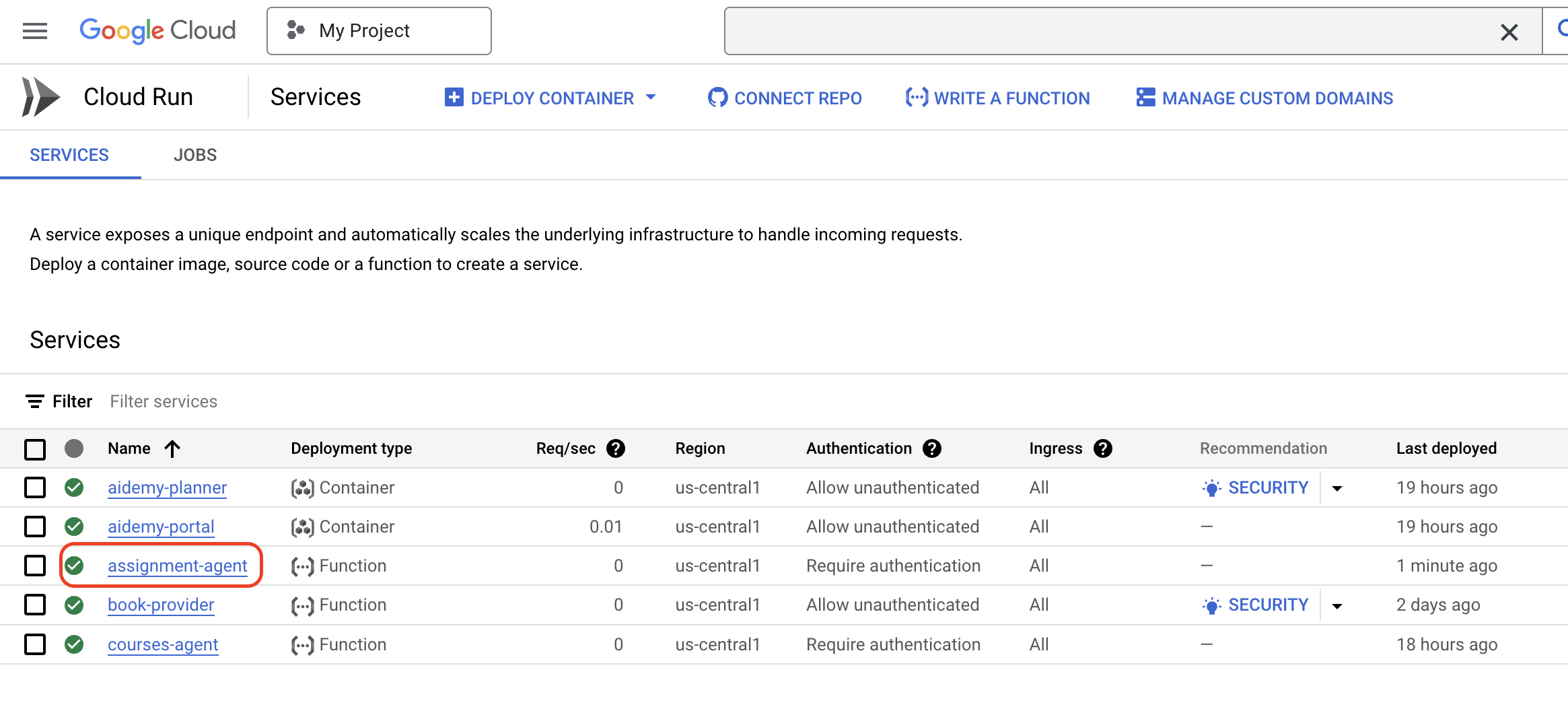

Rebuilding and Deploying to Cloud Run

You'll need to update and redeploy both our planner and portal agents to Cloud Run. This ensures they have the latest code and are configured to communicate via events.

👉 First, rebuild and push the planner agent image. Back in the terminal, run:

cd ~/aidemy-bootstrap/planner/

gcloud config set project $(cat ~/project_id.txt)

export PROJECT_ID=$(gcloud config get project)

docker build -t gcr.io/${PROJECT_ID}/aidemy-planner .

docker tag gcr.io/${PROJECT_ID}/aidemy-planner us-central1-docker.pkg.dev/${PROJECT_ID}/agent-repository/aidemy-planner

docker push us-central1-docker.pkg.dev/${PROJECT_ID}/agent-repository/aidemy-planner

👉 Next, build and push the portal agent image in the same way:

cd ~/aidemy-bootstrap/portal/

gcloud config set project $(cat ~/project_id.txt)

export PROJECT_ID=$(gcloud config get project)

docker build -t gcr.io/${PROJECT_ID}/aidemy-portal .

docker tag gcr.io/${PROJECT_ID}/aidemy-portal us-central1-docker.pkg.dev/${PROJECT_ID}/agent-repository/aidemy-portal

docker push us-central1-docker.pkg.dev/${PROJECT_ID}/agent-repository/aidemy-portal

👉 Navigate to Artifact Registry. You should see both the aidemy-planner and aidemy-portal container images listed under agent-repository.
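The image paths used in the commands above follow Artifact Registry's LOCATION-docker.pkg.dev/PROJECT/REPOSITORY/IMAGE:TAG pattern. As a small illustration, the sketch below composes those paths in Python; the function name artifact_registry_image and the project ID my-project are placeholders, not part of the workshop code.

```python
def artifact_registry_image(project_id, image, repository="agent-repository",
                            location="us-central1", tag="latest"):
    """Compose LOCATION-docker.pkg.dev/PROJECT/REPOSITORY/IMAGE:TAG."""
    return f"{location}-docker.pkg.dev/{project_id}/{repository}/{image}:{tag}"


# "my-project" is a placeholder project ID, not a real project.
for name in ("aidemy-planner", "aidemy-portal"):
    print(artifact_registry_image("my-project", name))
```

If an image does not show up where you expect, comparing the path you pushed against this pattern is a quick way to spot a wrong region or repository name.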

👉Back in the terminal, run this to update the Cloud Run image for the planner agent:

gcloud config set project $(cat ~/project_id.txt)

export PROJECT_ID=$(gcloud config get project)

gcloud run services update aidemy-planner \
    --region=us-central1 \
    --image=us-central1-docker.pkg.dev/${PROJECT_ID}/agent-repository/aidemy-planner:latest

You should see output similar to this:

OK Deploying... Done.

OK Creating Revision...

OK Routing traffic...

Done.

Service [aidemy-planner] revision [aidemy-planner-xxxxx] has been deployed and is serving 100 percent of traffic.

Service URL: https://aidemy-planner-xxx.us-central1.run.app

Make note of the Service URL; this is the link to your deployed planner agent. If you need to later determine the planner agent Service URL, use this command:

gcloud config set project $(cat ~/project_id.txt)

export PROJECT_ID=$(gcloud config get project)

gcloud run services describe aidemy-planner \
    --region=us-central1 \
    --format 'value(status.url)'

👉 Run this to create the Cloud Run service for the portal agent:

gcloud config set project $(cat ~/project_id.txt)

export PROJECT_ID=$(gcloud config get project)

gcloud run deploy aidemy-portal \
    --image=us-central1-docker.pkg.dev/${PROJECT_ID}/agent-repository/aidemy-portal:latest \
    --region=us-central1 \
    --platform=managed \
    --allow-unauthenticated \
    --memory=2Gi \
    --cpu=2 \
    --set-env-vars=GOOGLE_CLOUD_PROJECT=${PROJECT_ID}

You should see output similar to this:

Deploying container to Cloud Run service [aidemy-portal] in project [xxxx] region [us-central1]

OK Deploying new service... Done.

OK Creating Revision...

OK Routing traffic...

OK Setting IAM Policy...

Done.

Service [aidemy-portal] revision [aidemy-portal-xxxx] has been deployed and is serving 100 percent of traffic.

Service URL: https://aidemy-portal-xxxx.us-central1.run.app

Make note of the Service URL; this is the link to your deployed student portal. If you need to later determine the student portal Service URL, use this command:

gcloud config set project $(cat ~/project_id.txt)

export PROJECT_ID=$(gcloud config get project)

gcloud run services describe aidemy-portal \
    --region=us-central1 \
    --format 'value(status.url)'

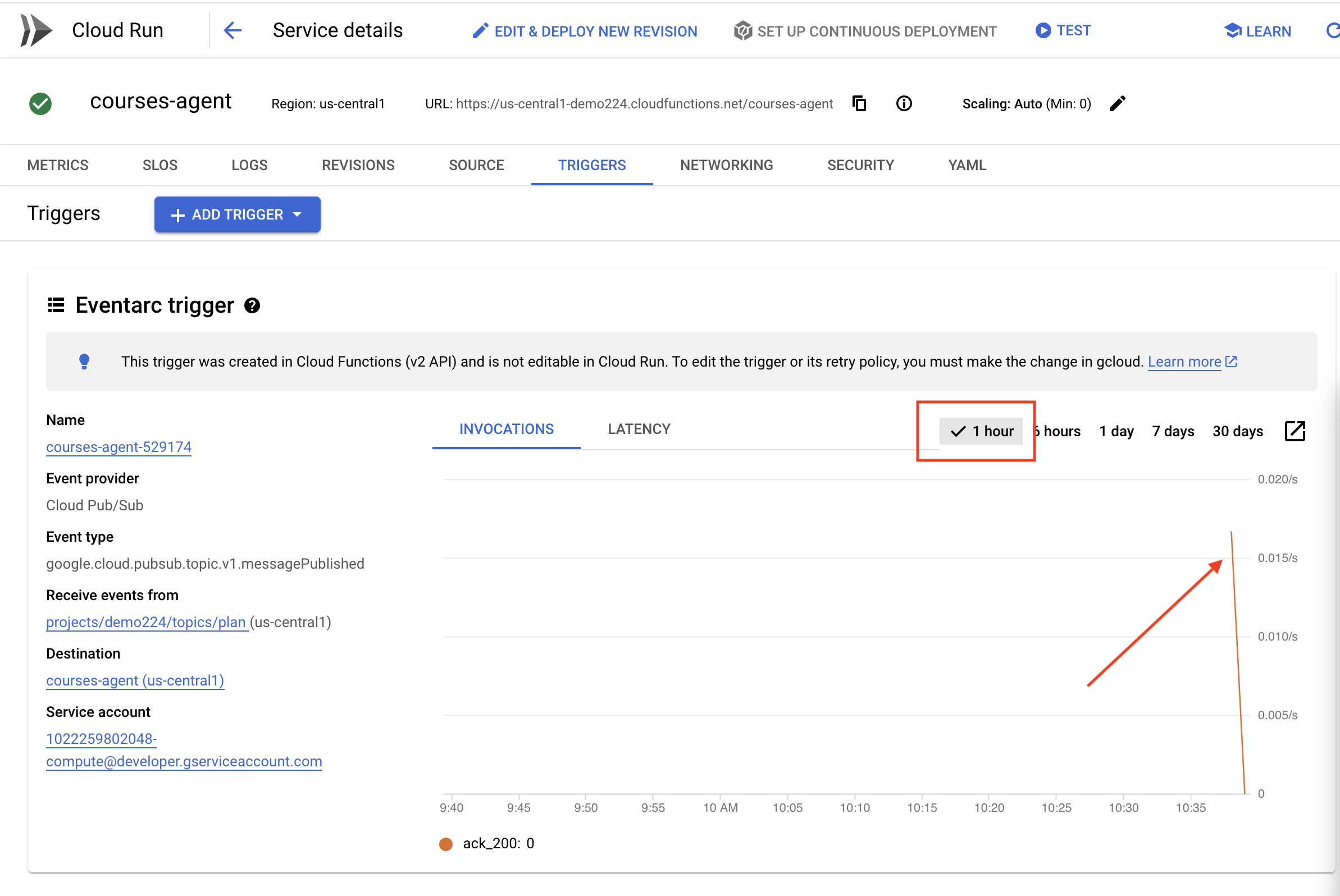

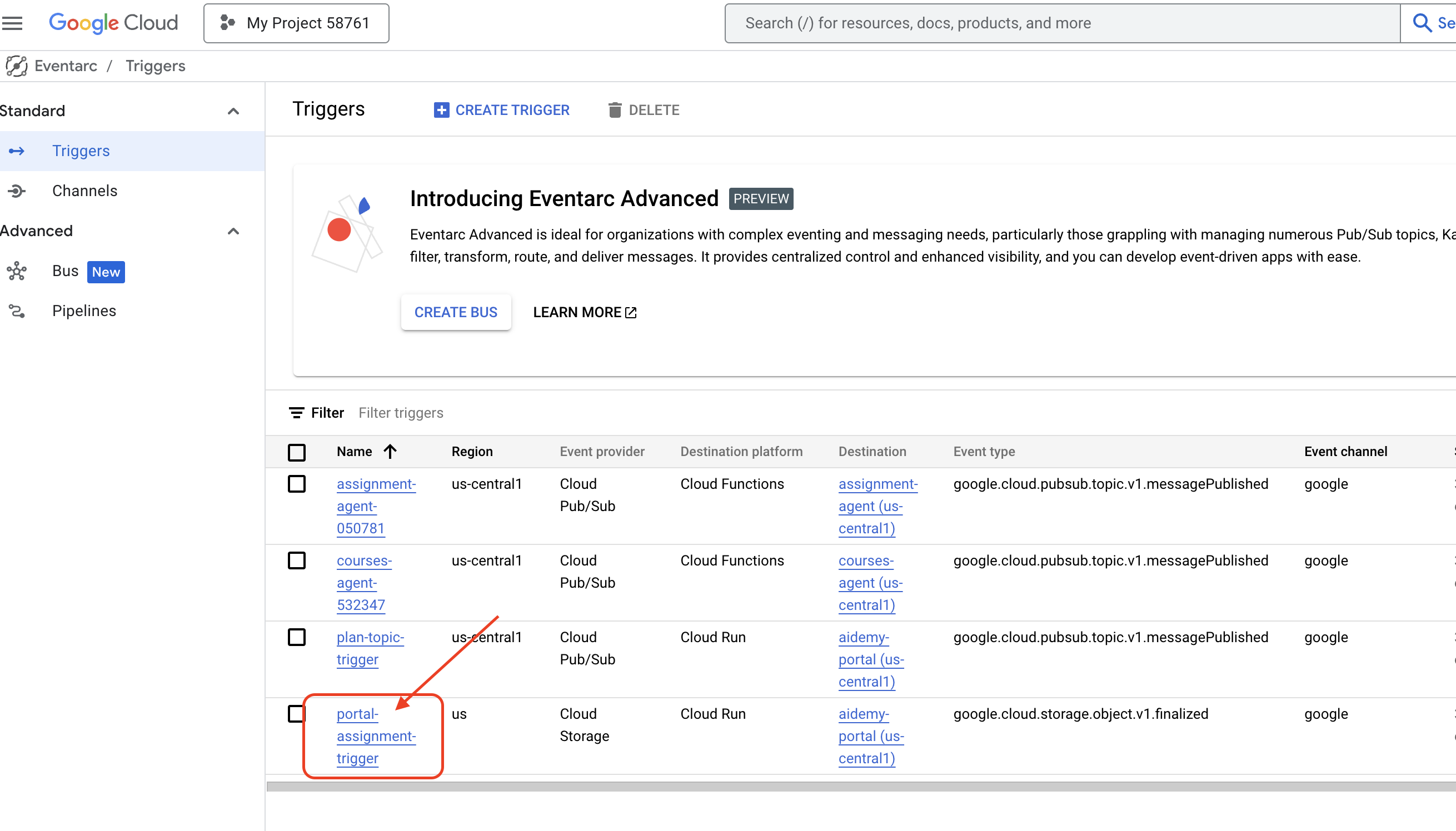

Creating the Eventarc Trigger

But here's the big question: how does this endpoint get notified when there's a fresh plan waiting in the Pub/Sub topic? That's where Eventarc swoops in to save the day!

Eventarc acts as a bridge, listening for specific events (like a new message arriving in our Pub/Sub topic) and automatically triggering actions in response. In our case, it will detect when a new teaching plan is published and then send a signal to our portal's endpoint, letting it know that it's time to update.

With Eventarc handling the event-driven communication, we can seamlessly connect our planner agent and portal agent, creating a truly dynamic and responsive learning system. It's like having a smart messenger that automatically delivers the latest lesson plans to the right place!
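To picture the pattern without any cloud services involved, here is a tiny in-memory sketch of "publish to a topic, trigger a handler". It is only an analogy: TinyEventBus and portal_new_teaching_plan are invented for this example and are not the Eventarc or Pub/Sub APIs.

```python
from collections import defaultdict


class TinyEventBus:
    """In-memory stand-in for a topic plus trigger; not the Eventarc API."""

    def __init__(self):
        self._handlers = defaultdict(list)

    def add_trigger(self, topic, handler):
        # Register a callable to run whenever a message hits the topic.
        self._handlers[topic].append(handler)

    def publish(self, topic, message):
        # Deliver the message to every registered handler and collect results.
        return [handler(message) for handler in self._handlers[topic]]


saved_plans = []


def portal_new_teaching_plan(message):
    """Hypothetical portal handler: remember the latest plan."""
    saved_plans.append(message["teaching_plan"])
    return "File processed successfully"


bus = TinyEventBus()
bus.add_trigger("plan", portal_new_teaching_plan)
results = bus.publish("plan", {"teaching_plan": "Year 5 Science: atoms"})
print(results, saved_plans)
```

The publisher never calls the portal directly; it only knows the topic name. That decoupling is the property the managed services give you across separately deployed agents.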

👉 In the Google Cloud console, go to Eventarc.

👉Click the "+ CREATE TRIGGER" button.

Configure the Trigger (Basics):

- Trigger name: plan-topic-trigger
- Trigger type: Google sources
- Event provider: Cloud Pub/Sub
- Event type: google.cloud.pubsub.topic.v1.messagePublished
- Cloud Pub/Sub Topic: select projects/PROJECT_ID/topics/plan
- Region: us-central1
- Service account:
  - GRANT the service account with role roles/iam.serviceAccountTokenCreator
  - Use the default value: Default compute service account
- Event destination: Cloud Run
- Cloud Run service: aidemy-portal
- Ignore error message: Permission denied on 'locations/me-central2' (or it may not exist).
- Service URL path: /new_teaching_plan

👉 Click "Create".

The Eventarc Triggers page will refresh, and you should now see your newly created trigger listed in the table.

Now, access the planner agent using its Service URL to request a new teaching plan.

👉 Run this in the terminal to determine the planner agent Service URL:

gcloud config set project $(cat ~/project_id.txt)

export PROJECT_ID=$(gcloud config get project)

gcloud run services list --platform=managed --region=us-central1 --format='value(URL)' | grep planner

👉 Navigate to the URL that was output and this time try Year 5, Subject Science, and Add-on Request atoms.

Then wait a minute or two. This delay is again due to the billing limitations of this lab; under normal conditions there shouldn't be a delay.

Finally, access the student portal using its Service URL.

👉 Run this in the terminal to determine the student portal Service URL:

gcloud config set project $(cat ~/project_id.txt)

export PROJECT_ID=$(gcloud config get project)

gcloud run services list --platform=managed --region=us-central1 --format='value(URL)' | grep portal

You should see that the quizzes have been updated and now align with the new teaching plan you just generated! This demonstrates the successful integration of Eventarc in the Aidemy system!

Congratulations! You've successfully built a multi-agent system on Google Cloud, leveraging event-driven architecture for enhanced scalability and flexibility! You've laid a solid foundation, but there's even more to explore. To delve deeper into the real benefits of this architecture, discover the power of Gemini 2's multimodal Live API, and learn how to implement single-path orchestration with LangGraph, feel free to continue on to the next two chapters.

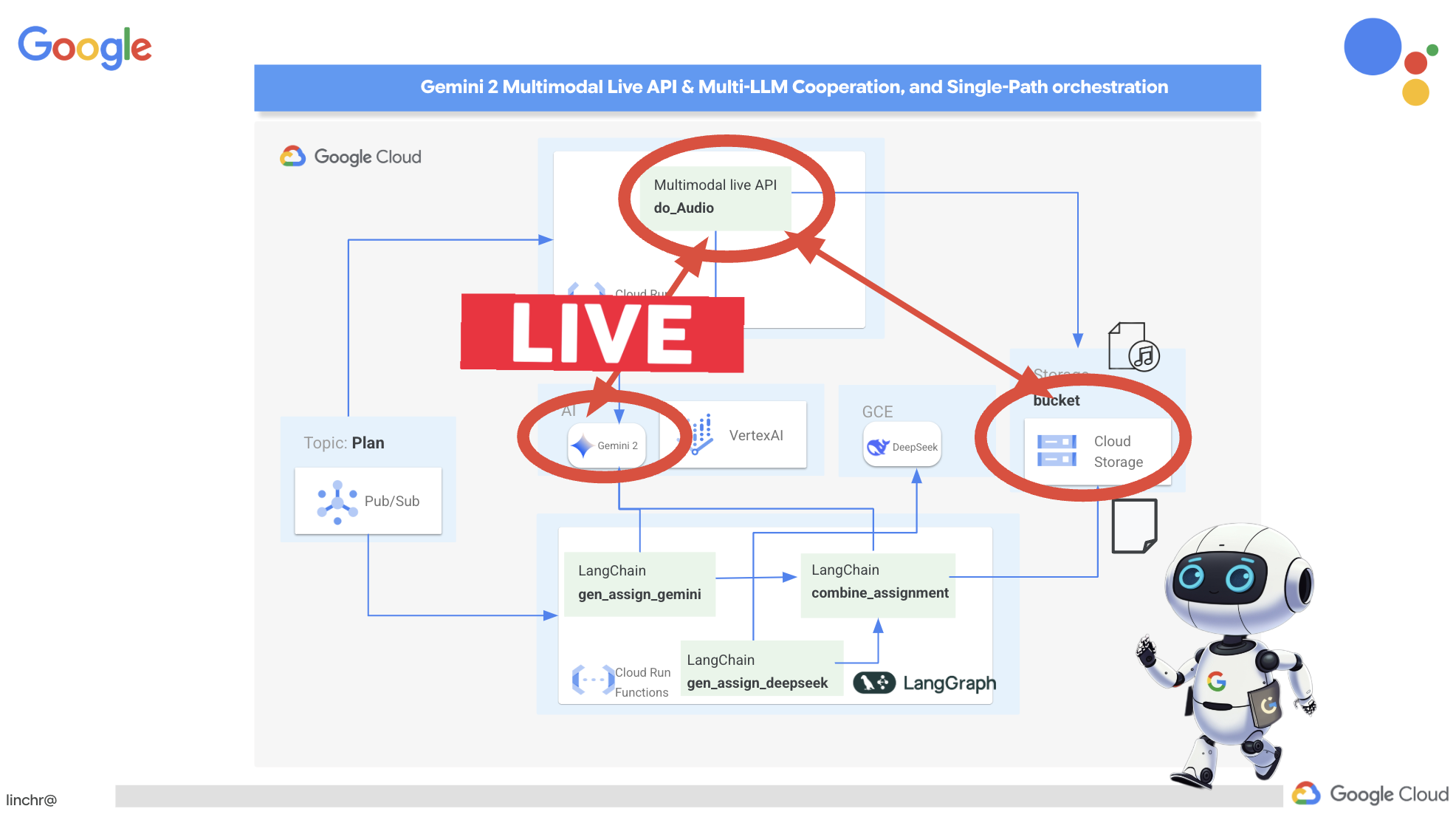

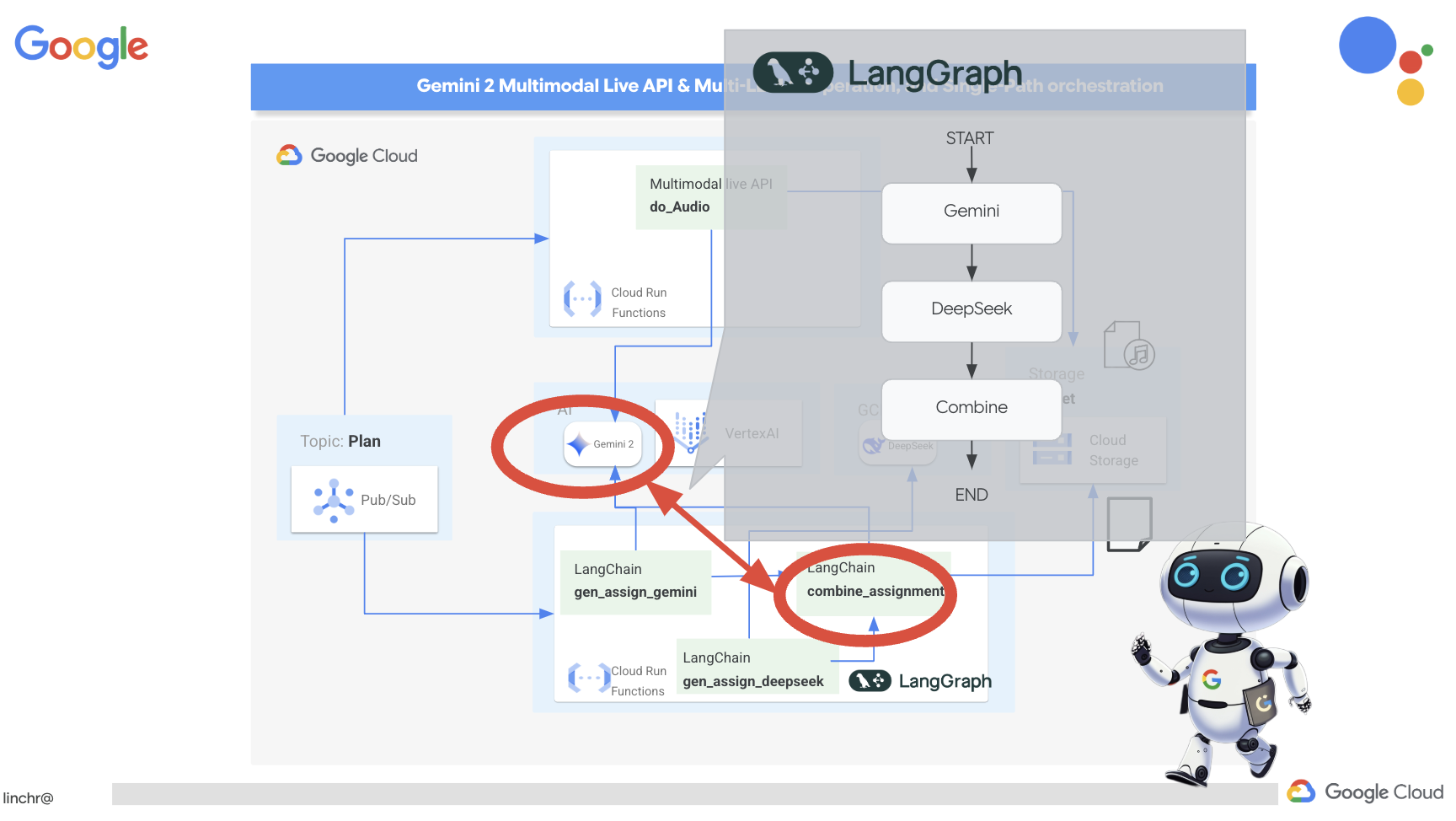

12. OPTIONAL: Audio Recaps with Gemini